There currently exists no machine capable of superhuman feats of intelligence. I’m sorry to be the one who bursts your bubble but, at least for the time being, that’s the way the universe works.

It might seem like AI is smarter than humans in some ways. For example, the powerful neural networks used by big tech can sort through millions of files in a matter of seconds, a feat that would take humans more than a single lifetime.

But that’s not a superhuman feat of intellect. It’s trading human attention for machine speed. On a file-by-file basis, there is no attention-based task at which a machine can beat a human, barring chance.

Let’s use computer vision as an example. You can train an AI model to recognize images of cats. If you have 10 million images and you need to know approximately how many of those have cats in them, the AI should be able to do in seconds what would take humans many years to accomplish.

However, if you took 100 school-aged children and had them look at 100 images to determine which images had cats, no neural network in existence today could consistently outperform the children.

The tradeoff

In this particular task, we can point to the fact that AI struggles to identify subjects when the subject isn’t posed in a typical pattern. So, for example, if you dressed a cat up like a clown, the average child would still understand that it’s a cat, but an AI that wasn’t trained on cats dressed up like other things might struggle or fail at the task.

The tradeoff we’re talking about, when you sacrifice accuracy for speed, is at the crux of the problem with bias in AI.

Imagine there were a statistically significant number of images in our deck of 10 million that depicted cats dressed like clowns and the model we were using struggled with costumed felines.

The cats-dressed-as-clowns might be in the minority of cat images, but they’re still cats.

This means, unless the model is particularly robust against clownery, the entire population of cats dressed like clowns is likely to be omitted from the AI’s count.
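To make the undercount concrete, here is a rough sketch with made-up numbers. The share of images containing cats, the share of those cats in clown costumes, and both recall figures are assumptions chosen purely for illustration, not measurements from any real model. The function name `estimated_cat_count` is likewise hypothetical.

```python
def estimated_cat_count(total_images, cat_share, clown_share,
                        recall_plain, recall_clown):
    """Return (true_cats, counted_cats, missed_clown_cats) for a hypothetical model.

    All inputs are illustrative assumptions:
      cat_share    - fraction of all images that contain a cat
      clown_share  - fraction of cat images where the cat wears a clown costume
      recall_plain - fraction of ordinary cats the model finds
      recall_clown - fraction of costumed cats the model finds
    """
    true_cats = total_images * cat_share
    clown_cats = true_cats * clown_share
    plain_cats = true_cats - clown_cats

    # The model's count is the sum of what it finds in each subgroup.
    counted = plain_cats * recall_plain + clown_cats * recall_clown
    missed_clown = clown_cats * (1 - recall_clown)
    return round(true_cats), round(counted), round(missed_clown)


true_cats, counted, missed = estimated_cat_count(
    total_images=10_000_000,
    cat_share=0.20,      # assumed
    clown_share=0.05,    # assumed
    recall_plain=0.98,   # assumed
    recall_clown=0.10,   # assumed
)
print(f"true cats: {true_cats:,}")
print(f"counted cats: {counted:,} ({counted / true_cats:.1%} of the truth)")
print(f"costumed cats missed: {missed:,}")
```

With these assumed inputs, the overall count lands at roughly 94% of the true figure, which looks respectable, while nine out of ten costumed cats go uncounted. The headline number hides the fact that the errors are concentrated in one subgroup.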

Of course, it would be trivial to train an AI to recognize cats dressed as clowns. But then there’ll be another edge-case you’ll have to train the AI for, and one after that.

A human child doesn’t suffer from the same problem. You can dress a cat up any way you want, and as long as you haven’t occluded the cat’s identifying features, the kid’s going to understand it’s a cat in a costume.

This problem, at scale, is exactly why driverless cars are still not available outside of well-regulated tests.

Machine superiority

Machine superiority could be described as a paradigm in which computers know what’s best for humans and have the ability to control us through non-violent means.

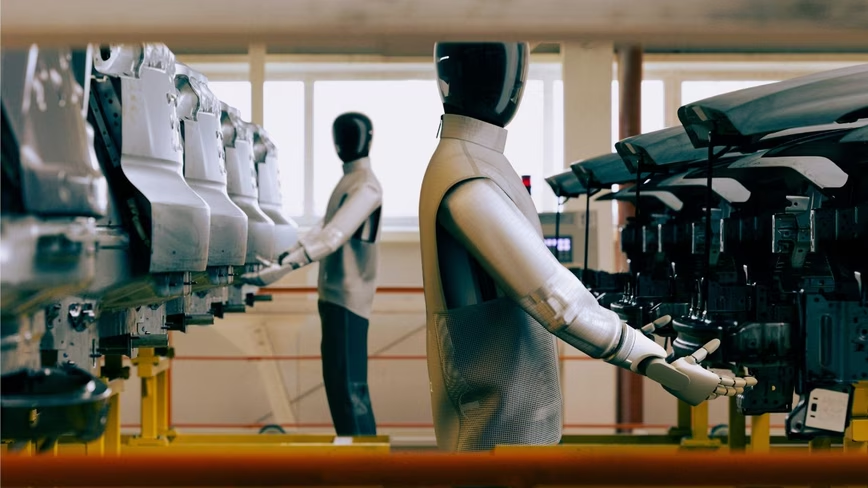

If legions of killer robots suddenly travel back in time to subjugate us with laser guns, that’s a different kind of superiority — one that’s beyond the scope of this non-fiction article.

The big idea behind machine superiority is that one day, we’ll create an AI that’s so intelligent it surpasses humans in its ability to reason, plan, and adapt.

The reason it’s such an attractive concept is two-fold:

- It imagines a future where humans have finally solved all the world’s problems… by inventing a magic box to solve them for us.

- We can tell scary “what if” stories about what would happen if the magic box were evil.

But currently, there exists no machine that can outperform a typical human at any given task, with the exceptions of digital speed and chance.

When humans and machines are given a task that involves occluded information (for example, playing chess or determining whether someone is a Democrat or Republican by looking at a picture of their face), the ability to predict the next segment in a linear output can often trump intelligence.

Tools of the trade

Here’s what I mean: it doesn’t take intelligence for DeepMind to beat humans at chess or Go; it takes a strict adherence to probability and the ability to predict the next move. A human can look at a chess board and imagine X number of moves in a given amount of time. An AI can imagine, theoretically, all the possible moves in a given amount of time.
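As a toy illustration of what "imagining all the possible moves" looks like in code, here is a minimal brute-force search for tic-tac-toe, a game small enough to enumerate completely. This is a sketch of exhaustive minimax search, not a description of how DeepMind's systems work; the board encoding and the names `winner` and `best_outcome` are assumptions made up for this example.

```python
from functools import lru_cache

# Index triples that form a winning line on a 3x3 board stored as a 9-char string.
WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return 'X' or 'O' if that player has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def best_outcome(board, player):
    """Value of the position for X under perfect play: +1 X wins, 0 draw, -1 O wins."""
    won = winner(board)
    if won == "X":
        return 1
    if won == "O":
        return -1
    if "." not in board:
        return 0  # board full, nobody won

    other = "O" if player == "X" else "X"
    # Try every legal move and evaluate each resulting position recursively.
    outcomes = [best_outcome(board[:i] + player + board[i + 1:], other)
                for i, cell in enumerate(board) if cell == "."]
    # X picks the move that is best for X; O picks the move that is worst for X.
    return max(outcomes) if player == "X" else min(outcomes)


print(best_outcome("." * 9, "X"))
```

The search looks at every continuation and applies a fixed rule, with no understanding of the game involved. It works here only because tic-tac-toe has a few thousand positions; chess and Go are far too large to enumerate this way, so practical systems have to combine search with statistical estimates of which moves are promising.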

That doesn’t mean the AI isn’t one of the most incredible feats of computer science humanity has ever pulled off. It just means that it doesn’t have a superhuman level of intelligence. It’s actually nowhere near human-level.

And there’s no human in the world who can look at another person’s face and determine their politics. We can guess, and AI can often guess more accurately than we do. But people’s faces don’t magically change; their politics often do.

A good way to look at artificial intelligence is to think of it as a tool. It’s easier for a human to chop down a tree with an ax than it is without one, but it’s impossible for an ax to do anything without a human. The ax has superhuman resilience and tree-chopping skills, but you wouldn’t call it superhuman.

An AI that can spot cats in images really fast is a good tool to have if you ever need to spot a cat, but it’s not something you’d refer to as superhuman. The same goes for one that can beat us at games.

Machines are great at certain tasks. But we’re a long way from the emergence of a supreme digital being.

We’re going to have to figure things out for ourselves, at least for the time being. Luckily, we have AI to assist us.

Neural’s “A beginner’s guide to AI” series began way back in 2018, making it one of the longest-running series on the basics of artificial intelligence around! You can check out the rest below:

- A beginner’s guide to AI: Neural networks

- A beginner’s guide to AI: Natural language processing

- A beginner’s guide to AI: Computer vision and image recognition

- A beginner’s guide to AI: Algorithms

- A beginner’s guide to AI: Human-level machine intelligence

- A beginner’s guide to AI: The difference between video game AI and real AI

- A beginner’s guide to AI: The difference between human and machine intelligence

- A beginner’s guide to AI: Ethics in artificial intelligence
