Microsoft might have just made the best and easiest camera app for the iPhone and iPad.

That’s a fairly bold claim, but there’s some real substance behind it, and we’ve been able to try it out briefly beforehand. It’s called Microsoft Pix.

Here’s the gist of it: You take an image, and Microsoft uses artificial intelligence to dynamically adjust your camera settings to best fit the scene.

There are no exposure controls, no HDR-specific mode, no settings whatsoever. Just point and shoot, and all the processing happens immediately.

Microsoft says Pix works as if your photos were taken and chosen by a professional photographer. I just so happen to be a professional photographer, and, well, I’m inclined to agree (if professional photographers used smartphones, that is).

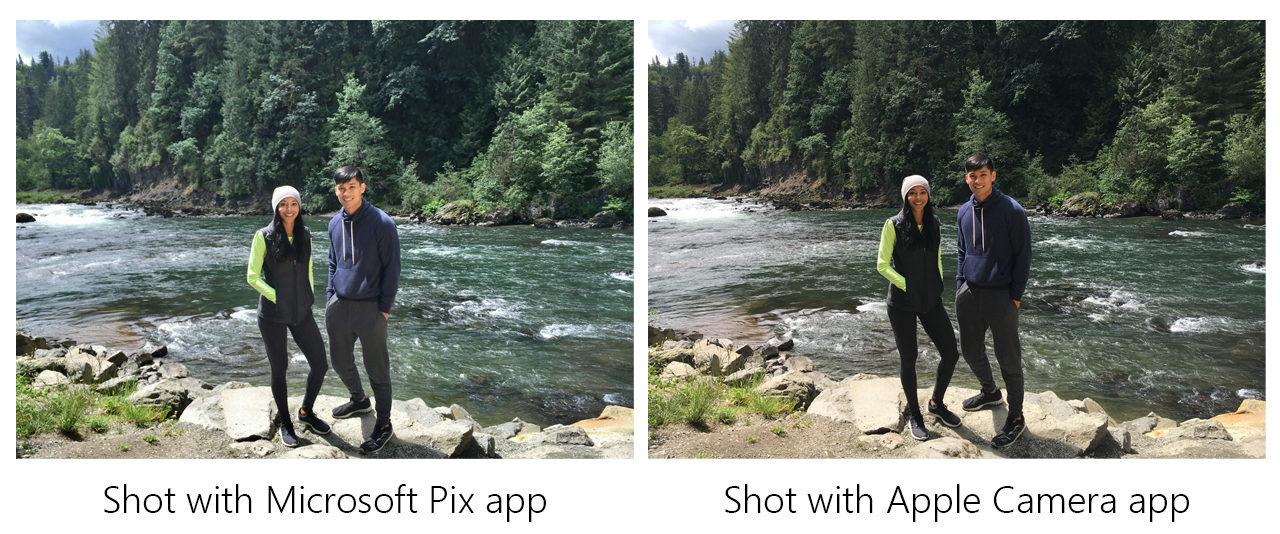

One of the most common complaints I see from iPhone users – and smartphone photographers in general – is trouble with heavily backlit scenes (where a brighter object or environment sits behind your subject). Think of someone indoors standing in front of a window on a bright day, or a silhouette during sunset.

For example, here’s one sample scene shot with the standard iOS camera:

And here’s one taken with Microsoft Pix, no adjustments necessary:

Note how Pix isn’t simply making the entire image brighter, which you could do on your own using the iOS app. Instead it’s selectively brightening the parts of the image that need to be brightened. That’s something that would normally take you a couple of minutes to do in editing software, especially with four different people.

Pix detects there are actual humans in the shot, decides that’s the most important part of the image, and adjusts exposure, contrast and HDR settings in order to properly expose them without completely blowing out the highlights. All this processing is happening locally, by the way, not in the cloud.

The point is simplifying photography down to just its shutter button. This is aided by the camera’s clever ‘best shot’ mode, which picks the best shot out of a burst (bet you never would’ve guessed that one). It’s far from the first app to do this, but it’s the smartest implementation I’ve seen.

The camera is actually always taking photos while the app is open – before you even press the button – so it can salvage a shot if you’re too late or too early on the shutter. It then tries to pick the best image based on a number of parameters. Are everyone’s eyes open? Are your subjects smiling? Did they make a cool pose or motion?

The AI calculates these factors and chooses the best out of a set of 10 frames. Depending on the image, it might offer more than one best shot if the scenes are different enough.

Another benefit to this is that you don’t fill up your camera roll trying to get the right moment. Since Pix is always taking photos, instead of sifting through a long burst, you’re offered no more than three worthwhile shots, and only if there’s something significantly different about them.
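Microsoft hasn’t published how Pix actually scores frames, so the following is only a toy sketch of the general idea described above: rank the frames in a burst, then keep at most three that differ enough from each other. The `Frame` fields, the score weights, the `signature` descriptor and the `min_difference` threshold are all hypothetical names and values made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    index: int
    eyes_open: float   # 0..1, hypothetical "eyes open" confidence
    smile: float       # 0..1, hypothetical "smiling" confidence
    sharpness: float   # 0..1, hypothetical sharpness measure
    signature: tuple   # hypothetical coarse descriptor of the scene


def score(frame):
    # Arbitrary weights chosen for this sketch, not Pix's real ones.
    return 0.4 * frame.eyes_open + 0.3 * frame.smile + 0.3 * frame.sharpness


def difference(a, b):
    # Mean absolute difference between two scene descriptors.
    return sum(abs(x - y) for x, y in zip(a.signature, b.signature)) / len(a.signature)


def pick_best_shots(frames, max_shots=3, min_difference=0.25):
    """Return the top-scoring frame, plus up to two more that look different enough."""
    chosen = []
    for frame in sorted(frames, key=score, reverse=True):
        if len(chosen) == max_shots:
            break
        # Skip frames that are near-duplicates of one already chosen.
        if all(difference(frame, kept) >= min_difference for kept in chosen):
            chosen.append(frame)
    return chosen
```

With a burst of ten near-identical frames, this returns a single best shot; a second or third only shows up when its descriptor is far enough from the ones already kept.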

The camera also uses its always-shooting system to help reduce noise. Aside from the standard processing in your iPhone, the camera mashes up the 10 frames it saves when you take a photo to detect and reduce noise (although we weren’t able to try out this aspect in depth ourselves).
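To see why combining a burst helps, here is a minimal sketch of plain frame averaging, the textbook way to reduce random noise across several shots of the same scene. It is an assumption that Pix does anything like this; Microsoft hasn’t detailed its method, and a real pipeline would also need to align the frames first. The flat grey "scene" and the noise level are invented for the demo.

```python
import random


def average_frames(frames):
    """Average already-aligned frames pixel by pixel (each frame is a list of floats)."""
    if not frames:
        raise ValueError("need at least one frame")
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def noisy_frame(scene, sigma, rng):
    # Simulate sensor noise by adding Gaussian noise to every pixel.
    return [pixel + rng.gauss(0, sigma) for pixel in scene]


def rms_error(frame, scene):
    # Root-mean-square difference between a frame and the noise-free scene.
    return (sum((a - b) ** 2 for a, b in zip(frame, scene)) / len(scene)) ** 0.5


rng = random.Random(0)
scene = [100.0] * 2000  # hypothetical flat grey patch
burst = [noisy_frame(scene, 10.0, rng) for _ in range(10)]

single_error = rms_error(burst[0], scene)
merged_error = rms_error(average_frames(burst), scene)
print(f"one frame: {single_error:.2f}, ten frames averaged: {merged_error:.2f}")
```

Because the noise in each frame is independent, averaging ten of them should cut the error to roughly a third of a single frame’s.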

Perhaps the best example of the ingenuity here, however, is with Live Images, Microsoft’s take on Apple’s Live Photos. Instead of the blurry mess of the average Live Photo, Microsoft automatically trims the images based on what it thinks you actually care about (e.g., not your shoes as you return your phone to your pocket). You also don’t need to turn the feature on and off to save space – Pix automatically creates Live Images only when it thinks you might want them.

Moreover, the app uses tech from Microsoft’s own Hyperlapse to stabilize your Live Images. It’s smart enough to know when you’re purposefully panning the camera, for example, versus when you’re trying to keep the camera stable to capture a subject. In the latter scenario, it pretty much looks like you’re holding your phone on a tripod.

In other scenarios, the app basically creates a cinemagraph:

Speaking of hyperlapses, Microsoft is also including that video feature on iOS for the first time via Pix (a separate Hyperlapse app is already available on Android and Windows Phone). It works for both new and old videos, and you can also use the video function to record super-stable videos in real time. Again, that works a lot better than the default iOS implementation in my testing.

Here’s a sample video from Microsoft using the default camera on an iPhone 6S on a dual-phone rig:

And here’s that same recording on an adjacent iPhone 6S, using Pix:

It’s pretty obvious which one is better. And sure, you can stabilize the iPhone video later, but that takes away from the simplicity of it all.

Pix is definitely not always perfect, mind you. Sometimes having everything well-exposed can make an image less punchy. Sometimes the iOS camera seems to offer slightly better colors. Those are small quibbles and not the norm.

For me, the biggest issue is that while it makes the average shot better, the near-total lack of controls feels limiting for those who try to get a bit more artsy. What if you purposefully want your subject to be underexposed for a cool silhouette?

Though I can always just use another app when I need more power, I’d like to see a manual mode that still pulls in AI features, but gives me more control when I need it. As it stands, the only changes you can really make are some basic edits and filters after a shot has been recorded.

Still, at least it gives you a better starting point for later edits, and in any case, I’m not really who Pix is being aimed at right now. This is for people who just open their camera app and start shooting, the ones who probably never touch the HDR setting or exposure controls at all in the default camera app.

If that’s you, you’ll want to give Pix a try. It works on everything from the iPhone 5S or iPad Air and beyond, and you can download it from the App Store now.

(And don’t worry team Google, an Android version is coming soon.)
