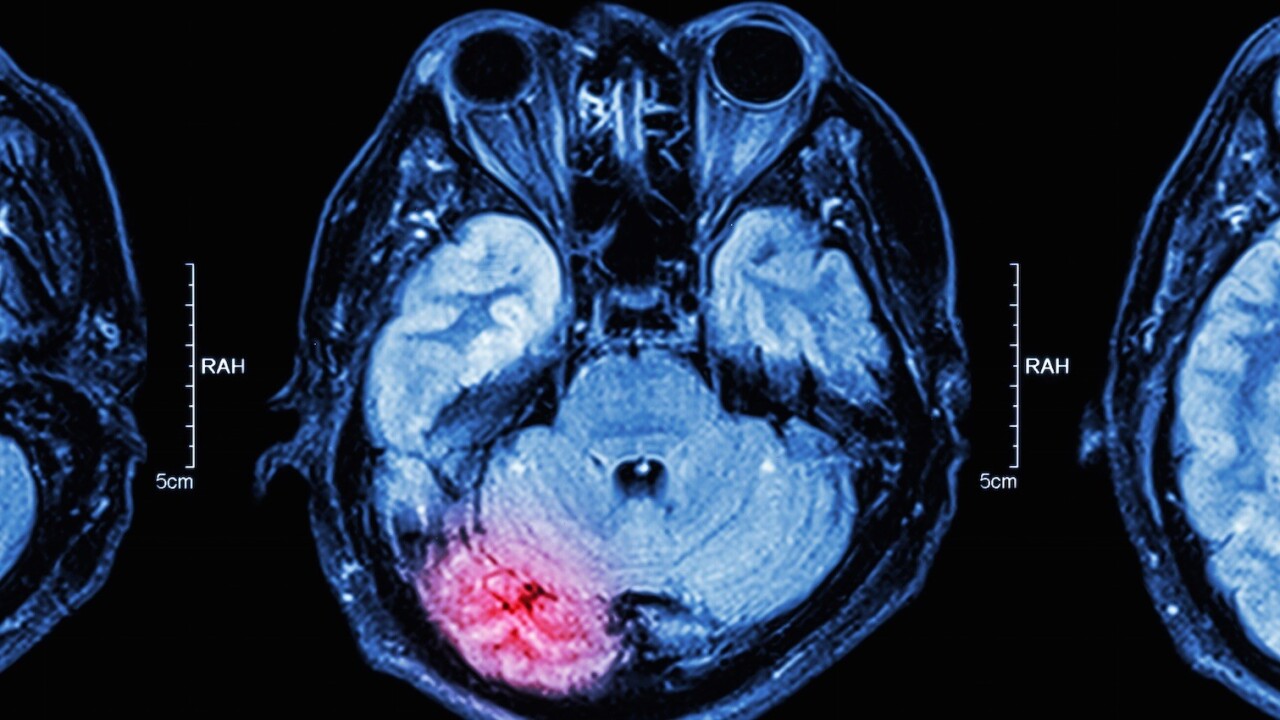

What if hackers could one day control your mind, or at least take a glimpse inside, through the use of sophisticated malware?

It’s a scenario that sounds straight out of a science fiction novel, but we’re far closer than you might think. As scientists continue to make breakthroughs in implanting and controlling brain-computer interfaces, we’re on the verge of this becoming reality, and researchers need to act fast to ensure our brain signals aren’t used against us.

According to University of Washington Biorobotics Lab engineer Howard Chizeck:

“There’s actually very little time. If we don’t address this quickly, it’ll be too late.”

Strictly speaking, brain control is already here. Doctors are using it in a medical setting, game developers are using it for more precise control (without controllers) and even the US military is experimenting with the technology. It’s not a matter of if someone can control you using nothing but a computer, but when.

Controlling a body part, or an entire human, involves tapping into brain signals, such as those recorded by EEG (electroencephalography), and manipulating them to complete a desired action (as in the case of a man who moved bionic fingers after a brain implant). The problem with the (relative) simplicity of bionics and human control through computers is that we haven’t yet figured out how to give computers access to just the brain waves they need while blocking out the rest.

Fellow Biorobotics Lab engineer Tamara Bonaci explains:

“Broadly speaking, the problem with brain-computer interfaces is that, with most of the devices these days, when you’re picking up electric signals to control an application… the application is not only getting access to the useful piece of EEG needed to control that app; it’s also getting access to the whole EEG. And that whole EEG signal contains rich information about us as persons.”

It’s not just hackers we have to worry about, either. “You could see police misusing it, or governments—if you show clear evidence of supporting the opposition or being involved in something deemed illegal,” suggested Chizeck. “This is kind of like a remote lie detector; a thought detector.”

While engineers are working on the problem, it’s clear that a temporary fix could be achieved through legislation — although it’s not all that likely that hackers are going to start abiding by laws anytime soon.

A more permanent fix, and one the University of Washington team is considering, is a “BCI Anonymizer,” a tool the team compared to a smartphone app in that it would receive only the limited information it needs to run, but wouldn’t be able to access the device’s operating system. It would effectively act as a filter that allows needed brainwaves to pass while blocking others.
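To make the filtering idea concrete, here is a minimal sketch in Python. It is not the University of Washington team’s implementation, which the article does not describe in detail. The names `band_power` and `BandPowerAnonymizer`, the 8–12 Hz band and the threshold value are all hypothetical choices for illustration. The sketch assumes the app only needs a yes/no command derived from one frequency band, so that is all the filter hands over; the raw EEG window never leaves it.

```python
import math
from typing import Sequence


def band_power(samples: Sequence[float], fs: float, lo_hz: float, hi_hz: float) -> float:
    """Mean power of the DFT bins whose frequency falls in [lo_hz, hi_hz].

    Uses a plain (slow) discrete Fourier transform so the sketch needs
    nothing beyond the standard library.
    """
    n = len(samples)
    powers = []
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if lo_hz <= freq <= hi_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            powers.append((re * re + im * im) / (n * n))
    return sum(powers) / len(powers) if powers else 0.0


class BandPowerAnonymizer:
    """Hypothetical filter that sits between the EEG device and the app.

    The app calls command() and receives a single boolean. It never gets
    the raw window, so it cannot mine the rest of the signal.
    """

    def __init__(self, fs: float, lo_hz: float, hi_hz: float, threshold: float) -> None:
        self._fs = fs
        self._lo_hz = lo_hz
        self._hi_hz = hi_hz
        self._threshold = threshold  # illustrative value, not a calibrated one

    def command(self, raw_window: Sequence[float]) -> bool:
        # Only this one derived bit crosses the boundary to the app.
        power = band_power(raw_window, self._fs, self._lo_hz, self._hi_hz)
        return power > self._threshold
```

An app would construct the filter once, for example `BandPowerAnonymizer(fs=128.0, lo_hz=8.0, hi_hz=12.0, threshold=0.01)`, and then call `command(window)` on each incoming window of samples. Whether a single derived feature leaks less than the full EEG in practice is exactly the kind of question the lab’s tests would need to answer.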

Chizeck says the lab is currently running more tests to see if the BCI Anonymizer is feasible, and what level of filtering it offers.

Again, we could be decades away from this type of scenario, but we’re plausibly within a 10-year timeline of more advanced implants being capable of controlling more advanced motor function, thought and possibly even speech. By doing the work now, the team could be saving us from a particularly catastrophic failure of human-machine cooperation in the not-so-distant future.
