The first known use of the word “computer” was in 1613 in a book called The Yong Mans Gleanings by English writer Richard Braithwaite. In it he said, “I haue read the truest computer of Times, and the best Arithmetician that euer breathed, and he reduceth thy dayes into a short number.” (The spelling mistakes were all deliberate, BTW; it was a simpler time back then.)

Braithwaite was referring to a person who carried out calculations, or computations. Today, most of that work is handed over to the metal and plastic slabs we have on our desks.

But how did a box of cables and circuits become so dang smart? Here we comb through the annals of history and the lesser-known corners of computer programming to find 10 tidbits every computer programmer should know.

1. The first “pre-computers” were powered by steam

In 1801, a French weaver and merchant, Joseph Marie Jacquard, invented a power loom that could base the design of a fabric upon punched wooden cards.

Fast forward to the 1830s, and the world marvelled at a device as large as a house and powered by six steam engines. It was invented by Charles Babbage, the father of computing, and he called it the Analytical Engine.

Babbage used punch cards to make the monstrous machine programmable. The machine consisted of four parts: the mill (analogous to a CPU), the store (analogous to memory and storage), the reader (input) and the printer (output).

It is the reader that makes the Analytical Engine innovative. Borrowing the card-reading technology of the Jacquard loom, it used three different kinds of punched cards: operation cards, number cards and variable cards.

![flat,1000x1000,075,f.u1[1]](https://media.thenextweb.com/2016/02/flat1000x1000075f.u11.avif)

Babbage sadly never managed to build a working version, because of ongoing conflicts with his chief engineer. It seems even back then, CEOs and devs didn’t get along.

2. The first computer programmer was a woman

In 1843, Ada Lovelace, a British mathematician, published an English translation of an Analytical Engine article written by Luigi Menabrea, an Italian engineer. To her translation, she added her own extensive notes.

![Ada_Lovelace[1]](https://media.thenextweb.com/2016/02/Ada_Lovelace1.avif)

In one of her notes, she described an algorithm for the Analytical Engine to compute Bernoulli numbers. Since the algorithm was considered to be the first specifically written for implementation on a computer, she has been cited as the first computer programmer.
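For a sense of what such a computation involves, here is a minimal modern sketch in Python. It is not Lovelace's program from her notes: it uses a standard textbook recurrence instead, and the function name `bernoulli_numbers` and the sign convention B_1 = -1/2 are assumptions made for illustration.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(n):
    """Return [B_0, ..., B_n] as exact fractions (convention: B_1 = -1/2).

    Hypothetical helper for illustration; not Lovelace's original method.
    """
    b = [Fraction(1)]  # B_0 = 1
    for m in range(1, n + 1):
        # Recurrence: sum over k = 0..m of C(m+1, k) * B_k equals 0.
        # Everything except the B_m term is already known, so solve for B_m.
        known = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-known / (m + 1))
    return b


if __name__ == "__main__":
    for index, value in enumerate(bernoulli_numbers(8)):
        print(f"B_{index} = {value}")
```

Exact fractions are used rather than floats because the values are rational numbers and rounding errors would otherwise build up through the recurrence.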

Did Lovelace go on to a life of TED Talks (or whatever the Victorian equivalent was)? Sadly not. She died at the age of 36, but her legacy thankfully lives on.

3. The first computer “bug” was named after a real bug

While the term ‘bug’, in the sense of a technical error, was coined by Thomas Edison in 1878, it took nearly 70 years for someone else to popularize it.

In 1947, Grace Hopper, who later became a rear admiral in the US Navy, recorded the first computer ‘bug’ in her log book while working on a Mark II computer.

A moth was discovered stuck in a relay, hindering the machine’s operation. By the time it was recorded in her notes, the moth had been ‘debugged’ from the system.

In her note, she wrote, “First actual case of bug being found.”

![09September_1[1]](https://media.thenextweb.com/2016/02/09September_11.avif)

4. The first digital computer game never made any money

The first digital computer game, considered the forefather of today’s action video games, wasn’t particularly successful.

In 1962, Steve Russell, a computer programmer from the Massachusetts Institute of Technology (MIT), and his team took 200 man-hours to create the first version of Spacewar.

Using the front-panel test switches, two players took control of two tiny spaceships. Your mission was to destroy your opponent’s spaceship before it destroyed you.

In addition to avoiding your opponent’s shots, you also had to avoid the small white dot at the centre of the screen, which represented a star. If you bumped into it, boom! You lost the battle.

Russell wrote Spacewar on a PDP-1, an early Digital Equipment Corporation (DEC) interactive minicomputer which used a cathode-ray tube display and keyboard. Significant improvements were made later in the spring of 1962 by Peter Samson, Dan Edwards and Martin Graetz.

Although the game was a big hit around the MIT campus, Russell and his team never profited from it. They never copyrighted it. Besides, they were hackers who had built it to show their friends, so they shared the code with anyone who asked for it.

5. The computer virus was initially designed without any harmful intentions

In 1983, Fred Cohen, best known as the inventor of computer virus defense techniques, designed a parasitic application that could ‘infect’ computers. He called it a computer virus.

This virus could seize a computer, make copies of itself and spread from one machine to another via a floppy disk. The virus itself was benign and only created to prove that it was possible.

Later he created a positive virus called a compression virus. This virus could be written to find uninfected executables, compress them with the user’s permission and attach itself to them.

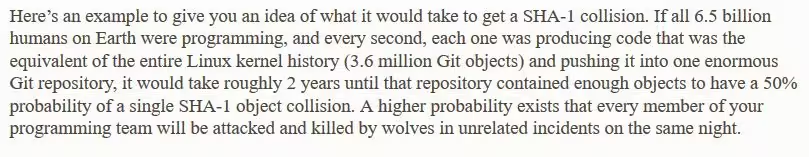

6. There is a higher chance of you getting killed by wolves than having an SHA-1 collision in Git

Git, a popular distributed revision control system, uses Secure Hash Algorithm 1 (SHA-1) to identify revisions and detect data corruption or tampering.

In data management, a commit makes a set of tentative changes permanent. One of the ways Git lets its users specify a commit is by a short SHA-1, an abbreviated prefix of the full hash.
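To make the idea of a SHA-1 identifier concrete, here is a small Python sketch of how Git derives the ID of a file's contents (a "blob" object) by hashing a short header followed by the raw bytes. Commits are hashed in the same spirit but with a different header and body, which this sketch does not cover. The function name `git_blob_sha1` is hypothetical, and the seven-character prefix is only an illustrative choice of abbreviation length.

```python
import hashlib


def git_blob_sha1(content: bytes) -> str:
    """Return the SHA-1 object ID Git assigns to a blob with this content.

    Hypothetical helper for illustration: Git hashes the header
    "blob <size in bytes>\\0" followed by the raw content.
    """
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


if __name__ == "__main__":
    full_id = git_blob_sha1(b"hello world\n")
    short_id = full_id[:7]  # an abbreviated form; the length is an assumption
    print(full_id)
    print(short_id)
```

If Git is installed, the full value can be compared against the output of `git hash-object` run on a file with the same contents.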

In its short note regarding SHA-1, Git observes that many people worry that, at some point, two objects in their repository will hash to the same SHA-1 value. This is what is called an SHA-1 collision.
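How unlikely is an accidental collision? A rough estimate comes from the standard birthday approximation, sketched below in Python. It assumes SHA-1 output behaves like a uniformly random 160-bit value and ignores deliberate attacks, and the function name `collision_probability` is hypothetical.

```python
import math


def collision_probability(num_objects: int, hash_bits: int = 160) -> float:
    """Approximate chance that at least two of num_objects share a hash.

    Birthday approximation: p ~ 1 - exp(-n * (n - 1) / (2 * 2**bits)).
    Assumes uniformly random hashes; illustrative only.
    """
    exponent = -num_objects * (num_objects - 1) / (2 * 2**hash_bits)
    # expm1 keeps precision when the probability is extremely small.
    return -math.expm1(exponent)


if __name__ == "__main__":
    for n in (10**6, 10**9, 2**80):
        print(f"{n} objects: p is about {collision_probability(n):.3e}")
```

Under these assumptions the probability stays vanishingly small for any realistic repository, and only becomes appreciable at around 2^80 objects.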

Below is Git’s note regarding an SHA-1 collision:

7. If computer programming were a country, it would be the third most diverse for languages spoken

Papua New Guinea has approximately 836 indigenous languages, making it the number one country when it comes to linguistic diversity. Second on that list is Indonesia, with more than 700, followed by Nigeria, with over 500.

All notable programming languages known to man, both current and historical, add up to 698. If it were a country, computer programming would be in bronze medal position. We don’t recommend you try to learn them all.

8. A picture from Playboy magazine is the most widely used test image for all sorts of image processing algorithms

The image of Lena Söderberg has been a standard test image in the field of image processing since 1973. It was cropped from the centerfold of the November 1972 issue of Playboy magazine.

![Lenna[1]](https://media.thenextweb.com/2016/02/Lenna1.avif)

David Munson, the editor-in-chief of the Institute of Electrical and Electronics Engineers (IEEE) Transactions on Image Processing, gave two reasons why the image is so popular:

a. It contains a nice mixture of detail, flat regions, shading and texture that do a good job of testing the capabilities of any image processing software.

b. Lena’s image is a picture of an attractive woman. It is not surprising that the (mostly male) image processing research community are inclined to use the image.

