Following a recent decision by its CEO, Mark Zuckerberg, Facebook opted to give priority to posts from friends instead of public pages in users’ News Feeds. The bombshell drove Facebook’s stock price down by five percent. This new turn for the social media company is even more significant than it seems, putting both media and community managers in a tricky situation.

Media and community managers have had to play with the Facebook “black box” for many years (the black box allegory is used for platforms whose governing principles are neither visible nor comprehensible to the user). They are now facing yet another unknown, with new challenges and new stakes.

This ideology-oriented News Feed shift could compromise the relationship between Facebook and its partners, while turning the social media platform into a perfect nest for growing fake news narratives.

The failure of Facebook’s algorithm

By reaching this turning point, Facebook is confessing to the failure of its algorithm. If the algorithm were indeed as strong and powerful as advertised, it should have easily ingested the masses of information surrounding us and digested them, spitting out relevant content.

But, according to various websites (e.g. Le Blog du Moderateur), crucial key performance indicators are under-performing on Facebook. Between 2016 and 2017, the average time spent on the platform dropped from 32 hours and 43 minutes per user per month to 18 hours and 24 minutes. The average number of sessions opened per month per user dropped from 311 to 173. Only the average session duration increased… by five seconds.
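As a rough sanity check, the figures quoted above can be turned into percentage drops. The sketch below uses only the numbers from the paragraph; the function and variable names are illustrative, and deriving an average session length by dividing monthly time by monthly sessions is an assumption about how that indicator was computed, not something the source confirms.

```python
def pct_drop(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return (before - after) / before * 100


# Average time per user per month, converted to minutes (figures from the text).
minutes_2016 = 32 * 60 + 43
minutes_2017 = 18 * 60 + 24

# Average sessions per user per month (figures from the text).
sessions_2016 = 311
sessions_2017 = 173

time_drop = pct_drop(minutes_2016, minutes_2017)
session_drop = pct_drop(sessions_2016, sessions_2017)

# Assumption: average session length = monthly time / monthly sessions.
avg_session_2016 = minutes_2016 * 60 / sessions_2016  # seconds
avg_session_2017 = minutes_2017 * 60 / sessions_2017  # seconds
implied_gain = avg_session_2017 - avg_session_2016

print(f"Time spent dropped by {time_drop:.1f}%")
print(f"Sessions dropped by {session_drop:.1f}%")
print(f"Implied session length changed by {implied_gain:.1f} seconds")
```

Under these assumptions, both time spent and session count fall by roughly 44 percent, while the implied session length rises by only a few seconds, which is broadly in line with the reported five-second increase.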

Fewer sessions and less time spent on the platform mean fewer opportunities for advertisers to reach users. Less exposure to ads leads to less revenue. Even if Facebook remains one of the big shots in the advertising business, the social media giant needs to take these figures seriously. It won’t be able to rely on its predicted growth from 1.88 to 2.07 billion users much longer. Time spent on the platform and related KPIs will become more and more central as user growth hits a ceiling.

Facebook’s underlying ideology: “Strong family and friendship links make content strong”

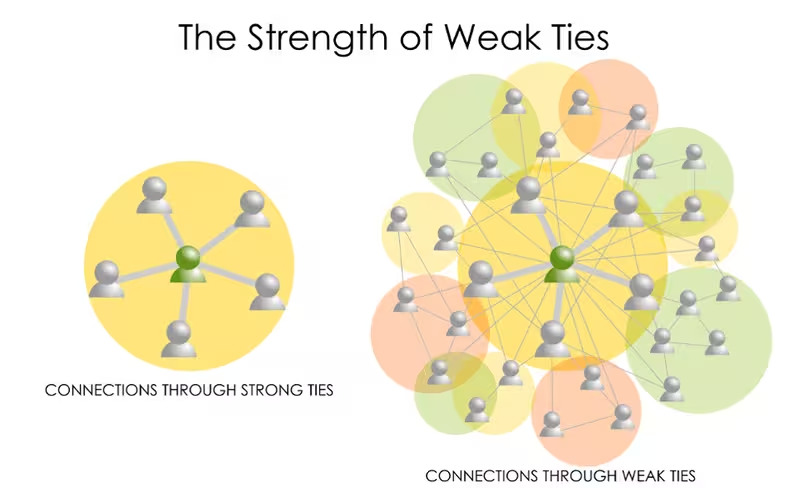

How is it that one of the major companies, owning terabytes of data, relies on ideology instead of solid statistical analysis? Social network analysis and sociology have long emphasized the importance of weak links, showing that a network’s strength relies on its weak ties, meaning the users on the edge of your filter bubble who can connect you to another bubble.

But Facebook decided to follow another path. According to the company, spending time on its platform is not about discovering new content, but about maintaining close contact with your family and relatives, far from the vision of an open web full of lucky discoveries and closer to a more religious model: family prevails over everything else.

Undermining Facebook’s own crusade against fake news

This shift of Facebook’s News Feed policy will also strengthen existing filter bubbles, limiting our capacity to pierce them. “Fake news,” even though it’s initially published on Facebook pages:

- Is spread by users. Sometimes even by people close to you. Boosting your relatives’ publications instead of Facebook pages won’t change things much.

- Has a low individual impact. Disinformation content originates from Facebook pages, managed indirectly by foreign states, but it doesn’t require a well-established page to be disseminated. All that is required is a proper network and suitable users. Professional fake news-spreaders can simply pay Facebook to target specific communities, without the need to worry about establishing a Facebook page to do so.

Facebook’s concept of suggesting related content is what we in France call “poudre de perlimpinpin” (pixie dust). Let’s assume the true purpose of fake news is to make you believe a 3-legged unicorn exists. As no serious media outlet has ever mentioned 3-legged unicorns, only similar fake news stories will be related to this fraudulent content and these will be suggested to you, strengthening the notion of the news being real.

This issue is already prominent in Google Search: as only conspiracy theorists talk about conspiracies, asking Google about them will only return conspiracy websites. To make things worse, with no easily reachable content debunking the news and users reinforcing it, credible sources have an even harder job proving it is indeed fake.

The worst part is that Facebook’s efforts to actually deal with clickbait content once and for all are in vain by design. Even if algorithms can block “the third one will disgust you” and “like and share to win an iPhone” stories from the newsfeed, it is still human nature to prefer “snack content” to a full Wall Street Journal story.

The “I liked it so I want more” model is ineffective as long as we prefer engaging with trivial content rather than with quality journalism from credible sources. So however high-quality Facebook wants its content to be, it will always have to balance that aim against the preferences of the average user, or risk losing them.

Chasing the dragon

Not only users, but also traditional media are victims, always lagging behind. Chasing new technologies, chasing Google and now chasing social media platforms, the media are highly dependent on an ever-changing environment beyond their control:

- For the majority, around 50 percent of their web traffic comes from social media, mostly from Facebook

- They use dedicated Facebook tools like Instant Articles to boost their publications

- They are granted funds by Facebook to conduct fake news fact-checking on the social platform. A true win-win situation for both parties, where media companies keep their reputation as official fact-checking entities while Facebook delegates responsibility by outsourcing a complex political and societal issue to trusted, external partners.

The mainstream media’s Stockholm syndrome

Some experts view this evolving relationship as accessing news through either the front or the back door. Before the dawn of social media, the only access to information was through the main door — the news outlets’ official website or a search engine. Nowadays the prevailing access channel is through the back door — from social media.

The main door equals visibility and credibility, as well as control over the featured content. Entering through it leads you first to the main hallway, with the main news right in front of you. But when accessing through the back door, the user can just as well land anywhere else, more likely reading the news most shared by their peers that day than the most important. In other words, they can enter through the kitchen, or worse, the crapper.

This gradual evolution allowed Facebook to make highly profitable deals with media companies and, in practice, to lead them on a leash. First they were forced to post on its platform, then to grow an audience, then to enrich their content with video and live broadcasts (as pointed out by Nicolas Bequet). As the final step, with this News Feed update, they will have to pay for every publication: not only to promote and target it, but also for relevant insights.

If their content is only shared privately between users, and not from their page, only Facebook will have access to that data, and not the page owner. Last but not least, we come back to the initial issue — from a societal perspective this mechanism will further reinforce filter bubbles and move us further away from the goal of an open society.

What next?

The underlying truth is that Facebook is playing with fire, for two reasons.

A hypothetical boycott of Facebook by the media would likely lead to a dramatic lowering of content quality. A social network fed mostly by MinuteBuzz and Topito is surely not Facebook’s ambition. But a media boycott is highly unlikely, considering what’s at stake, and even more so given the history of the media’s relationship with Google, for instance.

The major risk lies on the side of disinformation, or fake news. As we’re clearly seeing, the media and journalist lobby is particularly strong in Europe — the composition of the European Commission’s new High-Level Group on Fake News is a good indicator. This policy shift on Facebook’s part, contributing to promoting fake news, might therefore have other, unforeseen consequences. With strong influence from the media it’s easy to see policy developments directly pressuring Facebook.

The most extreme scenario, and the most disastrous for Facebook, would be Europe-wide, free speech-threatening legislation comparable to Germany’s “NetzDG” law, under which Facebook has 24 hours to remove hate speech or face up to €50m in fines.

This article was co-written by Martin Mycielski.
