A recent analysis of the future of warfare indicates that countries that continue to develop AI for military use risk losing control of the battlefield. Those that don’t risk eradication. Whether you’re for or against the AI arms race, it’s happening. Here’s what that means, according to a trio of experts.

Researchers from ASRC Federal, a private company that provides support for the intelligence and defense communities, and the University of Maryland recently published a paper on the preprint server arXiv discussing the potential ramifications of integrating AI systems into modern warfare.

The paper focuses on the near-future consequences of the AI arms race, under the assumption that AI will not somehow run amok or take over. In essence, it’s a short, sober, and terrifying look at how today’s machine learning technology will play out, based on an analysis of current cutting-edge military AI and its predicted integration at scale.

The paper begins with a warning about impending catastrophe, explaining that there will almost certainly be a “normal accident” involving AI: an expected incident of a nature and scope we cannot predict. Basically, the militaries of the world will break some civilian eggs making the AI arms race omelet:

Study of this field began with accidents such as Three Mile Island, but AI technologies embody similar risks. Finding and exploiting these weaknesses to induce defective behavior will become a permanent feature of military strategy.

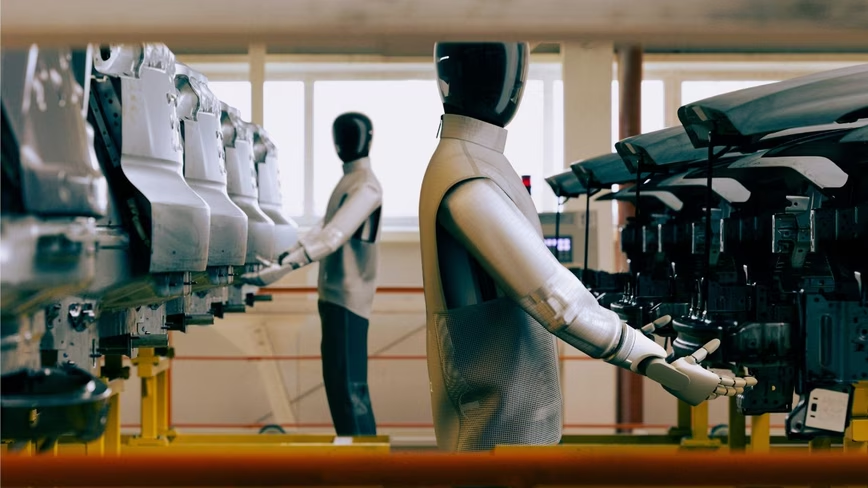

If you’re picturing killer robots duking it out in our cities while civilians run screaming for shelter, you’re not wrong, but robots serving as proxies for soldiers aren’t humanity’s biggest concern when it comes to AI warfare. This paper discusses what happens once it becomes obvious that humans are holding machines back in warfare.

According to the researchers, the problem isn’t one we can frame as good versus evil. Sure, it’s easy to say we shouldn’t allow robots to kill humans autonomously, but that’s not how the decision-making process of the future is going to work.

The researchers describe it as a slippery slope:

If AI systems are effective, pressure to increase the level of assistance to the warfighter would be inevitable. Continued success would mean gradually pushing the human out of the loop, first to a supervisory role and then finally to the role of a “killswitch operator” monitoring an always-on LAWS.

LAWS, or lethal autonomous weapons systems, will quickly outgrow humans’ ability to work alongside them, probably sooner than most people think. Hand-to-hand combat between machines, for example, will be entirely autonomous by necessity:

Over time, as AI becomes more capable of reflective and integrative thinking, the human component will have to be eliminated altogether as the speed and dimensionality become incomprehensible, even accounting for cognitive assistance.

And, eventually, the tactics and responsiveness required to trade blows with AI will be beyond the ken of humans altogether:

Given a battlespace so overwhelming that humans cannot manually engage with the system, the human role will be limited to post-hoc forensic analysis, once hostilities have ceased, or treaties have been signed.

If this sounds a bit grim, it’s because it is. As Import AI’s Jack Clark points out, “This is a quick paper that lays out the concerns of AI+War from a community we don’t frequently hear from: people that work as direct suppliers of government technology.”

It might be in everyone’s best interest to pay careful attention to how both academics and the government continue to frame the problem going forward.

While the researchers mainly appear to argue that AI will lead us to ruin, they make quite a compelling case for any warmongers seeking evidence to support the quest for superior firepower. At one point the paper points out that “determining how to thwart, for example, a terrorist organization turning a facial recognition model into a targeting system for exploding drones is certainly a prudent move.”

In this light, it’s a bittersweet conclusion for the researchers to ultimately claim the “better option” to continuing the arms race “may be to support regulation or prohibition.” What’s plan B?

H/t: Jack Clark, Import AI
