The Central Intelligence Agency is America’s best-known intelligence agency, but it’s still shrouded in secrecy. Thanks to WikiLeaks, we’ve learned a lot about its internal workings, particularly when it comes to cyber-espionage. One thing that I’ve come to appreciate is the humorous bent to how the CIA names its internal projects.

There are some absolute howlers. My favorite is, of course, Gaping hole of DOOM. Other honorable mentions include Munge Payload, McNugget, RoidRage, and Philosoraptor. If you’re curious, TechCrunch has published a near-exhaustive list of them.

Pretty imaginative stuff. This got me thinking: neural networks are great at naming things, from guinea pigs and colors to metal bands and Pokémon. Is it possible to train one to generate believable CIA malware codenames?

Well, I’ll let you be the judge of that.

Operation Credible Dubbing begins

Neural networks are fucking rad. They are essentially programs loosely modeled on how the human brain works (hence the ‘neural’ bit). This means they learn their behavior from example input, rather than having a programmer manually define it.

After a bit of research, I came across an interesting library called char-rnn, which was written by Andrej Karpathy, Director of AI at Tesla. It is written in Lua, and uses the Torch machine learning library. It essentially lets you provide the system with an input file, and it’ll create text that it thinks looks like the original training data.

I’m not doing this justice. If you’re curious, you can read more about it on the project’s GitHub page, or in Karpathy’s blog post.

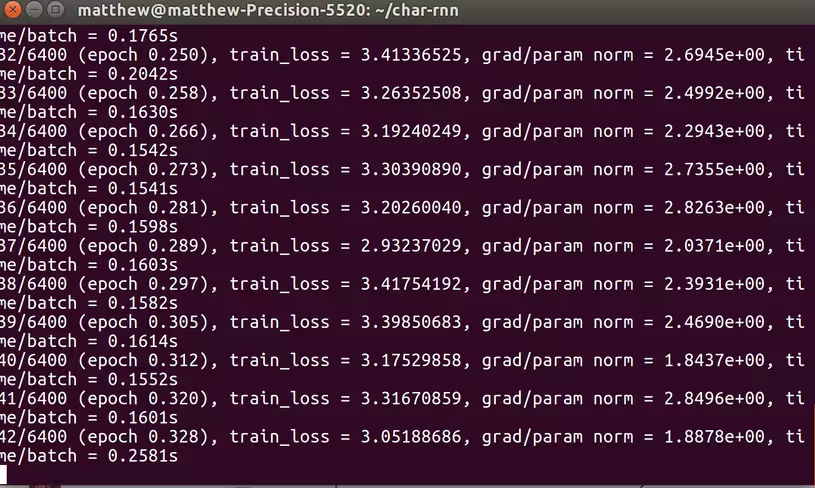

Anyway, I went about setting up my laptop — a pretty beefy Dell Precision 5520 running Ubuntu — with the tools required to use it. This was pretty straightforward, but I’ll discuss it in a bit more depth towards the end of this article.

First things first, I needed to build my collection of training data. As a rule of thumb, the more training data you have, the better the neural network will be at generating similar content.

So, I spent an hour traversing the Internet, looking for CIA and NSA malware codenames. This task was made immensely easier thanks to the aforementioned TechCrunch list. I found others on Wikipedia.
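If you’re gathering names from more than one source, you’ll probably end up with duplicates and stray whitespace. Here’s a minimal sketch of how the lists could be merged into a single block of training text, one name per line. The function name and the two sample lists are made up for illustration (which site each name came from is an assumption), and this isn’t necessarily how I built my own file.

```python
def build_training_text(*name_lists):
    """Merge several lists of codenames into one newline-separated string."""
    seen = set()
    merged = []
    for names in name_lists:
        for name in names:
            cleaned = " ".join(name.split())  # collapse stray whitespace
            key = cleaned.lower()             # compare case-insensitively
            if cleaned and key not in seen:
                seen.add(key)
                merged.append(cleaned)
    return "\n".join(merged) + "\n"


# Hypothetical sample lists; the real ones would be much longer.
techcrunch = ["McNugget", "RoidRage", "Philosoraptor"]
wikipedia = ["roidrage", "  Munge   Payload "]

training_text = build_training_text(techcrunch, wikipedia)
print(training_text)
```

The resulting text would then be saved as the input file that char-rnn trains on.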

My first few tests were a little bit janky.

- Briie Dunn

- Paceer

- Betle

- HeeryMaomees

- Wheninnnon

- MaecVtoirysirhra HerRAttelr (I actually think this is Welsh)

I was able to improve the quality of outputted text by lowering the batch size (which “specifies how many streams of data are processed in parallel at one time”) to five, and then eventually one.

I also increased the sequence length (which “specifies the length of each stream, which is also the limit at which the gradients can propagate backwards in time”) to thirty, and then fifty.
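One plausible reason these settings matter so much with a tiny dataset: the training text gets carved into chunks of batch size multiplied by sequence length, and anything that doesn’t fill a whole chunk goes unused. The sketch below is a simplified model of that arithmetic, not char-rnn’s actual loader code, and the 2,000-character corpus size is a made-up figure.

```python
def count_batches(num_chars, batch_size, seq_length):
    """Number of full training batches a text of num_chars characters yields."""
    return num_chars // (batch_size * seq_length)


corpus_chars = 2000  # hypothetical size of a small list of codenames

for batch_size, seq_length in [(50, 50), (5, 30), (1, 50)]:
    batches = count_batches(corpus_chars, batch_size, seq_length)
    print(f"batch_size={batch_size}, seq_length={seq_length}: {batches} batches")
```

With a corpus this small, large batches leave the network with little or nothing to train on, while a batch size of one squeezes out as many updates as the data allows.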

These steps improved the quality of the outputted text significantly, but it still wasn’t good enough.

- Mubble

- Harper

- Painn

- Commy

- Buble

- Murkett

- CenCore

- This Pane

At that point, Alejandro Tauber, TNW’s Editor in Chief, suggested that I ‘pad’ out the input text with ‘cool words.’

Cool words? Well, yes. There’s a site which lists the ‘coolest words’ in alphabetical order. I’m not sure how one determines the coolness of a word, but admittedly, some of these sound like convincing CIA malware codenames.

Aeon. Amethyst. Equinox. Glabella.

Yes, it’s cheating, but fuck it. The Internet is my playground.
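Padding the training data can be as simple as appending the extra words to the list of codenames. Here’s a rough sketch, with the caveat that the function name is hypothetical and the shuffling step is my own assumption (it just stops all the filler sitting in one lump at the end of the file).

```python
import random


def pad_training_names(codenames, filler_words, seed=0):
    """Add filler words to the codenames, skipping duplicates, then shuffle."""
    existing = {name.lower() for name in codenames}
    extras = [word for word in filler_words if word.lower() not in existing]
    combined = list(codenames) + extras
    random.Random(seed).shuffle(combined)  # fixed seed keeps runs repeatable
    return combined


codenames = ["McNugget", "RoidRage", "Philosoraptor"]
cool_words = ["Aeon", "Amethyst", "Equinox", "Glabella"]

padded = pad_training_names(codenames, cool_words)
print("\n".join(padded))
```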

This approach actually produced some pretty decent results, and some sound like genuine CIA black projects:

- Obside

- Nugaty

- Parasdon

- Meninges (This one’s actually a word, and means “the three membranes that line the skull and vertebral canal and enclose the brain and spinal cord.” TIL.)

- Bradicash

- Naminnious

- Gingornious
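A network trained on a small list will sometimes just parrot its training data back at you. If you want only the genuinely new names, a quick filter like the one below would do the job. This is an optional extra rather than something I ran, and the sample lists are illustrative: I’m assuming for the sake of the example that the network spat out a couple of its own inputs.

```python
def novel_names(generated, training_names):
    """Keep only generated names that don't appear in the training data."""
    known = {name.lower() for name in training_names}
    return [name for name in generated if name.lower() not in known]


# Hypothetical samples: two of the generated names copy the training data.
training_names = ["Aeon", "Amethyst", "Equinox", "McNugget"]
generated = ["Obside", "Equinox", "Nugaty", "amethyst"]

fresh = novel_names(generated, training_names)
print(fresh)
```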

Technical Notes

This is hardly the most dignified use of a neural network, or indeed, my time. But was it fun? Absolutely.

If you want to play around with char-rnn, great! The library is relatively straightforward, and comes with some really comprehensive documentation (which is a rarity).

There are a few things that I’d like to point out. Firstly, there are some dependencies you’ll need beforehand. These are libreadline-dev, cmake, curl, and git.
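Before you start, it’s worth checking which of these are already on your machine. The snippet below is a small sketch that looks for the three command-line tools on your PATH. It can’t check libreadline-dev, because that’s a library package rather than a command, so you’ll need to confirm that one through your package manager.

```python
import shutil

# Command-line tools named above. libreadline-dev is a library package
# rather than a command, so it cannot be checked this way.
REQUIRED_TOOLS = ["cmake", "curl", "git"]


def find_missing(tools):
    """Return the tools that are not available on the current PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


missing = find_missing(REQUIRED_TOOLS)
if missing:
    print("Missing tools:", ", ".join(missing))
else:
    print("All command-line dependencies found.")
```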

Once you’ve installed Torch (which is the main dependency of char-rnn) make sure you update it. Failing to do so breaks the install for one of the other char-rnn dependencies – although I forget which one. To do this, enter the Torch directory in your home folder, and run update.sh.

If you have an Nvidia graphics card, you can speed up your neural networks by running them on the GPU (and you should, particularly if you’re training on large datasets). To do that, you’ll need to download and install the CUDA Toolkit.

At nearly 2GB, this is a bloody big file. It took me the best part of an afternoon to download and set up.

Some dependencies required to use char-rnn with your NVIDIA CUDA GPU have to be compiled natively for your system. As such, don’t be surprised if it takes a while.

I installed it on a brand-new Dell Precision with an 8-core Xeon CPU and a ridiculous 32GB of RAM, and it still took me an excruciatingly long time. That’s how big it is. If you’re running it on a less powerful computer, don’t be surprised if it takes several hours.

But these are just details, and they shouldn’t dissuade you from playing around with neural networks. Cool projects like this have lowered the barrier to entry to the point where talentless dickheads like myself can play with them.

The question is, what will you do with them?
