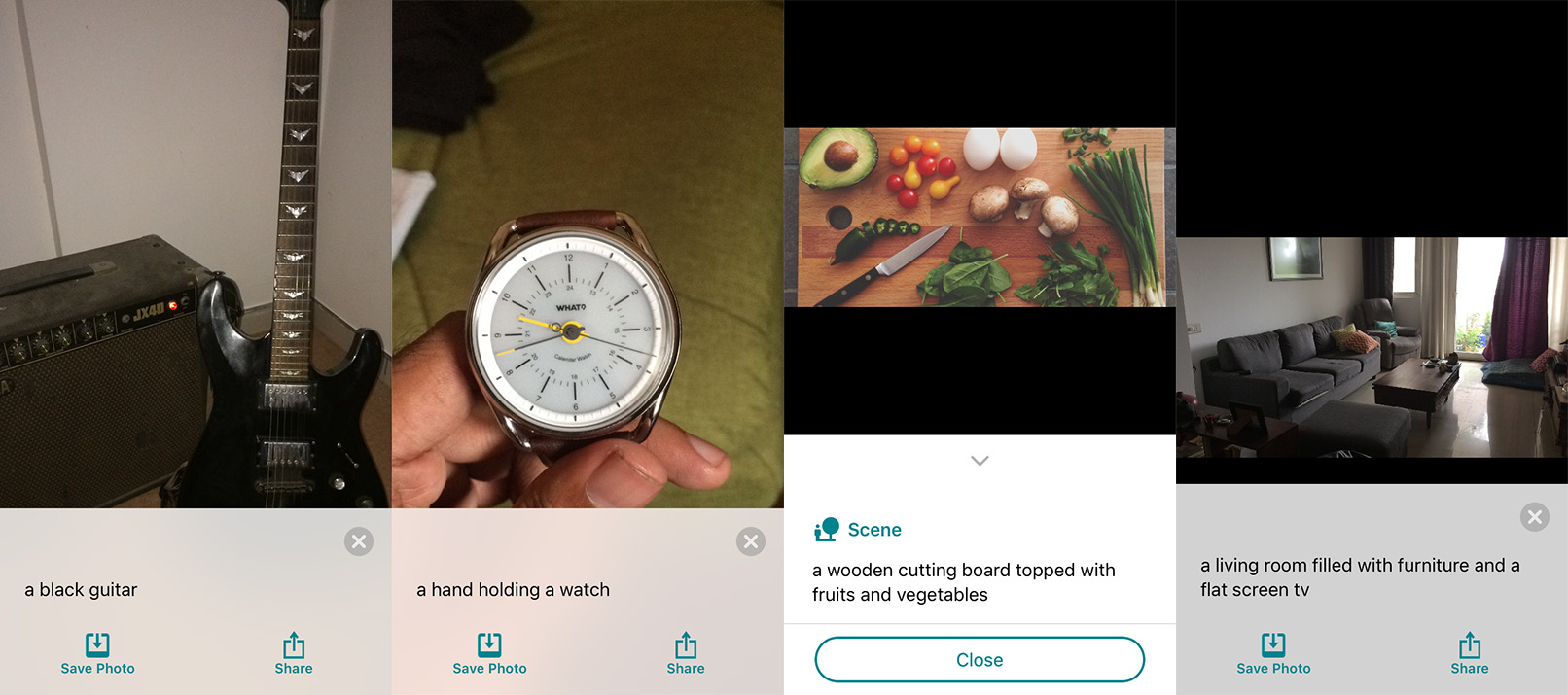

Microsoft has just launched Seeing AI, an iOS app that it appropriately describes as a ‘talking camera for the blind’; I’ve been giving it a whirl this morning and it’s actually pretty impressive.

Fire up the free app and point your iPhone at anything, whether it’s a document, a menu card, a room or even a friend, and Seeing AI will describe it aloud.

I tried it on a bunch of objects and spaces, and the app was astonishingly quick and accurate for the most part. It managed to recognize a guitar, identified me by my face and told me just how far away I was, and even described my living room and shower with some basic details.

It was also able to read out the blurb of a book and the ingredients on a ramen packet, and even identified the contents of a photo I sent to the app from the share sheet in Twitter. Seeing AI is also supposed to be able to identify currency notes and products by barcode, but these features weren’t available on my iPhone 5s in India.

Microsoft hopes that this will make life a little bit easier for those with visual impairments. It was born out of a project at Microsoft Research, and is one of several new initiatives driven by the company’s interest in exploring the possibilities that artificial intelligence can open up.

While I’m fortunate enough to have passable vision and can’t fathom what it must be like to live with a serious impairment, I imagine that Seeing AI, and tools like it in the future, could certainly come in handy for assisting with basic navigation around spaces and parsing documents that aren’t optimized for easy reading. What’s exciting is that technologies like AI and machine learning are enabling the creation of apps that provide functionality we can start to use in the real world right now.

You can give the app a go right away if you’re in the US, Canada, India, Hong Kong, New Zealand or Singapore with an iPhone 5s/5C or newer; it’ll become available in more countries soon.
