Adobe is prepping a brand-new iOS retouching app code-named Project Rigel (rhymes with Nigel), and last week the company made a splash with a sneak-peek online demo outlining some of its features.

Adobe’s Bryan O’Neil Hughes, product manager for digital imaging, has now shared exclusive information and screenshots of the new app with TNW, and clarified how it works and the thinking behind it.

“One of the things we’re most excited about is that we can open really large files because everything is done via the GPU,” he said. “The GPU is the wave of the future.”

Hughes emphasized that the app is still in the prototype stage and that Adobe has not yet decided on all the features. Nonetheless, certain characteristics have been folded into the app at an early stage, though Hughes says it may be different from what people expect.

This app will not mimic Photoshop, as Adobe is going in a different direction with its new crop of artistic mobile apps. “We’re being selective about the things that we choose to port from Photoshop to mobile, plus we’re also coming up with entirely new features and solutions,” he said.

In pulling out parts of Photoshop’s capabilities and packaging them into discrete mobile apps, the company seeks not only to streamline the workflow for Photoshop users but to attract a new audience that has not used Photoshop in the past. “We are excited to bring this to our existing users, but also to open it up to a whole new crowd of people who have heard of this technology and Photoshop, but don’t know how to use it. The intent is to make it as easy to use as possible.”

While Photoshop solves lots of different problems for lots of different people, one thing Adobe realized is that in the mobile sector, the things that people value are speed and success. “They want to do a certain thing and they want to do it quickly.”

SDK development

Choosing which features to include in new apps is made easier by the new Creative SDK that Adobe is now using to build its apps. One reason Adobe has been able to develop multiple mobile apps for the iPad is that they share components of the SDK, which lets developers pick up and combine pieces of various applications. “Throughout the apps, we can Lego in all sorts of other components around the workflow.”

Adobe Photoshop Mix and many of the new apps released on iOS — Shape and Comp, for example — use a common framework allowing them to open files, pass files to each other and send files to desktop apps.

Android versions

While the Project Rigel retouch app is on iOS first, Hughes says that Adobe, as a company, is platform agnostic and that Android users will not be left out in the cold. He says the apps that are ported to Android will be designed to be identical to the iOS apps, insofar as is possible, somewhat like Adobe’s traditional approach to Mac and Windows development.

He attributes that partly to the emphasis on GPU processing. “The thing to note is that the GPU code is a lot more portable and it’s much easier to implement on other devices. We have iOS and Android today, but who knows what the future holds? We don’t want to have to reinvent the wheel every time a new platform comes out.”

Adobe is not offering an Android timetable, however, and Hughes says it is unlikely that the pace of development between the two platforms will be the same.

Show and tell

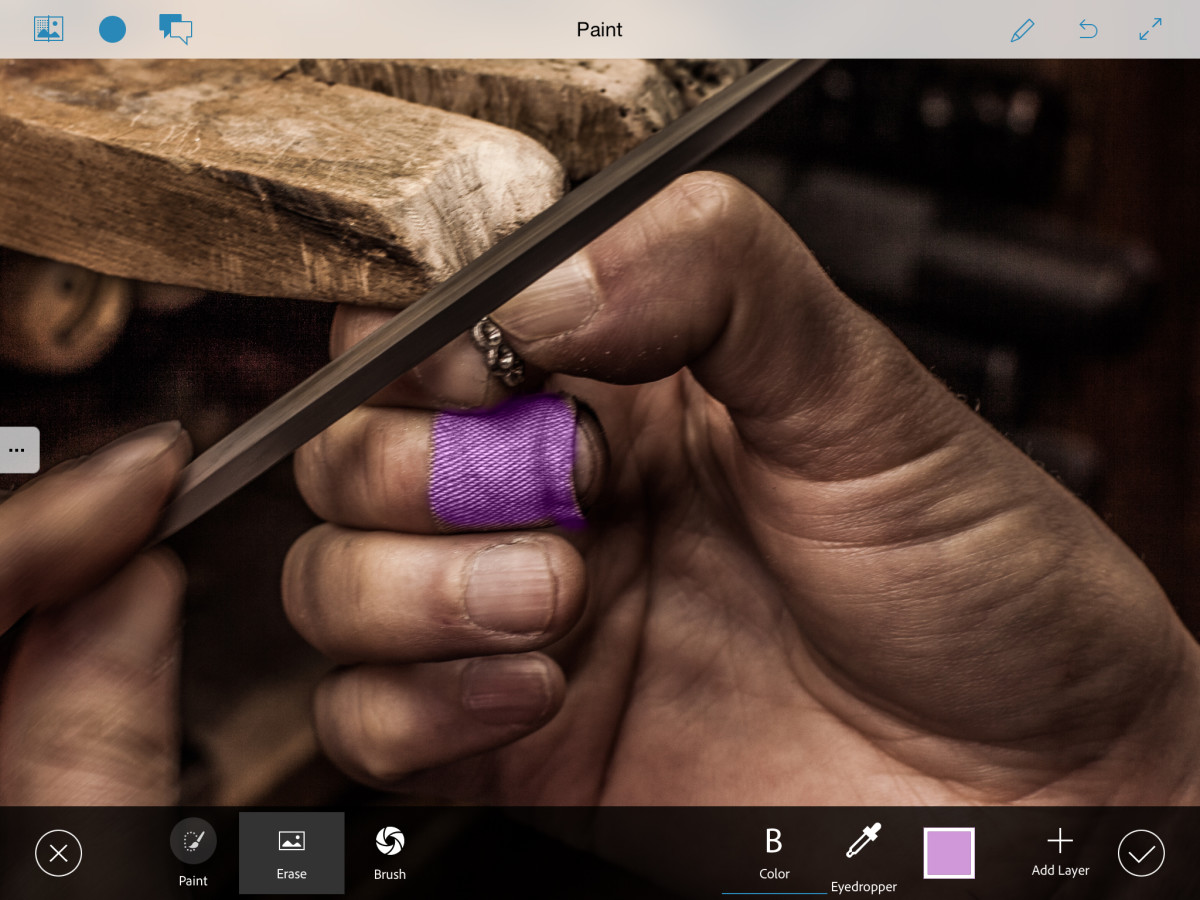

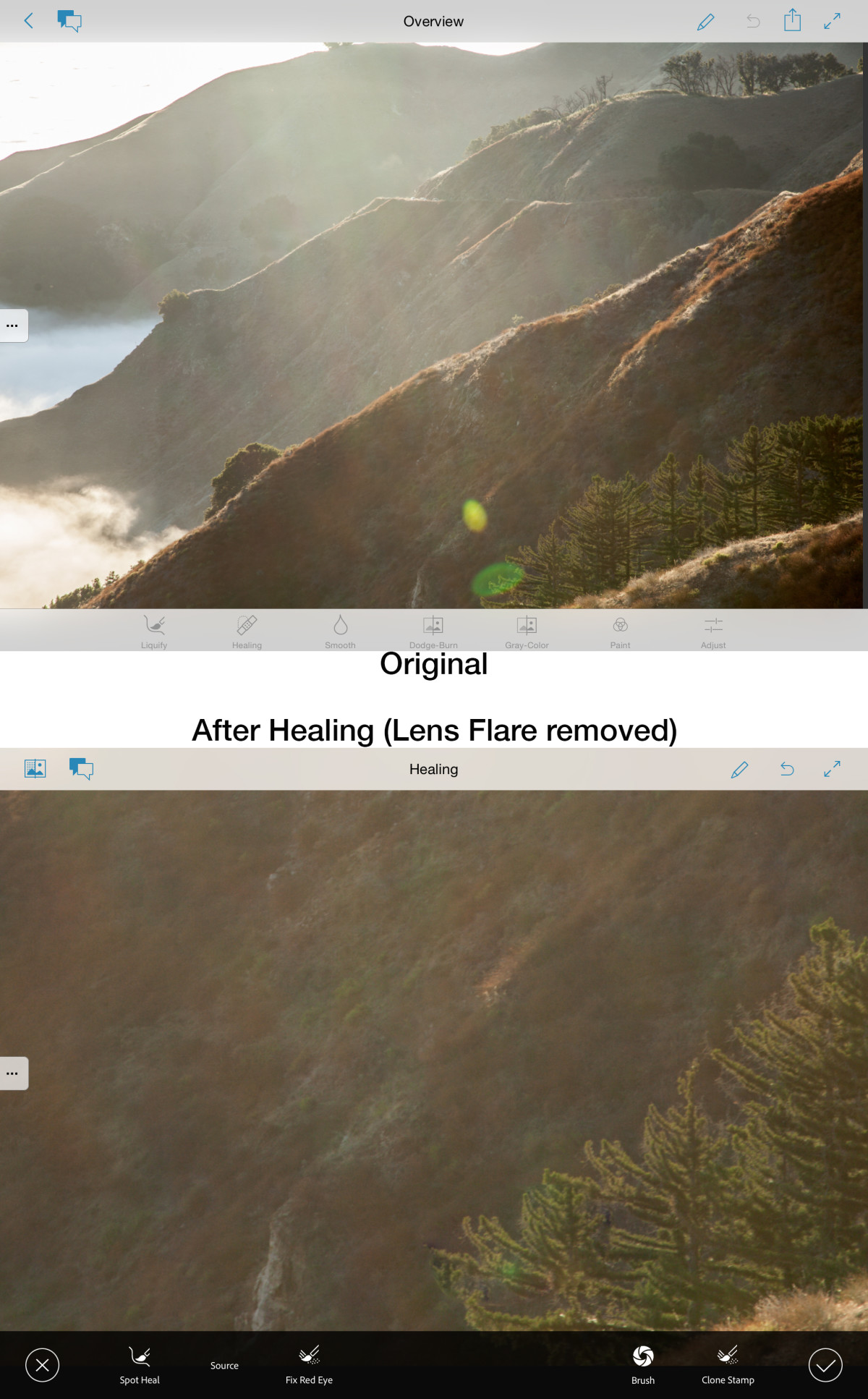

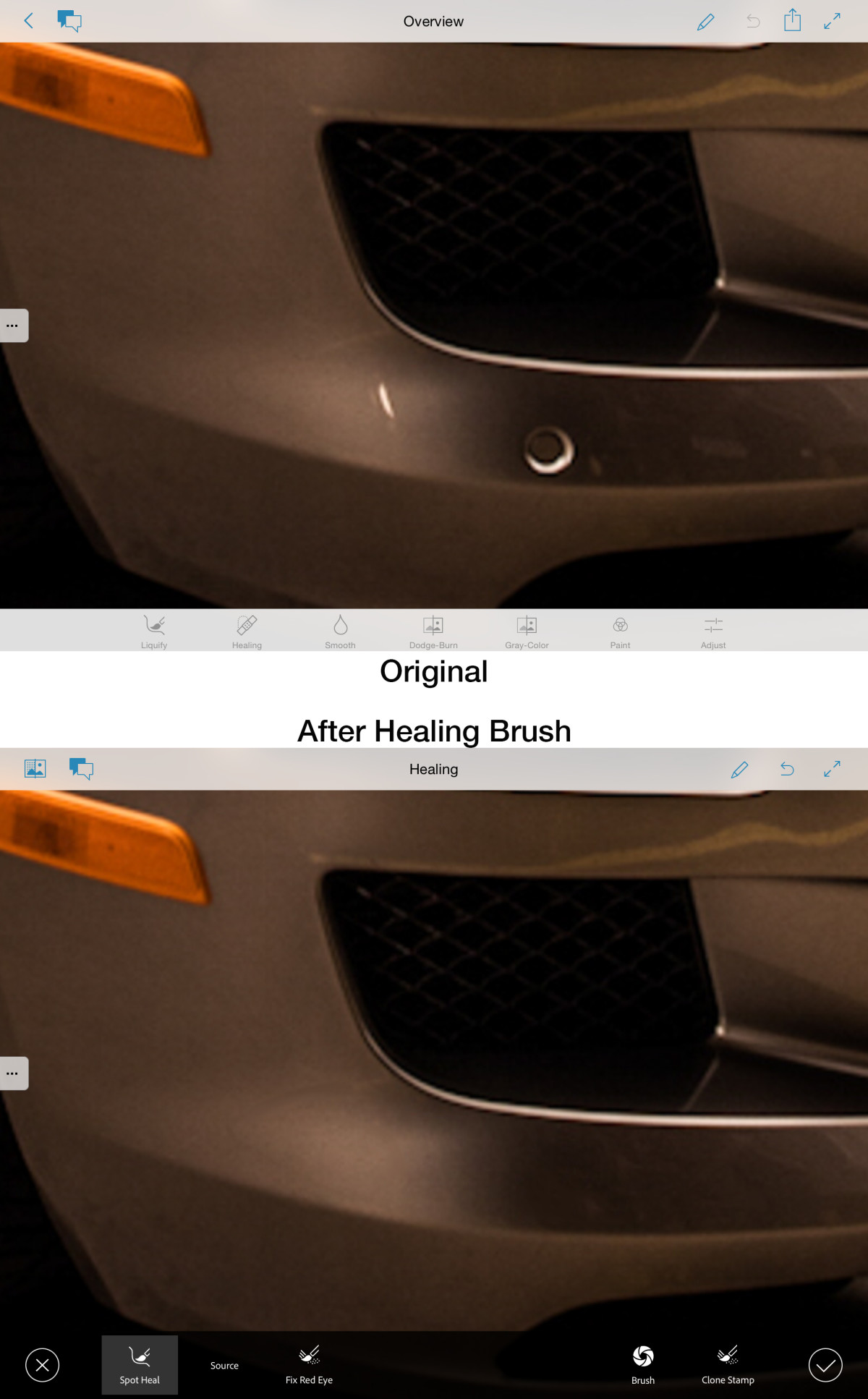

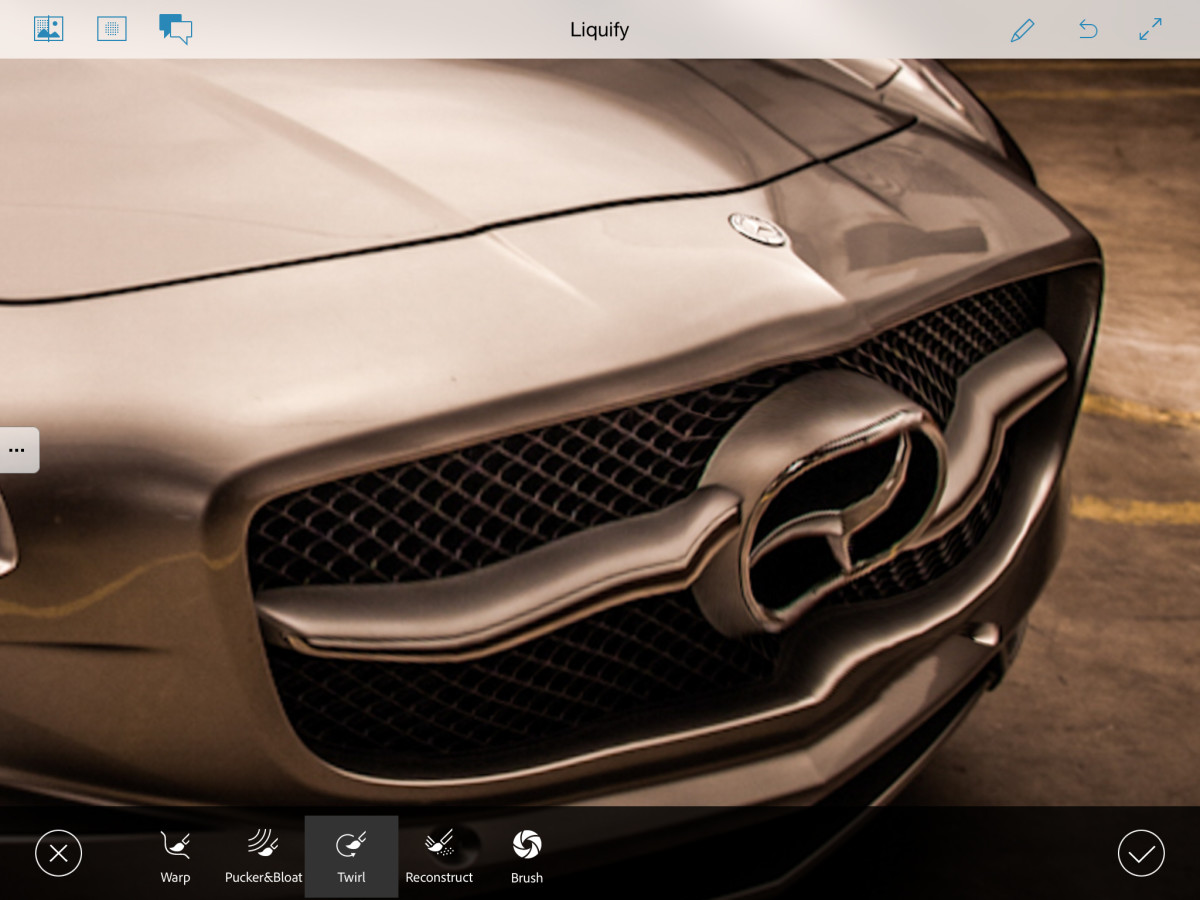

Meantime, here are some never-before-seen screens from the new retouch app prototype, as Hughes walks us through the highlights of Project Rigel.

Once we have an image loaded, the reason we’re able to work with it so quickly is that we’re only processing what you see on-screen. Since we’re working in screen resolution, you can zoom way in or way back, and we’re processing the content depending on what you’re seeing on-screen.
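To make the idea concrete, here is a minimal Python sketch of how an editor might work out which part of a large image is on-screen and how many pixels it has to touch. Adobe has not published how Rigel does this; the `Viewport` class, its fields and both function names are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Viewport:
    """Hypothetical description of what the screen currently shows."""
    screen_w: int      # screen width in pixels
    screen_h: int      # screen height in pixels
    zoom: float        # screen pixels per image pixel
    origin_x: float    # image-space x of the screen's top-left corner
    origin_y: float    # image-space y of the screen's top-left corner


def visible_region(vp, image_w, image_h):
    """Return (left, top, right, bottom) of the on-screen image area,
    in image pixels, clamped to the image bounds."""
    left = max(0.0, vp.origin_x)
    top = max(0.0, vp.origin_y)
    right = min(float(image_w), vp.origin_x + vp.screen_w / vp.zoom)
    bottom = min(float(image_h), vp.origin_y + vp.screen_h / vp.zoom)
    return left, top, right, bottom


def pixels_to_process(vp, image_w, image_h):
    """Count the screen pixels covered by the visible image area.
    This never exceeds screen_w * screen_h, whatever the image size."""
    left, top, right, bottom = visible_region(vp, image_w, image_h)
    w = max(0.0, right - left) * vp.zoom
    h = max(0.0, bottom - top) * vp.zoom
    return round(w) * round(h)
```

Under these assumptions, a 48-megapixel image (8000 x 6000) shown on a 2000 x 1500 screen costs about 3 million pixels of work per update whether it is zoomed out to fit or zoomed in to 200 percent, which matches the article's point that the workload follows the screen rather than the file.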

All of the resolution of the file is available and everything we do carries over to that file, but it’s a more elegant implementation of editing. It does get applied to the rest of the image, and you can see there’s a brief moment of processing on-screen, but it’s all done in real time.

If I were to save the image, [the app] would push out that full resolution file with all the changes. Different operations will show a slight latency, but with something like Liquify it does everything in real time.

As in Photoshop Mix, the key to making the behavior so quick and fluid is how far you are zoomed in to the image. The brush dynamics are tied to the zoom, and your brush gets more precise as you get closer to the image.
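One simple way to read "brush dynamics tied to the zoom" is that the brush keeps a constant size under the finger, so it covers fewer image pixels as you zoom in. The sketch below assumes exactly that; the function name and the linear relationship are illustrative assumptions, not Adobe's stated design.

```python
def brush_radius_in_image_pixels(screen_radius, zoom):
    """Convert a brush radius measured on-screen into image pixels.

    zoom is screen pixels per image pixel, so a higher zoom means the
    same fingertip-sized brush touches a smaller patch of the image.
    """
    if zoom <= 0:
        raise ValueError("zoom must be positive")
    return screen_radius / zoom
```

With a 40-pixel on-screen brush, zooming out to 25 percent gives a radius of 160 image pixels, while zooming in to 400 percent gives a radius of 10 image pixels.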

None of this is done via cloud processing; it’s all done locally. One of the things I heard about Rigel is that it’s so fast we must be using a proxy or editing in the cloud, but we truly are loading that 50 mp image and we truly are editing it. All the effects are done on the full-resolution file and whatever we output is baked into a full-resolution file.

On the desktop, you have very precise input, but on the iPad, you need the software to do a lot more of the work.

In the case of selections, the finger is much clunkier than the mouse and the software needs to do a lot more work. Putting things in a touch environment means speeding them up and inferring a lot about the gestures because there’s not nearly as much precision coming from our fingers.

It’s less like Photoshop in the way it processes because Photoshop has a 25-year-old framework that is powerful and does everything globally. In this case, we’re only working with what you see on-screen, which allows it to be really fast and fluid.

We’re using technology from Photoshop on the desktop, but it’s been optimized for touch, so think of this as a hybrid between Content-Aware Fill and the Patch tool. These are not the Liquify, Healing, Dodge or Burn tools as you know them on the desktop; they are the latest, greatest versions from our lab working on full-resolution files.

How do we do Liquify non-destructively? We have a Reconstruct brush — it’s not the traditional layers-based way of doing that. A brush slider lets me adjust the size of the brush, and that’s wired to the zoom.

With the Liquify tool, you may get a brief progress bar. Everything happens in real time, though every once in a while you get ahead of it. It’s faster because there are no source points; you’re just touching the image wherever you want to apply the effect. It’s like the Spot Healing brush, but I can also define a source point, so it’s a hybrid between the Spot Healing brush and the Patch tool.

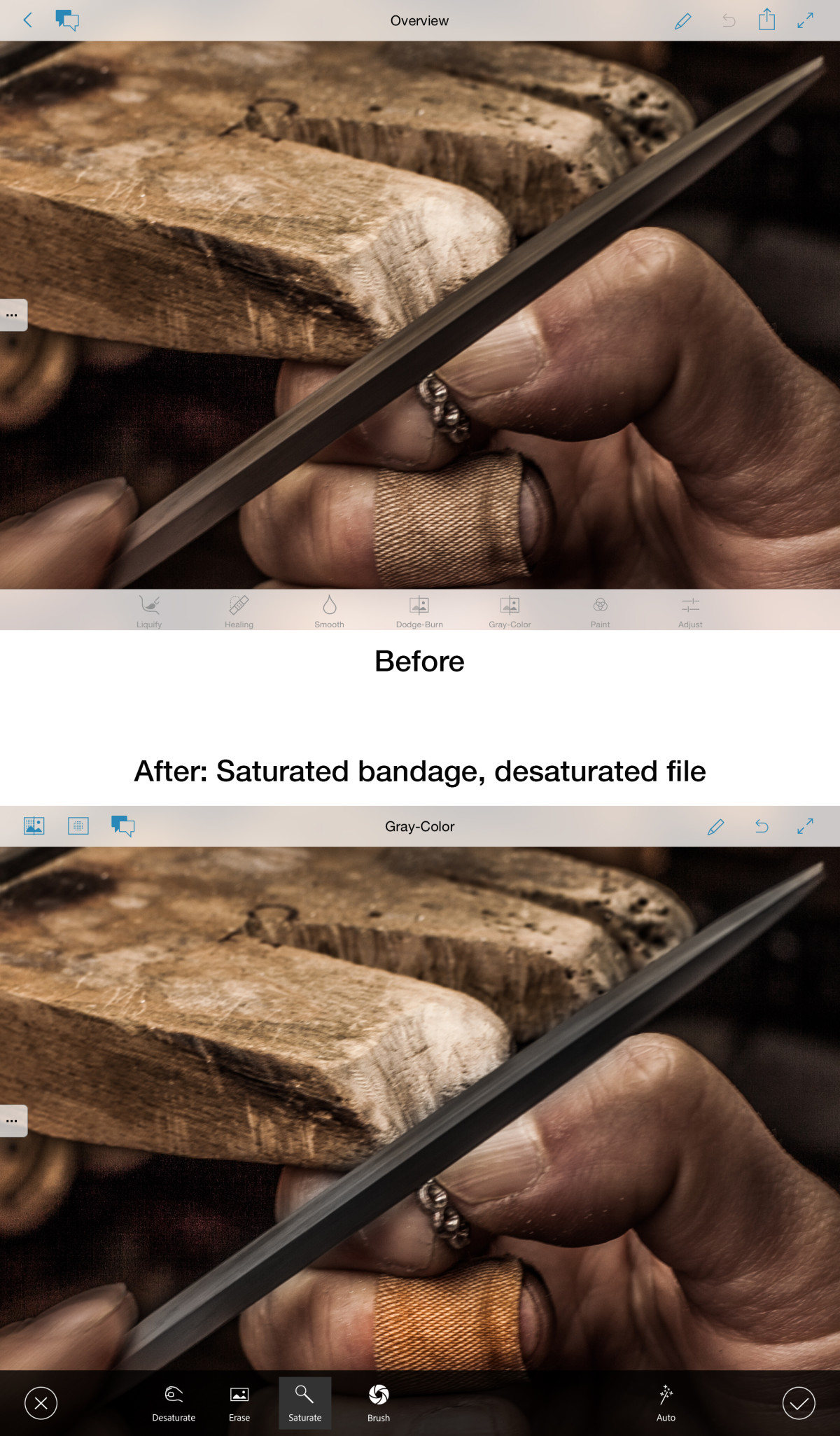

We can localize Photoshop saturation and desaturation. Over the last couple of years we’ve overhauled Dodge, Burn and Saturate and given them a new logic that helps to preserve detail. We can dodge the deepest, darkest part of the image without introducing any noise or artifacts. The more you scrub with your finger, the stronger the effect will look. It’s subtle and not always evident as you’re doing it, but in the before-and-after comparison, it really shows up.

The way saturate or desaturate works here is that it’s using an algorithm similar to vibrance in Lightroom or Camera Raw and the Sponge tool in Photoshop. It’s a gradual application of the effect to make it pop or take it down slowly to no color at all.
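Adobe's vibrance algorithm is not public, but the general behavior described here can be approximated with a common technique: boost saturation proportionally more for muted colors than for already vivid ones, and scale saturation toward zero when desaturating. The Python sketch below is that generic approximation in HSV space, not Adobe's implementation; `adjust_vibrance` and its `amount` parameter are hypothetical names.

```python
import colorsys


def adjust_vibrance(rgb, amount):
    """Apply a vibrance-like adjustment to one pixel.

    rgb    -- (r, g, b) floats in the range 0..1
    amount -- -1..1; positive boosts muted colors most,
              -1 removes all color (HSV saturation of zero)
    """
    r, g, b = rgb
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    if amount >= 0:
        # The relative gain is amount * (1 - s): large for muted
        # colors, vanishing for fully saturated ones.
        s = s + amount * (1.0 - s) * s
    else:
        # Scale saturation linearly toward zero.
        s = s * (1.0 + amount)
    s = min(1.0, max(0.0, s))
    return colorsys.hsv_to_rgb(h, s, v)
```

Calling the function repeatedly with a small `amount` gives the gradual build-up described above, where each pass of the finger strengthens the effect a little. Because this sketch works in HSV, full desaturation lands on the pixel's brightest channel value rather than on a perceptual gray; a production tool would likely use a luminance-preserving color model.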

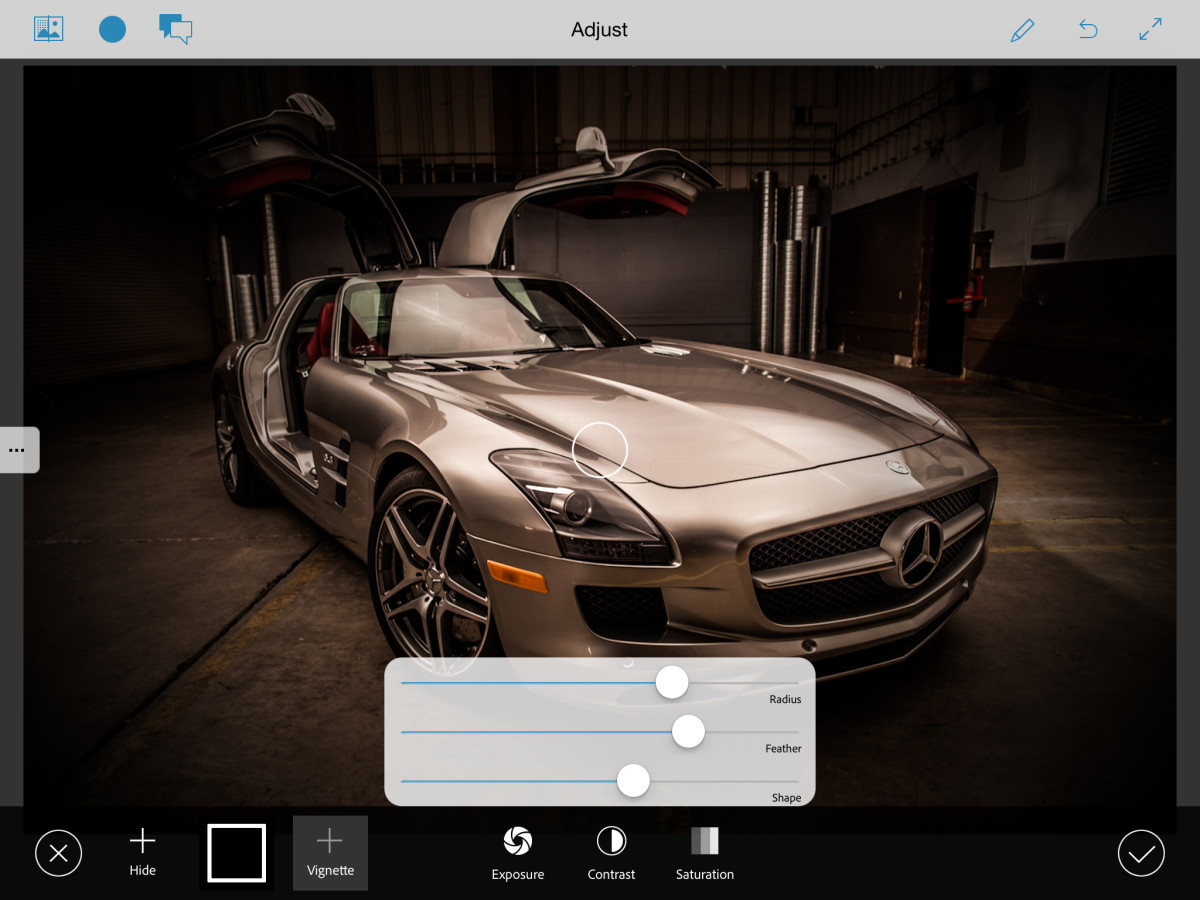

With Vignette, it’s not just a global vignette applied to the whole image — there’s a pin where I can place it off center.
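The difference between a global vignette and one with a movable pin comes down to where the darkening is measured from. As a rough sketch, assuming a simple quadratic falloff (the article does not say which falloff Rigel uses), the per-pixel brightness multiplier could be computed as follows; `vignette_factor`, `pin_x`, `pin_y` and `strength` are hypothetical names.

```python
import math


def vignette_factor(x, y, width, height, pin_x, pin_y, strength=0.5):
    """Return a brightness multiplier between 1 - strength and 1.

    The pixel under the pin is left untouched; the image corner
    farthest from the pin receives the full darkening.
    """
    # Distance from the pin to the farthest image corner, used to
    # normalize distances into the range 0..1.
    max_dist = max(
        math.hypot(cx - pin_x, cy - pin_y)
        for cx in (0, width - 1)
        for cy in (0, height - 1)
    )
    if max_dist == 0:
        return 1.0  # degenerate 1x1 image
    d = math.hypot(x - pin_x, y - pin_y) / max_dist
    return 1.0 - strength * d * d
```

Placing the pin at the image center reproduces a conventional vignette; moving it toward a subject shifts the bright area with it, so the corners nearest the pin darken less than those farther away.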

Project Rigel is due out later this year, and Adobe said it will be available for free on the App Store. Users will need only an Adobe ID to log in and save their work. No cloud subscription, or any other connection to Adobe, is required.
