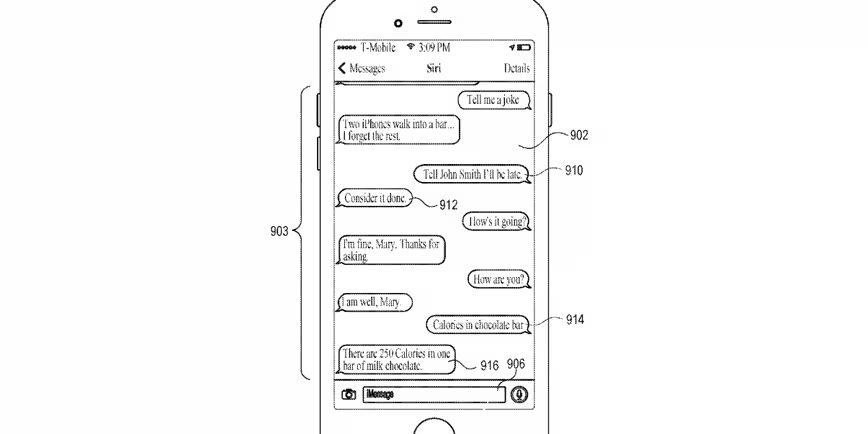

Apple is working on a way for you to control Siri in iMessage, rather than with your voice. The company has applied for a patent that lets you use the digital assistant without having to talk to it.

Here’s how Apple describes it in the patent:

User input can be received and in response to receiving the user input, the user input can be displayed as a first message in the GUI. A contextual state of the electronic device corresponding to the displayed user input can be stored. The process can cause an action to be performed in accordance with a user intent derived from the user input. A response based on the action can be displayed as a second message in the GUI.

In case you find that a little hard to understand (I know I did), this means the digital assistant (Siri), will perform an action in response to your input in a text conversation, then respond to the text.

The most obvious use for this is environments where talking to Siri aloud is impractical — a very loud environment where Siri can’t hear you, like a sporting event; or a very quiet one where you can’t speak, such as a library (though definitely not a movie theater, you heathen).

This might also be helpful for hearing-impaired users or those with speech impediments who might have trouble interacting with the audio-focused assistant.

Get the TNW newsletter

Get the most important tech news in your inbox each week.