In a span of two and a half hours, Microsoft packed a lot into the opening keynote of its Build 2016 conference. But it was the last video shown that seemed to have the biggest impact on many of the viewers at home: the introduction of an AI that helps one of its blind developers “see.”

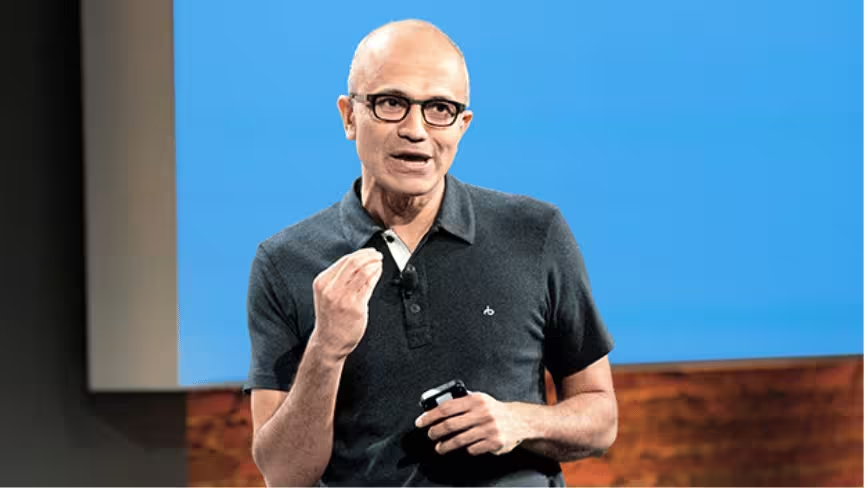

At the very end of the keynote, Microsoft CEO Satya Nadella reiterated that he wants technology to enhance the ways humans communicate. Though the many chat bots he introduced earlier seem technologically “smart,” the framework behind them still needs the help of developers to continue improving on what has already been built.

Seeing AI has already been put to work in the real world, helping a Microsoft developer in the UK navigate daily life using just a pair of special glasses and his phone.

In a video, Saqib Shaikh is seen using smart glasses and Microsoft’s AI to take photos and help him recognize what he’s “looking” at, such as his colleagues’ faces and their emotions (based on Microsoft’s facial recognition tool).

In another example, Shaikh is at a restaurant where a braille menu is not available. Instead, he uses his smartphone, guided by the AI’s voice directions, to snap a full photo of the menu so it can read him some of the items available to order.

The short video didn’t get nearly as much stage time at Build as it should have. It was the one prime example of how technology can truly change, and already has changed, the way humans live. Let’s face it: Skyping a bot to order pizza is rather trivial. Still, you can’t blame Microsoft for spending nearly 20 minutes on the subject. After all, it is a developer conference.

Coming off of President Obama’s keynote at SXSW, it would be nice to start seeing more developers use technology for the greater good rather than just creating more ways to spend money from our phones.

