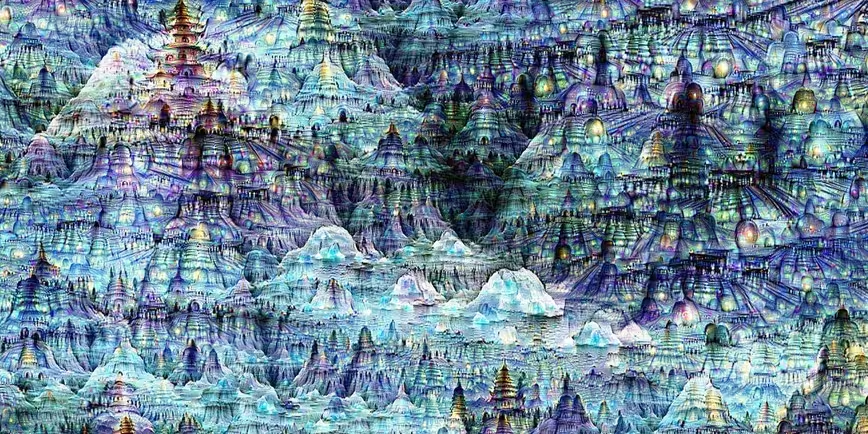

A few months ago, Google demonstrated a visualization tool that shows how its neural network-based AI identifies objects in images. Applying it to photos and videos produces some interesting effects, which we’ve previously seen in video clips and our own snapshots.

Now, you can see how such computing systems can be used to make art at a new exhibition in San Francisco.

The Gray Area Foundation for the Arts has partnered with Google Research to showcase 29 pieces created by artists and Google engineers using neural networks, including the company’s DeepDream algorithm as well as an algorithm that applies one artist’s style to another’s artwork.

The exhibition opens today at the Gray Area Art & Technology Theater in San Francisco. All the pieces will be up for auction, with proceeds going towards the nonprofit’s efforts to bring art and tech together.

➤ DeepDream: The art of neural networks [Gray Area Foundation for the Arts via Gizmodo]
