The idea is seemingly simple: There are 13 root servers that sit at the top of the Internet's domain name system (DNS). If you manage to take out all 13 of them, you effectively black out the Internet. That’s what OpGlobalBlackout, an initiative from Anonymous, would like to attempt. But just how realistic is the threat? I was curious, so it was time to ask the experts.

First off, it’s worth noting that #OpGlobalBlackout began as a plan to take down Sony’s PlayStation Network, Facebook, the UN and others in response to the shutdown of Megaupload. Then it evolved. What isn’t known is whether the evolution is an elaborate troll or a real plan. But let’s assume, for the sake of argument and investigation, that the threat is real.

Let’s go back a few days, to a message posted on PasteBin. It explained, in detail, the method Anonymous (or at least the member who wrote the message) wanted to use, and the effect it would have:

“The principle is simple; a flaw that uses forged UDP packets is to be used to trigger a rush of DNS queries all redirected and reflected to those 13 IPs. The flaw is as follow; since the UDP protocol allows it, we can change the source IP of the sender to our target, thus spoofing the source of the DNS query.”
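To see why forging the source address matters, here is a toy model in Python (not working network code) of the reflection the message describes. Because UDP has no handshake, a DNS server simply sends its answer to whatever address appears in the query's source field. The `Packet` class, the `resolver_reply` function and the addresses "client", "resolver" and "victim" are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src: str      # source address as written in the header (can be forged)
    dst: str      # destination address
    payload: str  # the DNS question or answer, as plain text here


def resolver_reply(query: Packet, resolver_addr: str) -> Packet:
    """Toy DNS server: UDP is connectionless, so it answers whatever
    address appears in the query's source field, without verifying it."""
    return Packet(src=resolver_addr, dst=query.src, payload="answer to " + query.payload)


# Honest query: the reply goes back to the real sender.
honest = Packet(src="client", dst="resolver", payload="example.com A")
# Forged query: the sender writes someone else's address as the source.
spoofed = Packet(src="victim", dst="resolver", payload="example.com A")

print(resolver_reply(honest, "resolver").dst)   # client
print(resolver_reply(spoofed, "resolver").dst)  # victim
```

In the scheme quoted above, the "victim" would be one of the 13 root server addresses, and the replies would come from third-party DNS servers rather than from the attackers themselves.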

But something about the plans just didn’t seem solid to me. It seemed, for lack of a better word, too simple. We all know that there can be drastic consequences brought on by simple measures in many instances, but we’re talking about a system that is attacked regularly, and massively. It really couldn’t be this easy, could it?

For that answer, I turned to some experts. I first sent an email over to Matthew Prince of CloudFlare. Even if he wasn’t the right guy, he’d know the right guy. And indeed he did. One of CloudFlare’s employees, David Conrad, formerly served as ICANN’s VP of IT and Research. In one notable moment of his career, he oversaw the DNSSEC signing of the DNS root. In other words, he’s a guy with answers.

A Series of Tubes?

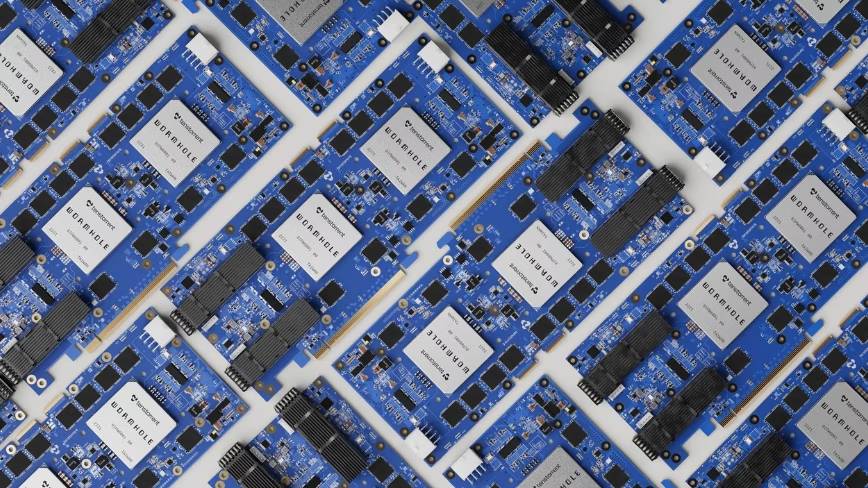

The first point that Conrad brings up to me is this map:

This is a representation of the 13 root server addresses and all of their various instances, spread around the globe. In other words, each of those 13 IP addresses is answered by potentially hundreds of different servers. That immediately raises the difficulty of what Anonymous is trying to do.

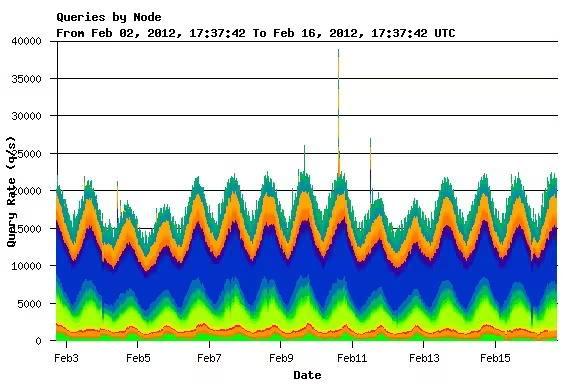

The next point that Conrad makes is that the servers are almost always under attack, but the system has been built and modified to be resistant to these problems. As an example, here’s a graph from a single root server operator, where you can see a spike up to nearly 40,000 queries per second, then another attack shortly after. But nobody was the wiser because of the redundancy of the system:

The particular root server in question here, according to Conrad, has roughly 100 machines distributed around the globe, each able to handle around 100,000 queries per second. That spike to 40,000 is little more than a drop in the bucket, and Conrad stresses that this isn’t even the largest root server operation.
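Conrad’s figures can be checked with some back-of-the-envelope arithmetic. This Python sketch uses his rough numbers (about 100 machines at about 100,000 queries per second each) and assumes, unrealistically, that load spreads evenly across every machine; in practice Anycast sends each query to a nearby instance, so an attack concentrated in one region lands on only part of the fleet. The function names are illustrative.

```python
def aggregate_capacity(machines: int, qps_per_machine: int) -> int:
    """Total queries per second the operator's fleet could absorb,
    assuming (simplistically) that load is spread evenly."""
    return machines * qps_per_machine


def attack_share(attack_qps: int, capacity_qps: int) -> float:
    """Fraction of total capacity consumed by an attack."""
    return attack_qps / capacity_qps


capacity = aggregate_capacity(100, 100_000)  # roughly 10 million qps
share = attack_share(40_000, capacity)       # the ~40,000 qps spike
print(f"{capacity:,} qps total; the spike uses {share:.1%}")
# 10,000,000 qps total; the spike uses 0.4%
```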

Conrad recalls an incident from the Internet’s earlier days, when a denial of service (DoS) attack knocked “3 or 4 of the root server IP addresses” offline for some users. Even then, “I don’t believe anyone other than the folks who monitor root servers noticed.”
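Part of the reason an outage like that goes unnoticed is that DNS resolvers know about all 13 root server names and move on to another one when a server fails to respond; they also cache answers, so they contact the roots relatively rarely. The Python sketch below is a deliberately simplified model of the fallback: real resolvers pick among root servers by measured response time rather than in a fixed order, and the `query_roots` function and the outage set are hypothetical.

```python
from typing import Callable, Iterable, Optional

# The 13 root server names, a.root-servers.net through m.root-servers.net.
ROOT_LETTERS = [f"{c}.root-servers.net" for c in "abcdefghijklm"]


def query_roots(roots: Iterable[str], is_reachable: Callable[[str], bool]) -> Optional[str]:
    """Return the first root server that answers, skipping unreachable ones.
    Returns None only if every root server is down."""
    for server in roots:
        if is_reachable(server):
            return server
    return None


# Hypothetical outage: four of the thirteen root server addresses are down.
down = {"a.root-servers.net", "b.root-servers.net", "c.root-servers.net", "d.root-servers.net"}
answered_by = query_roots(ROOT_LETTERS, lambda s: s not in down)
print(answered_by)  # e.root-servers.net
```

The lookup only fails when every one of the 13 is unreachable, which is exactly the bar OpGlobalBlackout would have to clear.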

But there are other factors at work here too, and they’re a bit more human. Even if the attack were overall unsuccessful in bringing down the whole of the Internet, something of the scale that Anonymous is planning would almost certainly impact a non-trivial portion of traffic. In doing that, you’re angering the people that you’re not necessarily trying to affect.

The Human Condition

It’s worth understanding that each of the server IPs is distributed so that it can accept traffic in many different places. As Conrad tells me, people need to understand the bottleneck that can occur.

For instance, let’s say that (theoretically) each of the servers could handle 40,000 incoming requests per second. If 100,000 requests suddenly arrived at one server, 60,000 of them would simply die in timeouts, so they would have no real effect. There is a bottleneck upstream of that server, and it cannot carry as much traffic as the server itself can handle.
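The arithmetic of that hypothetical is simple: whatever exceeds capacity is dropped rather than adding to the load. This Python sketch assumes a hard per-server limit and that overflow simply times out; `split_load` is an illustrative name, not part of any real system.

```python
def split_load(offered_qps: int, capacity_qps: int) -> tuple[int, int]:
    """Return (served, dropped) queries per second.
    Anything beyond capacity is assumed to time out and be discarded."""
    served = min(offered_qps, capacity_qps)
    return served, offered_qps - served


served, dropped = split_load(100_000, 40_000)
print(served, dropped)  # 40000 60000
```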

“We’ve always explored this, in theory. We’ve put things in place, such as Anycast [a system of addressing in which requests are sent to the topologically nearest node of a group of receivers].”
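The bracketed definition can be illustrated with a toy model. In real networks the choice of instance is made by BGP routing rather than by an explicit lookup; here a hop count stands in for topological distance, and the city names and distances are invented for illustration. The second call shows a useful side effect: if one instance is taken offline, the same address keeps working and traffic shifts to the next-nearest instance.

```python
# Hypothetical instances of one root server address and their distance
# (in routing hops) from one particular resolver.
INSTANCES = {"Amsterdam": 7, "Tokyo": 12, "Miami": 4, "Nairobi": 9}


def anycast_route(instances: dict[str, int]) -> str:
    """Pick the topologically nearest instance, as anycast routing would."""
    return min(instances, key=instances.get)


print(anycast_route(INSTANCES))  # Miami

# If the Miami instance is overwhelmed and withdrawn, the same address
# is now served by the next-nearest instance.
remaining = {city: hops for city, hops in INSTANCES.items() if city != "Miami"}
print(anycast_route(remaining))  # Amsterdam
```

Because each resolver only reaches its own nearest instance, attack traffic from one region tends to pile up on a handful of instances while the rest keep answering everyone else.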

But it’s not a single Anycast setup. It is, in fact, more along the lines of 50, and “we’d like to have 100.” Now multiply that by the 12 other root name servers and their own instances, and you’ll start to see why it would take an inordinately huge, organized attack to even make a dent in the system.

But therein lies the exact problem. There’s only so much that can be accounted for, and there’s no way to get to the “leader” of Anonymous to stop an attack. So how much firepower does the group have, and can it organize itself well enough to accomplish its goal?

The other massive hurdle here is that Anonymous (largely) uses the Internet to organize its actions. If an attack on this scale were successful, the group would lose its main organizing tool. It’s a bit like the old cartoons where the guy is up in the tree, cutting off a limb while sitting on the end that will fall.

As with any viable threat to the Internet at large, this one deserves due diligence. No system is completely immune to a massive denial of service attack, but our DNS has been built with that very realization in mind. Precautions have been taken and redundancy has been put in place, which is why Anonymous’ mission would be incredibly hard to accomplish.

What’s your take? Does Anon have the Internet muscle to take down the whole of the Internet? Even if it could, should it happen? Or is it all just a brilliant disguise for an otherwise-dead mission?
