When it comes to robots replacing humans, we might think we have the upper hand since we’re the ones who build and program them, but that’s not necessarily the case anymore.

Google is taking a different approach to training its robots – it’s letting them teach each other.

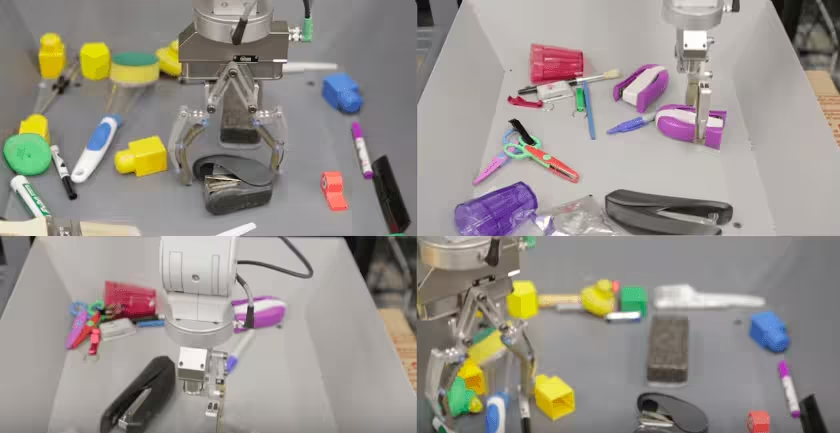

Researchers at Google have released a report showing how they linked 14 robotic arms and used convolutional neural networks to let them teach themselves how to pick things up.

The approach mimics how young children learn between the ages of one and four, and it essentially helps the robots develop reliable hand-eye coordination.

Typically, a robot is programmed to carry out specific tasks, but this method shows how robots can learn through trial and error in combination with a neural network – much the same way a child learns how to do something by watching other people.
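To make the trial-and-error idea concrete, here is a toy Python sketch. It is not Google's system: a simple table of success rates stands in for the convolutional neural network, and the simulated gripper, the candidate angles, the success probabilities and the function name `run_trials` are all assumptions made up for illustration. The learner is never told which grip angle works; it only sees whether each attempt succeeded.

```python
import random


def run_trials(n_attempts=5000, epsilon=0.1, seed=0):
    """Toy trial-and-error learner (hypothetical, not Google's method)."""
    rng = random.Random(seed)

    # Candidate grip angles in degrees (assumed for this sketch).
    angles = [0, 30, 60, 90, 120, 150]
    # Hidden "physics" of the simulation: only 90 degrees grasps reliably.
    true_success = {a: 0.9 if a == 90 else 0.2 for a in angles}

    attempts = {a: 0 for a in angles}
    successes = {a: 0 for a in angles}
    outcomes = []

    def estimate(angle):
        # Observed success rate so far; 0.0 for angles never tried.
        if attempts[angle] == 0:
            return 0.0
        return successes[angle] / attempts[angle]

    for _ in range(n_attempts):
        if rng.random() < epsilon:
            # Occasionally try a random angle to keep exploring.
            angle = rng.choice(angles)
        else:
            # Otherwise use the angle that has worked best so far.
            angle = max(angles, key=estimate)

        grasped = rng.random() < true_success[angle]
        attempts[angle] += 1
        successes[angle] += int(grasped)
        outcomes.append(grasped)

    best_angle = max(angles, key=estimate)
    return best_angle, outcomes


if __name__ == "__main__":
    best, outcomes = run_trials()
    late_rate = sum(outcomes[-1000:]) / 1000
    print("preferred angle:", best)
    print("success rate over the last 1,000 attempts:", late_rate)
```

With enough attempts, the learner settles on the angle that works without anyone having programmed that answer in. The real robots face a far harder version of the problem, since they have to judge grasps from camera images rather than choose from a short list.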

The idea is that robots in the future will be able to interact with objects they haven’t encountered before without having to be pre-programmed, narrowing the gap between their sensorimotor skills and those of humans.

The researchers had the robots pick objects out of boxes every day, and after 800,000 attempts, they observed reactive behavior from the arms. Not only had the arms become better at picking up the objects, they had also started to adjust their grasps automatically to suit each task, without any external input.

Over time, the robots each began to develop techniques for their tasks and even started to adjust objects on the ground before picking them up to make them easier to grasp. All of this happened without any additional programming from the researchers.

So if you’ve ever wondered about robots outsmarting humans and taking over the world in a technological-singularity-type situation, it’s certainly looking more likely now.
