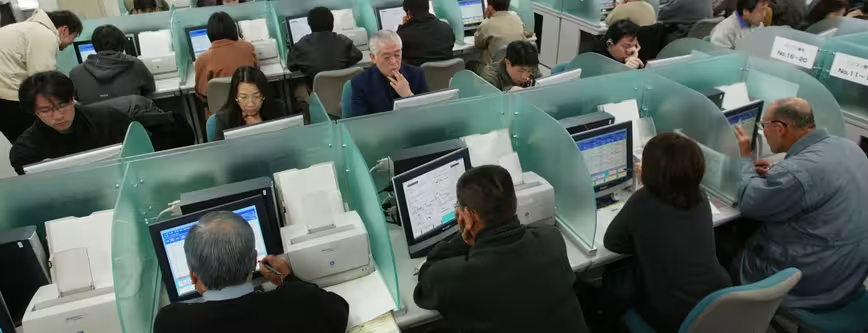

Google uses a team of people to judge the quality and relevancy of webpages that appear in its search engine, it has been revealed.

An article published today by The Register details a 160-page manual, produced by Google, which explains to ‘raters’ how they should judge an individual website based on its relevance, level of spam and quality.

Google would like you to believe that its search results, and the hierarchy of those results, are dictated almost entirely by super complex computer algorithms. While that may be true, it’s also not the first time it’s been shown that the search giant uses humans to manually sift through some of its search results.

The Register has also revealed that these raters, who are hired through contractors such as Leapforce and Lionbridge, work from home to check the webpages.

On the topic of relevance, Google’s manual advises the raters to give websites one of the following grades, based on their own opinion: “Vital”, “Useful”, “Relevant”, “Slightly Relevant”, “Off-topic or Useless”, and “Unratable”. A spam rating, meanwhile, can be one of “Not Spam”, “Maybe Spam”, “Spam”, “Porn” or “Malicious”.

By its very nature, asking home workers to rate websites based on the search terms used by someone searching on Google is a subjective method. For example, at one point the manual tells the raters to consider the user’s intent behind an individual search query. It reads: “What was the user trying to accomplish when he typed this query?”

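Google categorises search queries in three distinct ways: “do queries” carry an action intent, meaning that the user wants to accomplish a specific goal; “know queries” carry an information intent, meaning that the user wants to find out about something; and “go queries” carry a navigation intent, meaning that the user wants to reach a particular website.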

Over the years, websites have tried to trick Google’s algorithms into placing them higher in its search results. It’s basically why Search Engine Optimisation (SEO) still exists and continues to be taught to this day. However, it’s encouraging to know that Google is trying to combat this by hiring real people, who are far less easily fooled, to find and flag some of the worst offenders.

How many of these raters are working at any one time is unclear. It’s also hard to know exactly how many websites they are able to check, rate and approve in any given day, month or year. With the number of websites constantly growing, it’s unlikely that this approach will ever be used to check the majority of Google’s search results – although anything is better than nothing, right?

Of course, on the other side of the fence it’s important for Google to be checking the raters too. If someone knows very little about a specific topic, say cross stitch sewing for argument’s sake, they might not realise from the information alone whether a webpage is entirely relevant.

From the outside, this whole initiative looks to be part of a much broader quality assurance strategy that is being implemented by Google. By checking even a handful of search queries with real people, the company gets back results and data that give it a much better idea of how its automated algorithm is coping on a daily basis.

Image Credit: Koichi Kamoshida/Getty Images
