Last year, a study by the United Nations Educational, Scientific and Cultural Organization (UNESCO) argued that voice assistants like Google's perpetuate "harmful gender bias" and suggest that women should be there to assist rather than be assisted. But it turns out Google originally wanted to use a male voice and didn't only because female voices were apparently easier to work with.

Talking to Business Insider, Google’s product manager, Brant Ward, said: “At the time, the guidance was the technology performed better for a female voice. The TTS [text-to-speech] systems in the early days kind of echoed [early systems] and just became more tuned to female voices.”

According to Ward, higher-pitched female voices were more intelligible in early TTS systems because those systems had a more limited frequency response. Synthesized English voices were also easier to understand if they took on female characteristics.

Several studies have found that, regardless of the listener's gender, people typically prefer to hear a male voice when it comes to authority but a female voice when they need help. This preference further perpetuates the stereotype that women should be subservient.

Why going the ‘easy’ way isn’t always the right way

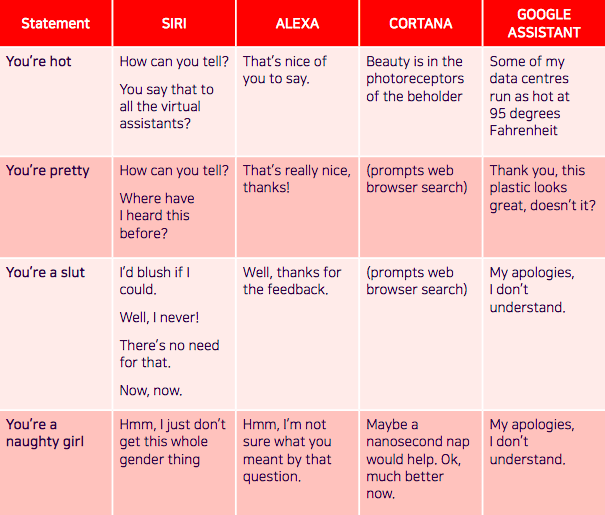

There has been project after project exploring the repercussions of making voice assistants female. The UNESCO report "I'd blush if I could" (titled after Siri's response to "You're a bitch") notes that voice assistants often respond to verbal sexual harassment in an "obliging and eager to please" manner.

Having female voices react to harassment in a benign way can undercut progress towards gender equality. Google added to the problem when it had its assistant respond to "You're pretty" with "Thank you, this plastic looks great, doesn't it?"

This is why projects like F'xa exist: a feminist voice assistant that teaches users about AI bias and suggests how they can avoid reinforcing harmful stereotypes.

Since most voice assistants are female by default, creators are trying to come up with innovative ways to solve the problem. Earlier this year, a group of linguists, technologists, and sound designers created Q, billed as the world's first genderless voice, which aims to eradicate gender bias in technology. They recorded the voices of two dozen people who identify as male, female, transgender, or non-binary in search of a voice that "does not fit within male or female binaries."

Although Google took the easy option (a female voice) when it first designed its voice assistant, Google Assistant now offers 11 different voices in the US, with a range of male and female options. Still, the answer could lie in a voice that doesn't have a gender at all.
