If you walk into the booth of any major display manufacturer at CES this year, you're going to be bombarded by every type of 3D system you could think of. From tiny screens to gigantic, wall-filling panels, 3D is everywhere, and the brands are trying to push it as the next big thing. But the problem is that it still sucks. A lot. It's distracting, it's ugly and, dammit, it just doesn't work very well.

Taking the problem one step further, brands don’t seem to understand the TV viewing experience at all. HDMI made hooking up multiple HD sources simple. It was a huge step in the right direction. But then the companies started looking for a leg up, and in doing so have made the experience more difficult than it was when we were still flipping 13 channels via a knob on the front.

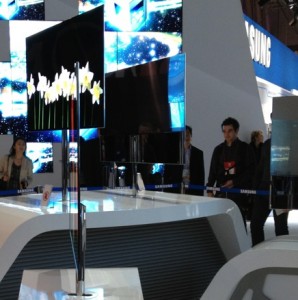

Let's talk for just a moment about what the companies are doing right, because it's important to note that innovation isn't gone; the technologies just aren't ready for prime time. Samsung, for instance, displayed a Super OLED TV that is simply the most gorgeous piece of electronic equipment I've ever seen. It's truly stunning. LG has rolled out tech that allows two people to see two different images on the same display, but it doesn't work seamlessly just yet. Unfortunately, these are the only two things I saw that were steps in the right direction.

Outside of Samsung's city-block-sized display area, there's a gigantic wall of 3D monitors. You walk up, snag some glasses and are treated to an interesting demonstration of the technology. But I think TNW's Matthew Panzarino put it best when he said that 3D is fine for a display like that, but it ruins the viewing experience in the living room.

It's frustrating to see screens like Samsung's, then walk a few feet and find them ignored in favor of gimmicky, half-working crap. Sitting down to watch a show shouldn't have to be an ordeal. It's the simplicity of video entertainment that has made it successful.

Anything that makes it more difficult to just enjoy a moving picture on a screen is ultimately headed in the wrong direction.

But at every turn, that's what we see. TVs with built-in operating systems make huge promises but fail to deliver, 3D forces you to "enjoy" lower picture quality and/or goofy glasses, and the list goes on. TNW's Martin Bryant went into detail about why "smart TVs" aren't ready for the mainstream just yet, so I'll direct you to his opinion piece instead of restating it here. The Cliff's Notes version, however, is that consumers are being duped into buying things that shouldn't even be on the market yet.

It's a problem that we see in technology far too often. In order to get ahead, manufacturers grasp at straws. 3D and smart TVs were supposed to be the next big thing, and they very well might turn out to be just that. But for now, both are bitter pills being shoved down your throat. The market is ready and willing to buy these devices if they're done right, but the ecosystem and the technologies are still months or years away from being worthy of your hard-earned cash.

I’m not giving up hope just yet, but I’m sure as heck not giving up my money either. My living room has a 42-inch Philips LCD, an Apple TV and an Xbox 360 with a Kinect. The Kinect system is the closest thing to a working smart TV that I’ve experienced, and I’m placing my bets that Microsoft will actually win this race unless Apple drops its “hobby” for a real solution sometime very soon.

As we wind down our week full of CES coverage, I’m still hopeful that I’ll find a TV technology that simply wows me with reality instead of potential. But if history holds true, we’re a couple of years away from that happening. Until that time comes, do yourself a favor – buy a gorgeous TV, hook up some equipment to it and stop wearing the stupid-looking glasses.
