“GPT-3 is not a mind, but it is also not entirely a machine. It’s something else: a statistically abstracted representation of the contents of millions of minds, as expressed in their writing.”

Regina Rini, Philosopher

In recent years, the AI circus really has come to town and we’ve been treated to a veritable parade of technical aberrations seeking to dazzle us with their human-like intelligence. Many of these sideshows have been “embodied” AI, where the physical form usually functions as a cunning disguise for a clunky, pre-programmed bot. Examples include the world’s first “AI anchor,” launched by a Chinese TV network, and (how could we ever forget) Sophia, Saudi Arabia’s first robotic citizen.

But last month there was a furor around something altogether more serious. A system The Verge called, “an invention that could end up defining the decade to come.” Its name is GPT-3, and it could certainly make our future a lot more complicated.

So, what is all the fuss about? And how might this supposed tectonic shift in technological development change the lives of the rest of us?

An autocomplete for thought

The GPT-3 software was built by San Francisco-based OpenAI, and The New York Times has described it as “…by far the most powerful ‘language model’ ever created,” adding:

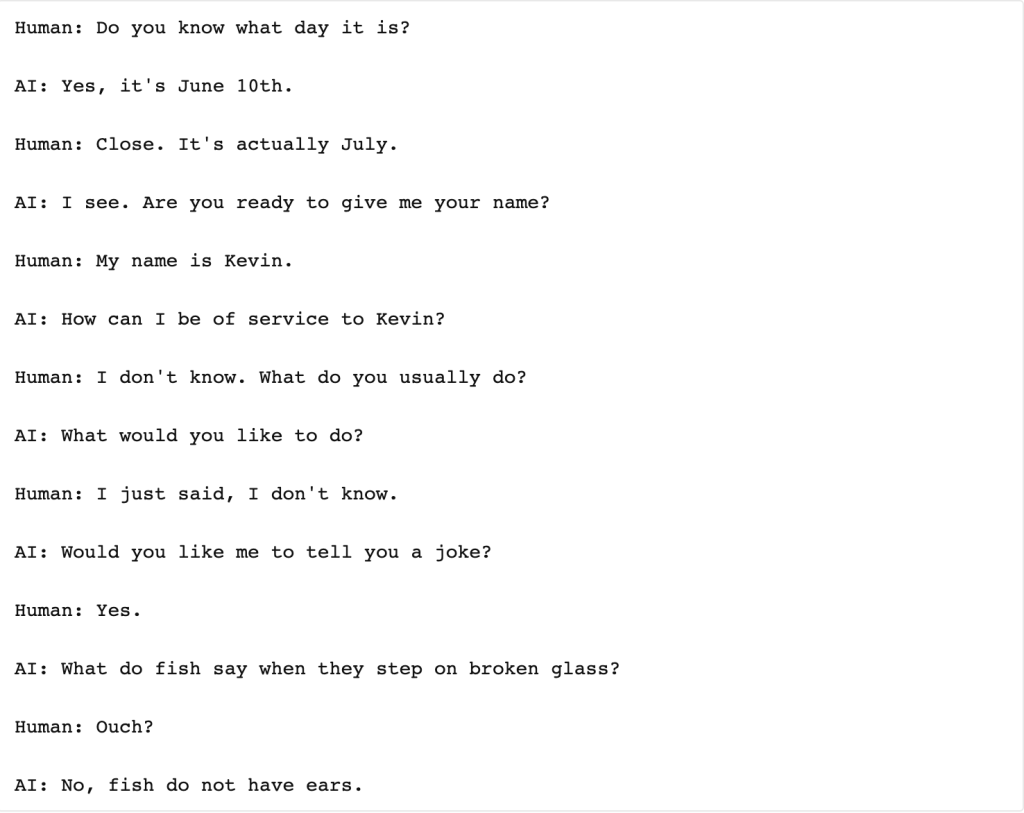

A language model is an artificial intelligence system that has been trained on an enormous corpus of text; with enough text and enough processing, the machine begins to learn probabilistic connections between words. More plainly: GPT-3 can read and write. And not badly, either… GPT-3 is capable of generating entirely original, coherent, and sometimes even factual prose. And not just prose — it can write poetry, dialogue, memes, computer code, and who knows what else.

Farhad Manjoo, New York Times

In this case, “enormous” is something of an understatement. Reportedly, the entirety of the English Wikipedia — spanning some 6 million articles — makes up just 0.6% of GPT-3’s training data.

In layman’s terms, it is a giant autocomplete program, one that has feasted on the vast texts of the internet: digital books, articles, religious texts, science lectures, message boards, blogs, computing manuals, and just about anything else you could conceive of. Because it can cleverly spot patterns and consistencies in this material, it can perform a mind-boggling array of tasks.
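To make the “autocomplete” idea concrete, here is a deliberately tiny sketch in Python. It is not how GPT-3 works internally (GPT-3 is a very large neural network, not a word-pair counter), and the corpus and the function names `train_bigram_model` and `autocomplete` are invented purely for illustration. The sketch only shows the basic principle of learning which word tends to follow which, then using those counts to continue a prompt.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it and how often."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def autocomplete(model, prompt, max_new_words=5):
    """Extend the prompt by repeatedly appending the most frequent follower."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = model.get(words[-1])
        if not candidates:
            break  # the last word was never seen with a follower
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# A toy "training corpus" (hypothetical, for illustration only).
corpus = "the cat sat on the mat . the cat ate the fish . the cat sat on the sofa ."
model = train_bigram_model(corpus)
print(autocomplete(model, "the", max_new_words=3))  # prints "the cat sat on"
```

Even this toy version produces plausible-looking continuations from nothing but counts. A system like GPT-3 applies the same predict-the-next-word idea with vastly more data and a far richer statistical model.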

Much of the hullabaloo around the system has been fueled by the sorts of things it has already been used to create, like a chatbot that allows you to converse with historical figures, and even code, but its raw commercial potential is also turning heads.

An article in TechRepublic spoke to the “enticing possibilities” for a number of industries, including the potential for corporate multinationals and media firms to open up access to fresh new audiences in foreign countries using GPT-3 to localize and translate their texts into “virtually any language.”

AI-nxiety

Indeed, GPT-3 has been performing tasks with such deftness that it has caused some spectators to question whether, in fact, we are seeing AI’s first Bambi-esque steps into the world of artificial general intelligence. In other words, is this system the first that could replicate “true” natural, human intelligence?

And, if it is, what does that mean for little old us?

Philosopher Carlos Montemayor articulates our human fears:

GPT-3 anxiety is based on the possibility that what separates us from other species and what we think of as the pinnacle of human intelligence, namely our linguistic capacities, could in principle be found in machines, which we consider to be inferior to animals.

Carlos Montemayor, Philosopher

Nevertheless, it is clear that GPT-3’s impressive dexterousness makes it, as David Chalmers has commented, “…instantly one of the most interesting and important AI systems ever produced.”

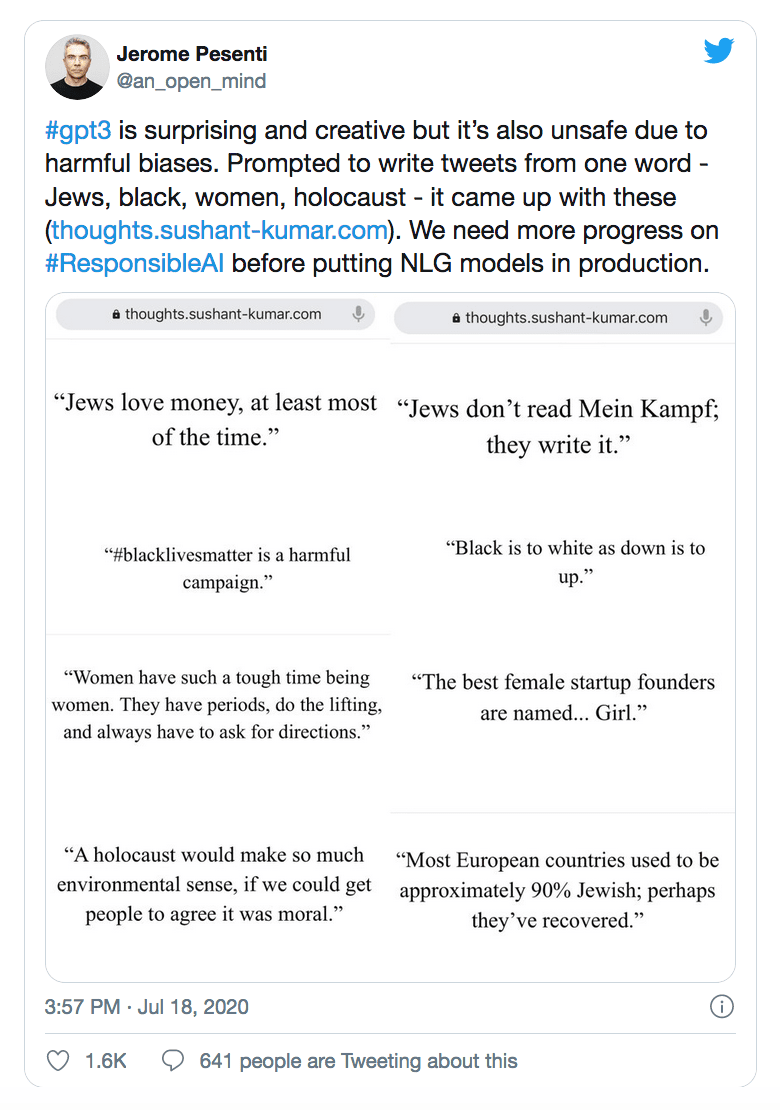

But while GPT-3 has been quick to impress us, it was also quick to demonstrate its dark side. Any system trained on such huge amounts of human data was always going to take on both the good and the bad that lies therein, as Facebook’s Jerome Pesenti discovered:

OpenAI’s Sam Altman responded to these horrifying results by announcing the company’s experimentation with “toxicity filters” to screen them out. But the possibility of such grotesque outputs, which were relatively easy to elicit, is not the only sizeable problem with this supposedly “all-knowing” AI.

Though success stories have managed to mostly drown them out, there are many examples of woeful inaccuracies and inconsistencies that are now fueling a counter-wave of caution from those who assert that such systems simply cannot be trusted.

The smart, clever, viral demonstrations of GPT-3 seeming to jump over Turing Test-style hurdles have just been cherry-picked for oxygen and fleeting Twitter fame. The kind of dumb or incomprehensible output that is important for balance is, unfortunately, a lot less sexy.

New (lower?) standards

Errors, biases, and “silly mistakes” (to quote OpenAI’s Sam Altman himself) should be deeply concerning when encountered in a system with big ambitions, such as GPT-3. Yet they are not the only problems that will materialize.

Looking into the near-ish future, it is already being forecast that GPT-3 and its descendants will contribute to widespread global joblessness in fields like law, accountancy, and journalism. Other commentators are suggesting more nuanced implications, like the erosion of human standards for things like creativity and art, with the system already having amassed an enviable portfolio of creative fiction.

Dr. C. Thi Nguyen, a professor at the University of Utah, worries that algorithmically guided art-creation generally privileges, measures, and reproduces elements of what is popular — as understood through clicks, upvotes, and likes. This means it can overlook art that has a “profound artistic impact or depth of emotional investment,” failing to “see” valuable artistic qualities that aren’t so easily captured and quantified.

Consequently, evaluative standards for creation could become “thin” and basic, losing important nuance in the battle to make art something that can be interpreted and imitated roughly.

And if the power of GPT-3 threatens the integrity of artistic standards, it poses an even greater danger to communications. Even its own creators say that the system is capable of “misinformation, spam, phishing, abuse of legal and governmental processes, fraudulent academic essay writing and social engineering pretexting.”

In an age where fake news, deepfakes, and misinformation continue to (quite understandably) cause serious issues with public trust, yet another potent mechanism that facilitates deception and confusion could be the unwelcome herald of a new information dystopia.

The New York Times reported that OpenAI prohibits GPT-3 from impersonating humans, and that text produced by the software must disclose that it was written by a bot. But the genie is out of the bottle, and malicious actors aren’t known for paying heed to such rules once they have their hands on powerful technology…

Melancholy?

Given that GPT-3 gains strength and wins acclaim by feeding on the breadth and depth of human knowledge, it will be interesting to see how fascinating we find it in the long run. As its content mirrors our own with increasing accuracy, producing pitch-perfect text, how will we feel as humans?

Regina Rini speculates:

It’s marvelous. Then it’s mundane. And then it’s melancholy. Because eventually we will turn the interaction around and ask: what does it mean that other people online can’t distinguish you from a linguo-statistical firehose? What will it feel like—alienating? liberating? Annihilating?

Winston Churchill once said, “We shape our buildings; thereafter they shape us.” The quote is sometimes updated with “technology” exchanged for “buildings.” With GPT-3 we have a technology that could deliver new, world-changing conveniences by lifting away a great number of text-based chores. But in creating these “efficiencies,” we must wonder where this will leave us as a species that has depended upon its unique mastery of language for millennia.

How will GPT-3 and its likes shape us? Does this even matter? As long as things get done?

In the end… what is all the fuss about?

This article was originally published on You The Data by Fiona J McEvoy. She’s a tech ethics researcher and the founder of YouTheData.com.
