That’s a wrap on all things Google. In case you missed the keynote, here’s a recap of all the highlights.

Android M

Kicking off the keynote, Google Senior Vice President Sundar Pichai announced that HBO Now is officially on the Play Store before bringing out VP of Engineering (Android) Dave Burke to talk about Android M.

Permissions

The first new feature is “App permissions,” which simplifies how users control what data apps can access. In this new model, apps on Android M will no longer ask for a lengthy list of permissions upon installation, but will instead prompt the user for permission when the app needs to use a feature (e.g. the camera or microphone).

Linking

Chrome Custom Tabs allows developers to add custom features that overlay on top of apps. For example, the Pinterest app can add a custom transition animation when linking to the Web, directly within the app. There’s also a new app linking feature that will allow apps to verify links so users can switch from app to app quickly.

Battery

A new “dozing” feature is designed to help save battery life when the device’s motion sensors show it has been sitting still. Alarms and notifications will still come through to the phone in this state, however. Meanwhile, Google also said USB Type-C is coming to Android devices “soon.”

Google says apps will now also learn your sharing behavior to see who you share content with the most based on which app you’re using.

The Android M developer preview is available today for the Nexus 5, 6, 9 and Player.

➤ Google unveils Android M

➤ Game of Thrones to-go? HBO Now headed to Google Play

Android Pay

Google said in its keynote that it will expand Android Pay by partnering with mobile providers to pre-install the feature on new devices. Partners include T-Mobile, Verizon and AT&T. To authenticate payments, you can use your fingerprint to verify your identity.

➤ Google announces Android Pay

➤ Google adds native fingerprint functionality for Android M launch

Android Wear

New Android Wear updates will include an “Always On” feature that keeps apps on the screen in a low-power black-and-white mode, so information is always glanceable. Users no longer need to tap the screen to wake up the app.

New wrist gestures, such as flicking up and down to scroll, have been added to help when your hands are full. You can also now draw emojis on an Android Wear watch… if you’re feeling adventurous with your artistic skills.

At this time, Google says there are approximately 4,000 apps designed for Android Wear.

➤ Here’s the next big update to Android Wear

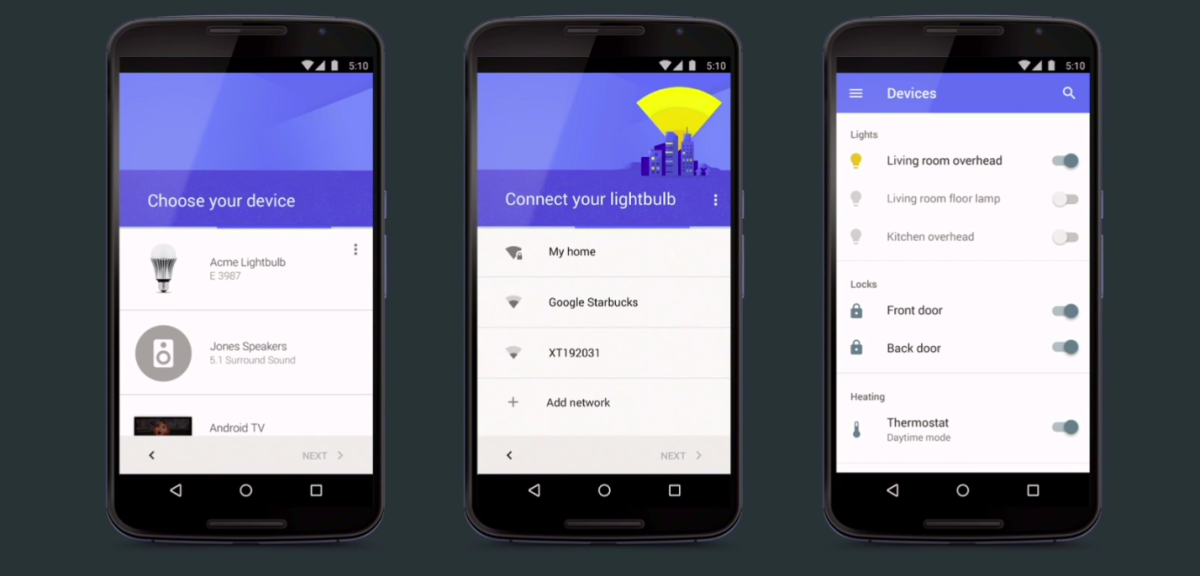

Internet of Things

Google is making full use of its Nest acquisition to step into the Internet of Things market by announcing Project Brillo, its own operating system for IoT devices. Brillo is derived from Android and is meant to provide a low-power wireless solution that can easily scale to all types of Android devices.

It’s also using its own language named Weave to communicate between the Brillo OS, a device and the cloud. Weave is available cross-platform.

Brillo will launch in developer preview in Q3; Weave will come out in Q4.

➤ Brillo is Google’s operating system for the Internet of Things

Google Now’s “Now on Tap”

To make your smartphone more “smart,” Google says it is working to make Google Now even more contextual by learning your behaviors, habits and lifestyle. To assist you in your daily life, Google Now wants to provide proactive answers – such as estimating how long ride lines are when you arrive at Disneyland or reminding you where you parked your car.

To do so, Google teased a “Now on Tap” feature that will gain context based on what you’re looking at on your screen. For example, if Google Now knows you are traveling to San Francisco, it can provide your boarding pass when you arrive at the airport, give you an option to order an Uber when you’ve landed or give you an option to order groceries when you’re en route.

In another example, you can listen to Skrillex on Google Music and pause to ask Google “What is his real name?” and it will know you mean the artist you’re currently listening to.

The final demo includes a text on Viber that mentions dinner at a restaurant, and Google Now opening OpenTable with the page of said restaurant open and ready for reservation. You can also ask to see what a menu item looks like in case you’re curious, and Google Now will know what dish you’re referring to based on what’s on your OpenTable screen.

➤ Google ‘Now on Tap’ makes it easy to get contextual answers from anywhere on Android M

Google Photos

We all take way too many photos, says Google, so its solution is Google Photos. The app will help back up your photos (unlimited and free!) and allow you to browse photos across a timeline. It can also sort your photos based on People and Places without requiring you to add tags.

Finally, you can use Google Photos to make videos and collages based on suggestions the app gives according to location and date. It can also auto-create a movie based on recent content (of course, you can edit the content before you share).

A neat little gesture feature: You can also tap and drag to highlight photos you want to share instead of selecting each image one by one.

Google Photos is available today on Android, iOS and Web.

➤ Google launches Google Photos, a new service independent of Google+

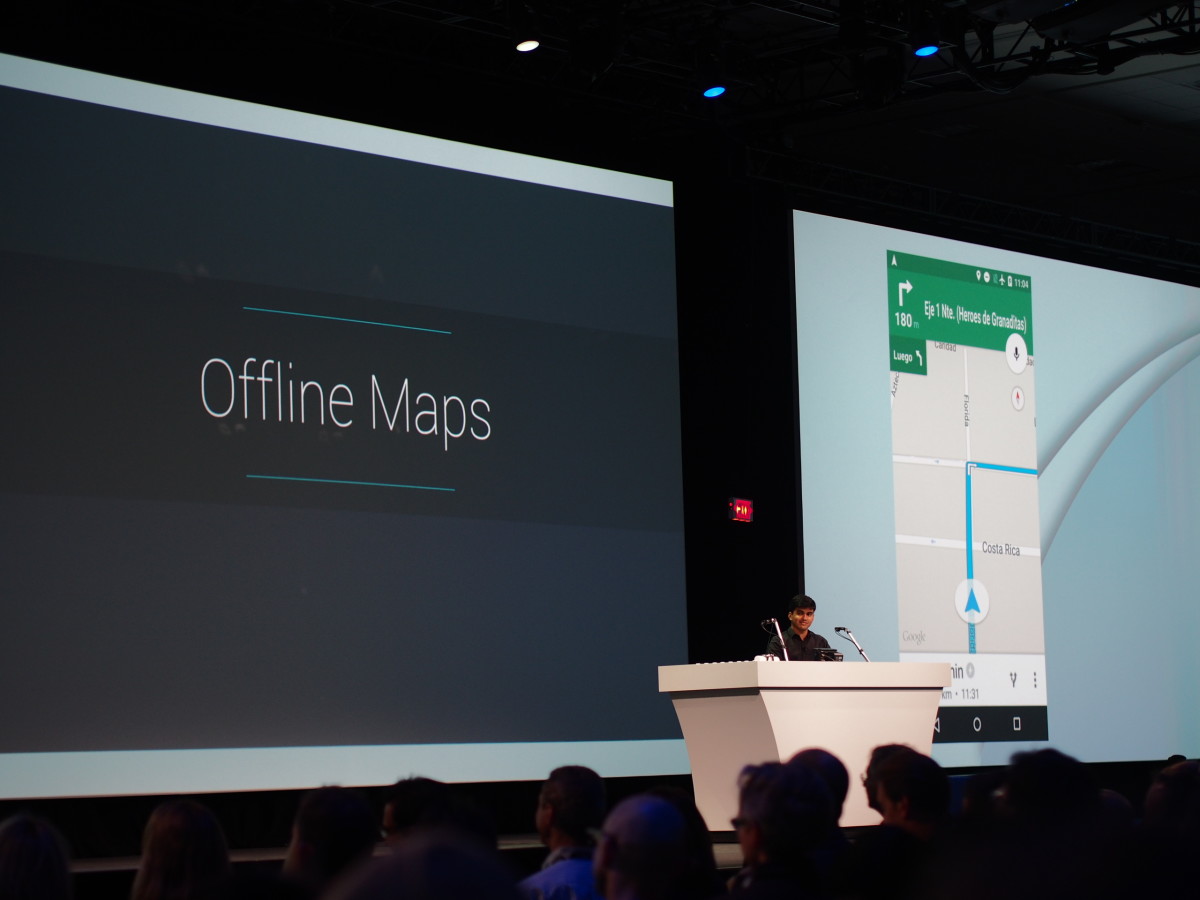

Offline connectivity

Because Android is available in so many parts of the world, Google talked about its efforts to make its apps available in countries where internet is limited. For example, it launched YouTube Offline in developing countries in the past few months, as well as offline support for Chrome.

Today, Google also introduced Offline Maps, allowing you to access Google Maps location search and directions without requiring an internet connection. It can also aggregate reviews so you can access this information entirely offline.

Offline Maps will be available “later this year.”

➤ Google announces offline Maps support, including navigation and reviews

Developer tools

Google quickly moved back to talking about developer stuff, introducing Android Studio 1.3 preview and Polymer 1.0. Android Studio offers full editing and debugging support for C++, and developers can access various SDKs via CocoaPods.

Additionally, Google will launch Cloud Test Lab to help automate app testing across 20 devices to provide crash reports.

Google will also bring Cloud Messaging to iOS, allowing users to subscribe to topics so they will receive only updates that are relevant to them. There are also a ton of new AdMob tools to help measure engagement and app install ads – including data like where your most valuable users come from. You can also test out your Play Store listing to see which graphics or copy work best.

Speaking of Play Store, Google is also reorganizing the way it displays search results. For example, searching for “shopping” no longer brings up the most popular shopping apps, but relevant sections such as “Coupons” and “Fashion.”

Parents can also look for a Family Star badge to find content that’s kid-friendly. They can even look up popular children’s entertainment characters and search for related content.

➤ Google is embracing CocoaPods to bring its services to iOS developers

➤ Google launches Universal App Campaigns for AdWords, updates Analytics and AdMob

➤ Google is making it easier to find family-friendly content on the Play Store

Android Nanodegree

Google announced a partnership with Udacity to offer a six-month course that teaches developers all things Android. The course costs $200 per semester and leads to what’s called an “Android Nanodegree.”

➤ Android developer nanodegree now available from Google and Udacity

Virtual Reality

Google Cardboard, announced during last year’s conference, is getting an update: the Google Cardboard SDK for Unity will now support iOS. There’s an improved viewer as well, which is easier to assemble overall.

There’s also a new “Expeditions” VR program that lets teachers control virtual field trip destinations from a tablet and lets students explore new places from a Google Cardboard VR system.

Finally, Google announced “Jump,” a camera rig system that will allow anyone to record VR video. Essentially, it puts a camera – any camera you’d like – in a ring to record in 360 degrees.

Google also partnered with GoPro to sell a Jump-ready camera rig this year. YouTube will support Jump videos, with non-stereoscopic videos available to try this summer.

➤ The new Google Cardboard will fit your giant phone

➤ Google is bringing virtual field trips to classrooms with Cardboard Expeditions

Update: Here are some of the highlights unveiled on the second day of Google I/O 2015:

➤ Google’s Chromecast is about to get a lot better for gaming, dual-screen apps and binge-watching shows

➤ Google unveils Project Soli, a radar-based wearable to control anything

➤ Google is partnering with Levi’s for its Project Jacquard smart fabric

➤ With Project Vault, Google wants to make you the password

Thanks for following along with us – let us know what you’re looking forward to the most following this I/O conference!
