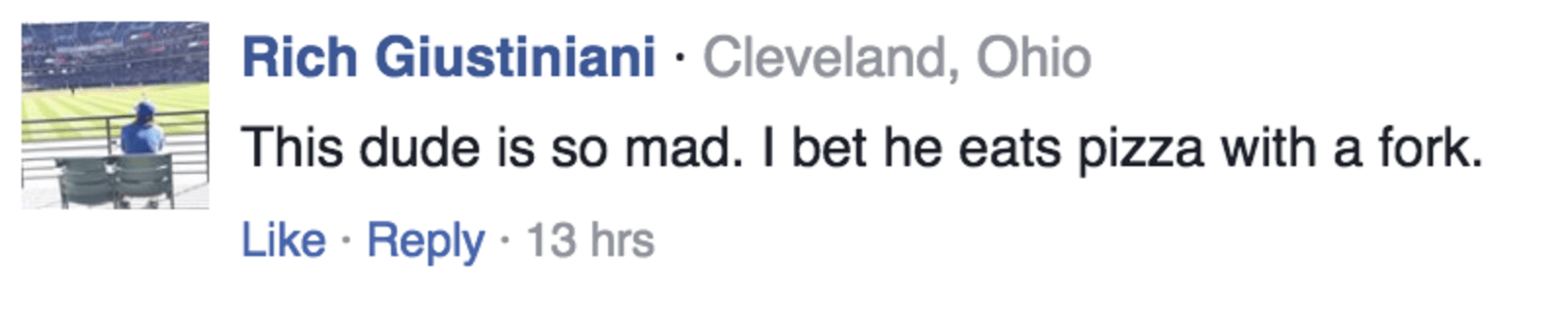

Trolls are everywhere online; we even have a few of our own. The problem, until now, has been correctly identifying what constitutes abusive language. As it turns out, even humans are pretty terrible at identifying abuse.

Yahoo’s newest algorithm is different. In 90 percent of test cases, it correctly identified an abusive comment, a level of accuracy unmatched by humans and by other state-of-the-art deep learning approaches.

The company used a combination of machine learning and crowdsourced suggestions to build the algorithm after trawling the depths of the comment sections at Yahoo News and Finance (basically everything you’re told not to do online). This algorithm wasn’t looking for any particular keywords, however. Most AI targets specific words thought to be abusive, which, depending on the filtering sensitivity, often leads either to sarcasm being flagged as abuse or to genuinely abusive comments going unflagged.

Yahoo’s AI is different. It’s not looking for specific words, but words in combination with others, as well as overall post length, punctuation and other metrics to determine what constitutes abuse. Trained humans also rated the comments, a method used to help train the AI to spot the subtle nuances that it would have missed with a word-specific approach.
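To make the idea concrete, here is a minimal sketch of what feature extraction along these lines might look like: single words, word pairs, post length and punctuation counts. It is an illustration only, not Yahoo’s actual feature set; the function name `extract_features` and every feature name in it are assumptions made up for this example.

```python
import re
from collections import Counter


def extract_features(comment: str) -> dict:
    """Turn one comment into a bag of illustrative features.

    Hypothetical sketch: the feature names are invented for this example
    and are not taken from Yahoo's system.
    """
    # Lowercased word tokens (letters and apostrophes only).
    tokens = re.findall(r"[a-z']+", comment.lower())

    features = Counter()

    # Single words, as a keyword-style filter would use on their own.
    for tok in tokens:
        features[f"uni={tok}"] += 1

    # Adjacent word pairs, so words are seen in combination with others.
    for first, second in zip(tokens, tokens[1:]):
        features[f"bi={first} {second}"] += 1

    # Overall post length.
    features["len_chars"] = len(comment)
    features["len_tokens"] = len(tokens)

    # Punctuation counts.
    features["n_exclaim"] = comment.count("!")
    features["n_question"] = comment.count("?")

    # Share of letters that are uppercase (a rough "shouting" signal).
    letters = [c for c in comment if c.isalpha()]
    upper = sum(1 for c in letters if c.isupper())
    features["upper_ratio"] = upper / len(letters) if letters else 0.0

    return dict(features)


if __name__ == "__main__":
    for name, value in sorted(extract_features("You are SO smart!!").items()):
        print(name, value)
```

A dictionary like this would then be fed, together with the human ratings, to a classifier that learns which combinations tend to signal abuse. That learning step is left out here.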

As a third step, Yahoo crowdsourced additional ratings through Amazon’s Mechanical Turk from people who weren’t professional comment moderators. This group was the worst at spotting abuse.

The algorithm still hasn’t been used outside of Yahoo’s existing dataset, but the company is confident that it’ll be a major step forward in the field of natural language processing. It’s already off to a good start, but the only real test is to let it loose on comments it hasn’t seen before.
