Last night, the Internet Archive threw a party; hundreds of Internet Archive supporters, volunteers, and staff celebrated that the site had passed the 10,000,000,000,000,000 byte mark for archiving the Internet. As the non-profit digital library, known for its Wayback Machine service, points out, the organization has thus now saved 10 petabytes of cultural material.
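The byte count is easier to grasp once converted into larger units. The following is a minimal sketch of that arithmetic, assuming decimal (SI) units, where a petabyte is 10^15 bytes; the variable names are illustrative only. The binary equivalent is included purely for comparison.

```python
# Decimal (SI) byte units, assumed here because they match the article's figures.
BYTES_PER_GB = 10**9
BYTES_PER_TB = 10**12
BYTES_PER_PB = 10**15

archive_bytes = 10_000_000_000_000_000  # the byte count quoted in the article

petabytes = archive_bytes // BYTES_PER_PB
terabytes = archive_bytes // BYTES_PER_TB
gigabytes = archive_bytes // BYTES_PER_GB

# For comparison only: the same byte count in binary pebibytes (2**50 bytes each).
pebibytes = archive_bytes / 2**50

print(f"{petabytes} PB = {terabytes:,} TB = {gigabytes:,} GB")
print(f"roughly {pebibytes:.2f} PiB in binary units")
```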

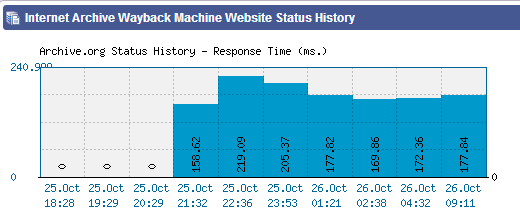

Put another way, that’s 10 million gigabytes or 10 thousand terabytes. You might be wondering why this news only came out today. There are two reasons. First, Archive.org posted about it today. Second, and this may be the real reason for the delay, Archive.org was down for a good chunk of yesterday.

What happened? This statement from the Archive provides a hint:

The only thing missing was electricity; the building lost all power just as the presentation was to begin. Thanks to the creativity of the Archive’s engineers and a couple of ridiculously long extension cords that reached a nearby house, the show went on.

Anyway, in light of the milestone achieved yesterday and announced today, the organization also announced two more achievements: the first complete literature of a people (Balinese) went online, and a “full 80 Terabyte web crawl” is being made available to researchers.

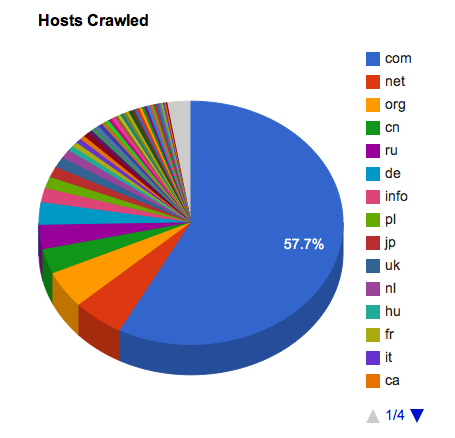

The Internet Archive says it is releasing the crawl because it wants to see how others might learn from all the content it accumulates. The crawl consists of WARC files containing captures of about 2.7 billion URIs from last year. The files contain text content and any media that the organization captured, including images, Flash, videos, and so on.
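For readers who have not met the format, a WARC file is a sequence of records, each with a version line, a block of named header fields, a blank line, and a content block whose size is given by `Content-Length`. The sketch below reads one uncompressed record using only the Python standard library. It is a simplified illustration, not the Archive's tooling: the function name `read_warc_record` and the sample record (URL, date, body) are made up for this example, the sample omits fields a real record would carry (such as `WARC-Record-ID`), and real crawl files are typically gzip-compressed and better handled with a dedicated library such as warcio.

```python
import io


def read_warc_record(stream):
    """Read one uncompressed WARC record.

    Returns (headers, payload), or None when the stream is exhausted.
    """
    line = stream.readline()
    while line in (b"\r\n", b"\n"):  # skip blank lines separating records
        line = stream.readline()
    if not line:
        return None
    if not line.startswith(b"WARC/"):
        raise ValueError("not a WARC record: missing version line")

    headers = {}
    while True:
        line = stream.readline()
        if line in (b"\r\n", b"\n", b""):  # blank line ends the header block
            break
        name, _, value = line.decode("utf-8").partition(":")
        headers[name.strip()] = value.strip()

    payload = stream.read(int(headers["Content-Length"]))
    return headers, payload


# Hypothetical sample record, built in memory for illustration only.
body = b"HTTP/1.1 200 OK\r\nContent-Type: text/html\r\n\r\n<html>hello</html>"
sample = b"".join([
    b"WARC/1.0\r\n",
    b"WARC-Type: response\r\n",
    b"WARC-Target-URI: http://example.com/\r\n",
    b"WARC-Date: 2011-03-09T00:00:00Z\r\n",
    b"Content-Length: " + str(len(body)).encode("ascii") + b"\r\n",
    b"\r\n",
    body,
    b"\r\n\r\n",
])

stream = io.BytesIO(sample)
headers, payload = read_warc_record(stream)
end_of_stream = read_warc_record(stream)  # None: no further records

print(headers["WARC-Type"], headers["WARC-Target-URI"], len(payload))
```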

Here are the technical details:

Crawl start date: 09 March, 2011

Crawl end date: 23 December, 2011

Number of captures: 2,713,676,341

Number of unique URLs: 2,273,840,159

Number of hosts: 29,032,069
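A few derived figures help put these numbers in context. The sketch below is back-of-the-envelope arithmetic on the published statistics, not anything reported by the Archive; the average capture size in particular assumes the nominal "80 Terabyte" figure means 80 × 10^12 bytes, so treat it as a rough estimate.

```python
from datetime import date

# Figures as published for the crawl.
crawl_start = date(2011, 3, 9)
crawl_end = date(2011, 12, 23)
captures = 2_713_676_341
unique_urls = 2_273_840_159
hosts = 29_032_069

# Assumption: the nominal 80 terabytes, taken as decimal terabytes.
crawl_bytes = 80 * 10**12

crawl_days = (crawl_end - crawl_start).days
repeat_captures = captures - unique_urls        # captures beyond the first per URL
captures_per_url = captures / unique_urls
captures_per_host = captures / hosts
captures_per_day = captures / crawl_days
avg_capture_bytes = crawl_bytes / captures      # rough estimate only

print(f"crawl length:       {crawl_days} days")
print(f"repeat captures:    {repeat_captures:,}")
print(f"captures per URL:   {captures_per_url:.2f}")
print(f"captures per host:  {captures_per_host:.1f}")
print(f"captures per day:   {captures_per_day:,.0f}")
print(f"avg capture size:   {avg_capture_bytes / 1000:.1f} KB (estimate)")
```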

The seed list for this particular crawl was a list of Alexa’s top 1 million Web sites, retrieved close to the crawl start date. The scope of the crawl was only limited by a few manually excluded sites.

Some points to note, in the Archive’s own words:

However this was a somewhat experimental crawl for us, as we were using newly minted software to feed URLs to the crawlers, and we know there were some operational issues with it. For example, in many cases we may not have crawled all of the embedded and linked objects in a page since the URLs for these resources were added into queues that quickly grew bigger than the intended size of the crawl (and therefore we never got to them). We also included repeated crawls of some Argentinian government sites, so looking at results by country will be somewhat skewed.

If you want to get access to the crawl data, contact info[at]archive[dot]org. The organization isn’t making any promises, but it does say it will consider everyone who explains why they want to use this particular archive.

Image credit: Jenny Sliwinski
