The hand-tracking Leap Motion hasn’t sparked the interest of the average person. But in the hands of savvy startups with an eye on improving the lives and safety of others, it looks like it’s found its calling.

The startups presented at the first Leap Axlr8r demo day, during which each of the teams used the Leap Motion hardware for their apps. The accelerator is put together by Founders Fund and SOSventures, and based on what was presented, we should expect more demo days from Leap Axlr8r featuring companies powered by the Leap Motion.

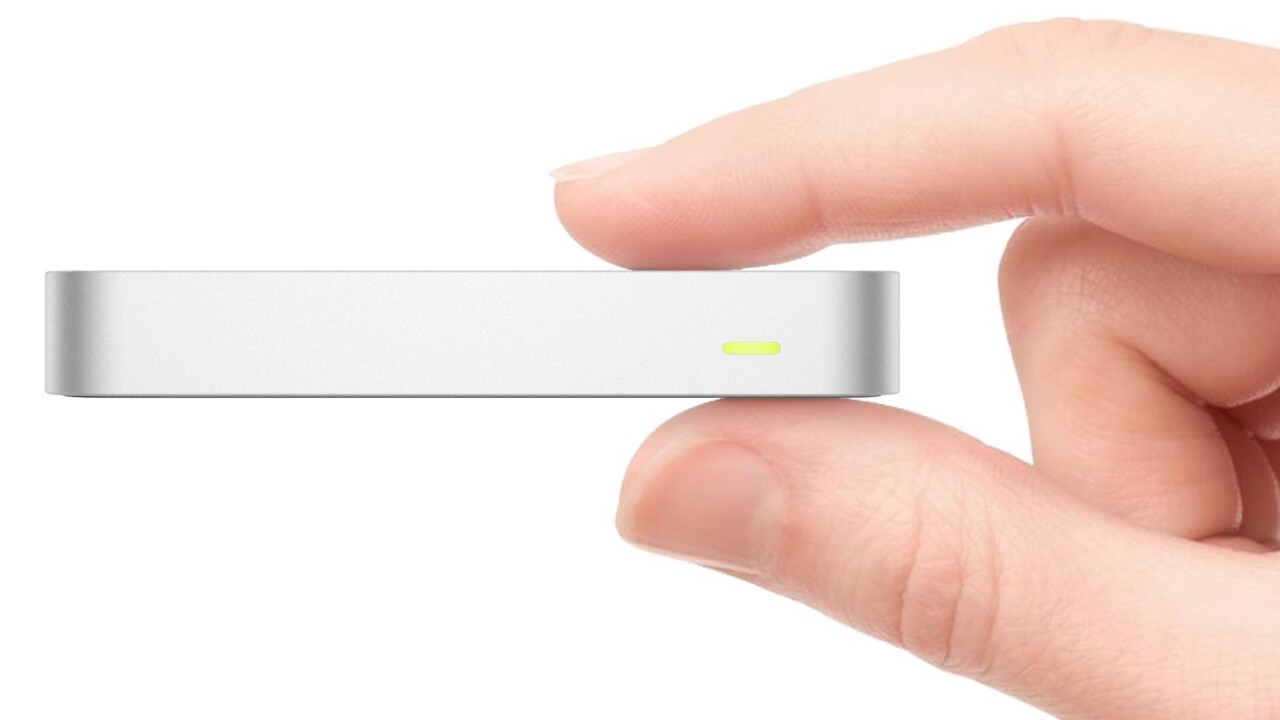

The technology behind the Leap Motion is impressive. It’s outstanding at tracking your hands and individual fingers. The bad thing is that keeping your hand and arm poised above a sensor to interact with a computer or application is tiring. The good thing is that at the Leap AXLR8R demo day, three startups looked beyond the expected drawing and navigation applications and presented technology that, while niche, shows the true power of the Leap Motion.

From translating American Sign Language to keeping troops out of harm’s way, these startups are doing more than just creating one-trick-pony apps with the hopes of being purchased by Google, Yahoo or Facebook. These companies are bundling software and hardware to help people. And, of course, make money along the way.

Mirror Training

The remote-control robots the armed forces use to defuse bombs are great at getting to a bomb. Once there, though, the robotic arm that places the disruptor charge (a small explosive that disables the actual bomb) can be cumbersome. It uses controls with levers to maneuver the hand-like claw to position the charge. It’s slow, tedious work when timing can mean the difference between life and death.

Mirror Training wants to speed up the process and has created a system that tracks a soldier’s hand to control the robot hand. The act of moving the robot’s hand and grabbing an object is as simple as moving your own hand above a Leap Motion. A camera on the robot hand is used to see the action.

“No one has to tell you how to use your arm,” said company president Liz Alessi when talking about how easy it is to use the system.

The AARC (Anthropomorphic Augmented Reality Controller) is plug and play and will work with all current and future robotic arm systems. It’s currently being tested by ROTC students, and the company is hoping to have the system ready to sell by the end of the year.

Motion Savvy

Communication between the deaf community and hearing community is still difficult. The average hearing individual doesn’t understand sign language and is unlikely to learn. Soon, that might not be an issue. The Motion Savvy tracker and translator solves the communication barrier by converting the gestures of sign language into speech. Frankly, it’s one of the most impressive uses of motion tracking technology I’ve seen. Plus, it’s genuinely useful.

“We’re doing this to change the world and doing this to change our lives,” says CEO and co-founder Ryan Hait-Campbell.

The Motion Savvy tablet case houses a Leap Motion and enables two-way communication between the deaf and hearing. Hand gestures are translated into speech, and the spoken word is translated into text that can be read on the tablet’s screen. Hait-Campbell cites communication issues as the underlying cause of high unemployment among the deaf. A World Health Organization study reports not only higher unemployment among the deaf population but also that, in some countries, deaf children are less likely to attend school.

The Motion Savvy team hopes to sell the case to governments in addition to individuals. The whole system will cost $600 with a $19.99-a-month subscription. The system is currently in the alpha stages, and the team hopes to hit public beta in January 2015, with a smartphone case expected in February 2016 for even more portability.

Diplopia

James Blaha was born with a lazy eye. As a child he went through the agonizing treatments of wearing an eye patch or staring down a string of balls. Neither worked. Then he saw the Oculus Rift and decided to take it upon himself to create a game that would give him the ability to see in 3D, something traditional therapy failed to accomplish.

“I hacked my own brain and it worked. And it didn’t just work for me, it worked for other people too,” Blaha said during the demo of his game, Diplopia. Instead of presenting a stereoscopic image like other 3D games, it presents each eye with different elements of the game. By doing so, it forces the lazy eye to work in tandem with the regular eye, and Blaha says he was able to see 3D for the first time after playing the game.

Blaha reports that every person who has played Diplopia for more than three hours has shown a measured improvement and has developed the ability to see 3D in real life. The game treats both strabismus (crossed eye) and amblyopia (lazy eye).

In addition to the Oculus Rift, the game uses the Leap Motion as a controller. It’s a convergence of two impressive pieces of technology that may not gain wide acceptance, but can offer up possibilities beyond their original intention.

It’s the natural evolution of technology: something more than what the originators intended. In these cases, that evolution has led to helping others and making their lives easier, which is the point of technology in the first place.
