What if an algorithm could make a run-of-the-mill photo look like a Van Gogh or a Picasso?

A new paper posted to the preprint server arXiv by researchers from the University of Tübingen in Germany, entitled "A Neural Algorithm of Artistic Style," may have a dry scientific name, but it points to exactly this inspired outcome.

Note that this technology is not ready for prime time yet and is not included in any available app. Still, the demo illuminates what could be possible with Deep Neural Network vision models, the same kind of networks that are trained to recognize objects in images.

The paper offers the following explanation of purpose:

Here we introduce an artificial system based on a Deep Neural Network that creates artistic images of high perceptual quality. The system uses neural representations to separate and recombine content and style of arbitrary images, providing a neural algorithm for the creation of artistic images.

Moreover, in light of the striking similarities between performance-optimised artificial neural networks and biological vision, our work offers a path forward to an algorithmic understanding of how humans create and perceive artistic imagery.
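For the technically curious, the core idea can be sketched in a few lines. In the paper's formulation, an image's "content" is the set of raw feature maps a convolutional network (the 19-layer VGG network, in the paper) produces for it, while its "style" is captured by Gram matrices: the correlations between feature channels, which discard where things sit in the image. The NumPy sketch below is a simplified illustration under stated assumptions, not the authors' code. It assumes the feature maps for one layer have already been extracted, and the function names and default weights are our own. The paper itself combines several layers and iteratively adjusts a white-noise image until this weighted loss is small.

```python
import numpy as np


def gram_matrix(features):
    """Style representation: correlations between feature channels.

    `features` has shape (channels, height, width), e.g. one CNN layer's output.
    """
    c, h, w = features.shape
    flat = features.reshape(c, h * w)  # one row per channel, spatial positions flattened
    return flat @ flat.T               # (channels, channels); spatial layout is summed away


def content_loss(generated, content):
    # Squared error between raw feature maps: preserves *where* things are.
    return 0.5 * np.sum((generated - content) ** 2)


def style_loss(generated, style):
    # Squared error between Gram matrices, normalized by layer size
    # (N channels, M spatial positions) as in the paper's per-layer style term.
    n, h, w = generated.shape
    m = h * w
    diff = gram_matrix(generated) - gram_matrix(style)
    return np.sum(diff ** 2) / (4.0 * n ** 2 * m ** 2)


def total_loss(generated, content, style, alpha=1.0, beta=1e3):
    # alpha/beta trades content fidelity against style; the defaults give a
    # ratio of 1e-3, an illustrative choice in the range the paper reports.
    return alpha * content_loss(generated, content) + beta * style_loss(generated, style)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.standard_normal((8, 4, 4))  # stand-in for real CNN activations
    flipped = feats[:, ::-1, ::-1]          # same values, rearranged in space
    print(np.isclose(style_loss(flipped, feats), 0.0))  # True: style unchanged
    print(content_loss(flipped, feats) > 0)             # True: content changed
```

Flipping a feature map in space leaves its Gram matrix unchanged, so the style loss stays at (essentially) zero while the content loss jumps. That is the separation of content and style in miniature.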

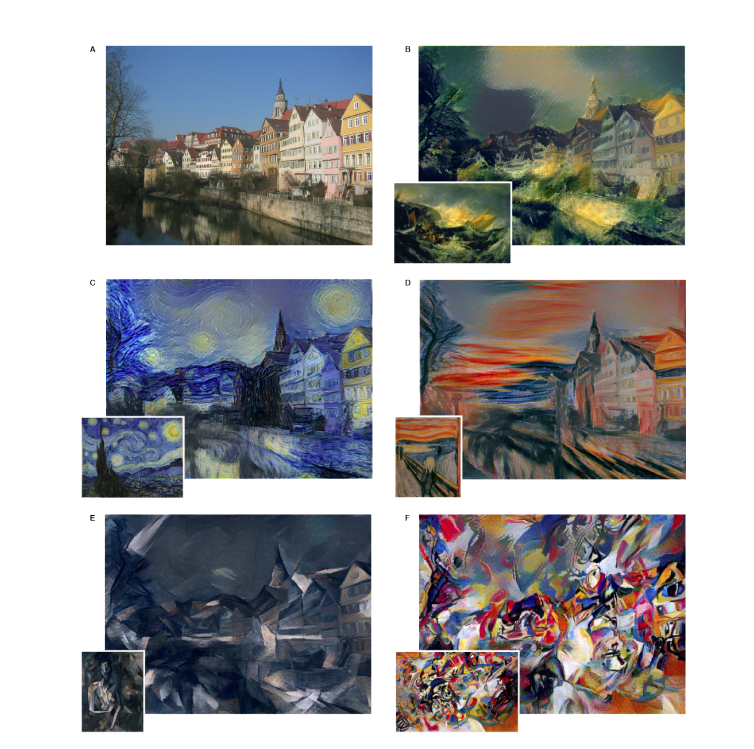

To demonstrate, the group took a simple street photo that anyone could have shot and used the algorithm to render it in the styles of several artistic masters: Vincent van Gogh, Pablo Picasso, Wassily Kandinsky, J.M.W. Turner and Edvard Munch.

Why do this? The researchers explain:

In general, our method of synthesising images that mix content and style from different sources, provides a new, fascinating tool to study the perception and neural representation of art, style and content-independent image appearance in general.

We can design novel stimuli that introduce two independent, perceptually meaningful sources of variation: the appearance and the content of an image. We envision that this will be useful for a wide range of experimental studies concerning visual perception ranging from psychophysics over functional imaging to even electrophysiological neural recordings.

Many scientists are fascinated by the capabilities of Deep Neural Networks. Google has been exploring related territory with DeepDream, and Realmac recently let loose an experimental app called Deep Dreamer, which borrows from Google's code, to explore various aspects of this technology.

More broadly, computers that can interpret and categorize visual data would help us analyze the vast quantities of information bombarding us every day. Moreover, the demonstrated separation of appearance and content could pave the way for computers to "see" more like humans do.

You can read the paper for yourself and fantasize about your next career.

➤ This New Algorithm Gives Photos the Look of Famous Paintings [via Arxiv.org]
