Why should a flying robot need to see the world the same way we human beings do?

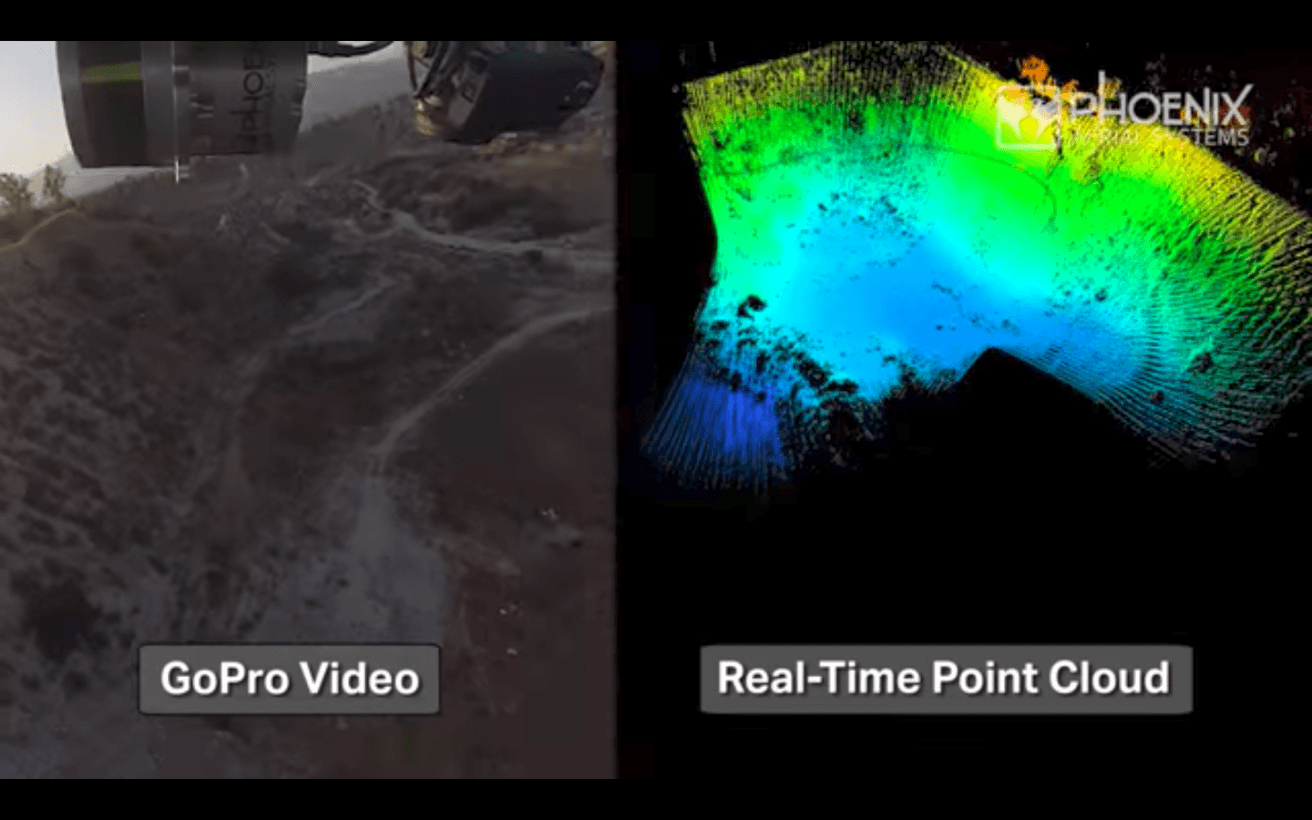

Conventional cameras only scratch the surface of what’s possible for drone vision systems. The simple truth is that there are other categories of visual sensors ready to grant autonomous platforms new ways of interfacing with the world. LIDAR, for example, uses lasers to build full environmental awareness in total darkness, and thermal imaging has applications ranging from law enforcement to agriculture.

These categories are still being expanded today, most recently by engineers at Stanford University and the University of California San Diego. This team has developed a single-lens light field camera with a wide field of view, a first-of-its-kind “four-dimensional” camera that captures images containing an extra layer of information. This novel way of capturing the world makes the camera especially well suited to drone navigation tasks. Rather than consider light as coming through a tiny peephole, a light field camera treats light as if it is coming through a window.

“[Our camera] makes it much easier to perform traditionally complex tasks like estimating camera motion and scene shape, and detecting obstacles and moving objects,” says Donald Dansereau, Stanford postdoctoral scholar. “Conventional approaches to these problems tend to be iterative, power-hungry, and non-deterministic, meaning you’re never sure when they’ll finish. Using our camera allows low-power, closed-form approaches that have a fixed and fast runtime.”

In other words, the team’s camera eases the burden on drone navigation software, reducing the latency and flight errors that threaten safe navigation and landing in a complex environment. By changing the way drones see the world, the camera from Dansereau’s team lets them fly in a faster, simpler, better-behaved manner.

Different vision systems can meet other goals. Operating in partnership with Hewlett-Packard Enterprise, the small San Francisco-based company CrowdOptic has developed an airborne camera system that ascertains the GPS coordinates of grounded objects within its field of vision. Imagine a flying point-and-shoot camera that, instead of generating a still photograph, yields the location data for an object of interest.
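To make the idea concrete, here is a minimal sketch of how a camera's pointing direction could be turned into a ground location with basic trigonometry. This is not CrowdOptic's method, which the article does not describe. It assumes flat ground, a known altitude above that ground, a known compass heading and downward tilt for the camera, and distances short enough for a small-offset approximation. The function name and its parameters are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, used for the small-offset approximation


def locate_ground_target(lat_deg, lon_deg, altitude_m, heading_deg, depression_deg):
    """Estimate the latitude/longitude of the ground point a camera is aimed at.

    Assumptions (illustrative only): flat ground, altitude measured above that
    ground, heading in degrees clockwise from true north, and depression angle
    in degrees below the horizon.
    """
    if not 0.0 < depression_deg <= 90.0:
        raise ValueError("depression angle must be in (0, 90] degrees")

    # Horizontal distance from the point below the drone to the target.
    ground_range_m = altitude_m / math.tan(math.radians(depression_deg))

    # Split that distance into north and east components along the heading.
    heading_rad = math.radians(heading_deg)
    north_m = ground_range_m * math.cos(heading_rad)
    east_m = ground_range_m * math.sin(heading_rad)

    # Convert metre offsets to degree offsets (valid for short distances).
    dlat_deg = math.degrees(north_m / EARTH_RADIUS_M)
    dlon_deg = math.degrees(east_m / (EARTH_RADIUS_M * math.cos(math.radians(lat_deg))))

    return lat_deg + dlat_deg, lon_deg + dlon_deg


# A drone 100 m up, camera pointed due north and tilted 45 degrees down,
# is looking at a spot roughly 100 m north of its own position.
target_lat, target_lon = locate_ground_target(37.7749, -122.4194, 100.0, 0.0, 45.0)
```

A real system would also have to account for terrain height, lens geometry, and errors in the drone's own position and orientation, all of which this sketch ignores.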

“We have something very special,” says CrowdOptic CEO Jon Fisher. “We’ve solved every meaningful trigonometry problem related to drones and vision.” To hear him tell it, drones will need to answer the same question posed by driverless cars: how are these things going to relate to each other? It’s a question not only of safety, of keeping unpiloted drones from colliding with each other, but also of maximizing their potential by letting them operate at capacity.

The smartphone industry has ushered in a renaissance for sensor technology; never before have these electronic components been so simultaneously cheap, plentiful, and effective. Drone enthusiasts are surely benefitting from the boom — the more “high-res” their interactions with the world can be, the more likely it is that their flying machines will eventually interact with and coordinate with other devices.

As drone sensor quality improves, get ready for the Internet of Things to learn how to fly.
