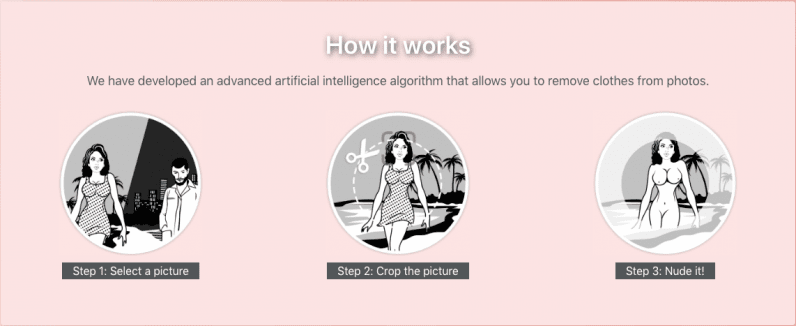

If you thought creating a nude photo or deepfake of someone was difficult, think again. An app called DeepNude uses a scary AI that can create a nude picture from a photo of a fully clothed woman.

First reported by Vice, the Windows and Linux app launched in March. DeepNude can take any clothed photo of a woman and use its AI to turn it into a realistic nude picture.

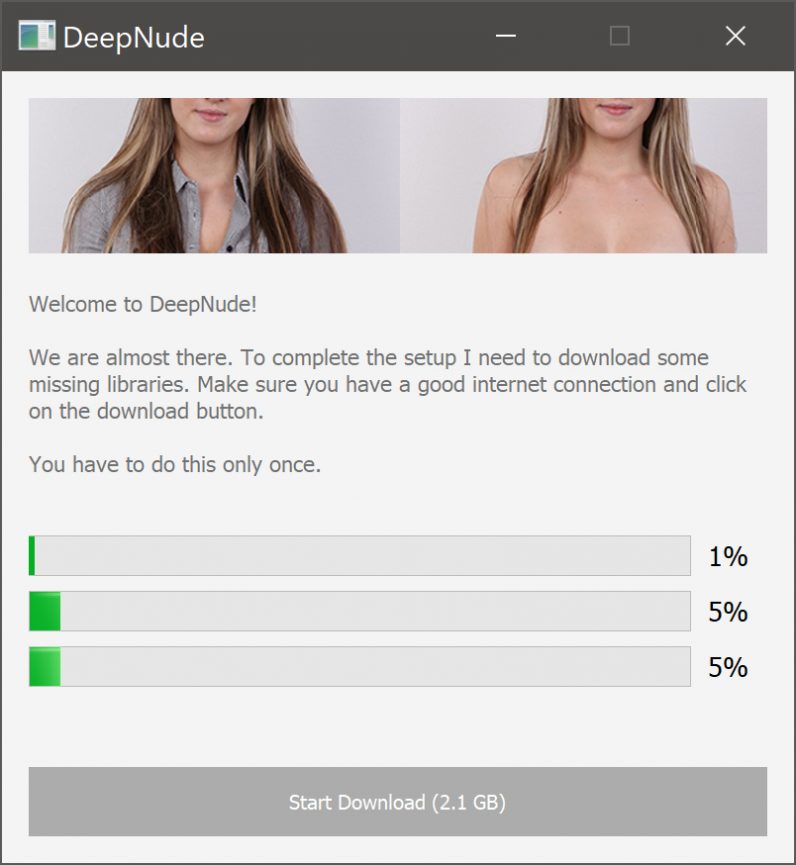

The app is free to download and try, but you’ll need to pay $50 for the ability to remove its large watermark and export the results. It works offline and requires a 155MB download, as well as 2.1GB of additional files for its libraries before you can use it.

We tried the free version of the app with a few pictures, and it worked to some degree (full-length dresses threw it for a loop). Still, the results are sometimes convincing enough for bad actors to use them to harass people.

The app’s unnamed creator told Vice that the AI is based on Pix2Pix, an image-to-image translation algorithm developed by researchers at the University of California, Berkeley, which can, for example, turn black-and-white images into color ones. He trained the model on more than 10,000 images of nude women and hopes to create a male version as well.

He told the publication that he created the app out of curiosity and just for “fun”:

> I’m not a voyeur, I’m a technology enthusiast. Continuing to improve the algorithm. Recently, also due to previous failures (other startups) and economic problems, I asked myself if I could have an economic return from this algorithm. That’s why I created DeepNude.

The creator defended himself by saying that anyone could do the same with a few Photoshop tutorials. But his statement suggests he doesn’t grasp that his app lets people harm women by manipulating their photos far more easily than ever before. We’ve emailed DeepNude to learn more about the algorithm and the creator’s intentions.

Deepfakes have been around for a couple of years and have mainly been used to create porn videos featuring women and celebrities. We’ve recently covered advancements in this field that let you edit a video just by altering its transcript, or create a talking-head video from a single image.

Although researchers claim these new algorithms will help professionals in various ways, examples like DeepNude show that, for now, the technology is causing more harm and chaos than benefit.

Update: Readers correctly identified we should not have been linking to this awful, awful app. That was irresponsible. We’ve removed the links, scrubbed the cache, and very much regret the error.
