Theoretical physics isn’t the easiest field in science to translate into layman’s terms. Until recently it’s solely been the domain of geniuses like the late Stephen Hawking and fictional characters such as The Big Bang Theory’s Sheldon Cooper. As companies like Google, IBM, and Intel work to build quantum computer systems that offer supremacy over binary computers, it’s a good time to learn some basic terms and concepts. We’ve got you covered, fam.

Quantum computers are devices capable of performing computations using quantum bits, or qubits. You can learn why they’re important here, and read some fun and wacky facts about them here.

Qubits

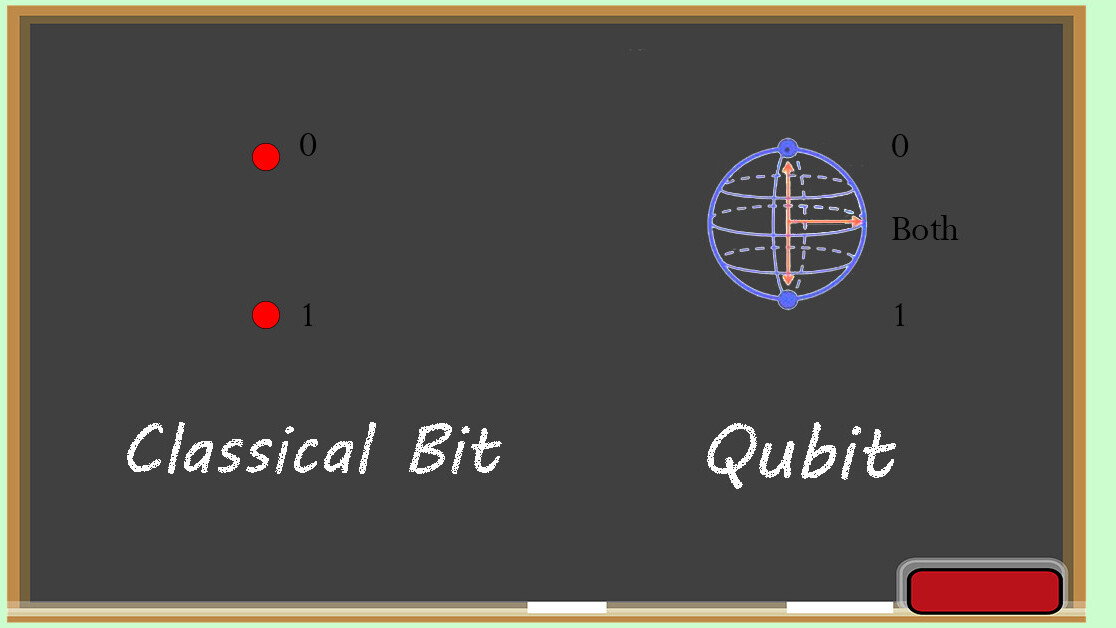

The first thing we need to understand is what a qubit actually is. A “classical computer,” like the one you’re reading this on (whether desktop, laptop, tablet, or phone), is also referred to as a “binary computer” because all of the functions it performs are based on ones and zeros.

On a binary computer the processor uses transistors to perform calculations. Each transistor can be on or off, which indicates the one or zero used to compute the next step in a program or algorithm.

There’s more to it than that, but the important thing to know about binary computers is that the ones and zeros they use as the basis for computations are called “bits.”

Quantum computers don’t use bits; they use qubits. Qubits, aside from sounding way cooler, have extra functions that bits don’t. Instead of only being represented as a one or zero, a qubit can actually be a blend of both at the same time. One common type, the “spin qubit,” stores its value in the spin of a particle such as an electron. Instead of ones or zeros, its states are described as “up,” “down,” or a mix of both, though any measurement only ever returns up or down.

Superposition

Qubits can be more than one thing at a time because of a strange phenomenon called superposition. Quantum superposition in qubits can be explained by flipping a coin. We know that the coin will land in one of two states: heads or tails. This is how binary computers think. While the coin is still spinning in the air, assuming your eye isn’t quick enough to ‘observe’ the actual state it’s in, you can think of it as being in both states at the same time. Essentially, until the coin lands it has to be considered both heads and tails simultaneously. It’s only an analogy, of course: a real coin always has a definite face, while a qubit truly doesn’t settle on a value until it’s measured.
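To make the coin analogy a bit more concrete, here is a minimal Python sketch of a single simulated qubit. It assumes the standard textbook picture, in which a qubit is described by two numbers called amplitudes and the chance of reading a zero or a one is the square of each amplitude’s size. The function names are made up for illustration, and an ordinary computer is only imitating the randomness here.

```python
import math
import random

def equal_superposition():
    """Amplitudes for a qubit that is equally likely to read as 0 or 1."""
    amp = 1 / math.sqrt(2)
    return (amp, amp)

def measurement_probabilities(state):
    """Chance of reading 0 and of reading 1: the squared size of each amplitude."""
    a0, a1 = state
    return (abs(a0) ** 2, abs(a1) ** 2)

def measure(state, rng=random):
    """Simulate one observation; the result is always a plain 0 or 1."""
    p0, _ = measurement_probabilities(state)
    return 0 if rng.random() < p0 else 1

if __name__ == "__main__":
    coin = equal_superposition()
    print(measurement_probabilities(coin))      # two values, each close to 0.5
    print([measure(coin) for _ in range(10)])   # a random mix of 0s and 1s
```

Like the spinning coin, the simulated qubit carries both possibilities until `measure` is called, at which point you only ever get a plain zero or one back.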

A clever scientist by the name of Schrödinger illustrated this phenomenon with a famous thought experiment, in which a cat sealed in a box has to be considered both alive and dead at the same time until someone opens the box and looks.

The observer effect

Qubits work on the same principle. A related idea, often called the “observer effect,” holds that measuring a quantum particle changes how it behaves: left unobserved it can act like a wave, but once it’s measured it acts like a particle in one definite state. Basically the universe acts one way when we’re looking, another way when we aren’t. This means quantum computers, using their qubits, can simulate the subatomic particles of the universe in a way that’s actually natural: they speak the same language as an electron or proton.
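The idea that looking changes the outcome can be sketched in a few lines of Python. This is a toy model, not how real quantum hardware is programmed: it assumes the textbook rule that a measurement picks 0 or 1 at random and then leaves the qubit in exactly that state, so asking again gives the same answer. The names `measure_and_collapse` and `state` are hypothetical.

```python
import math
import random

def measure_and_collapse(state, rng=random):
    """Observe a simulated qubit.

    `state` is a pair of amplitudes (a0, a1). Returns the outcome (0 or 1)
    and the state the qubit is left in afterwards.
    """
    a0, a1 = state
    p0 = abs(a0) ** 2 / (abs(a0) ** 2 + abs(a1) ** 2)
    if rng.random() < p0:
        return 0, (1.0, 0.0)   # the superposition is gone: definitely 0
    return 1, (0.0, 1.0)       # the superposition is gone: definitely 1

if __name__ == "__main__":
    qubit = (1 / math.sqrt(2), 1 / math.sqrt(2))  # "both" until observed
    first, qubit = measure_and_collapse(qubit)
    second, qubit = measure_and_collapse(qubit)
    print(first == second)  # True: the second look agrees with the first
```

The first look is a coin toss, but every look after that just repeats the first answer, because the act of measuring replaced the “both” state with a definite one.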

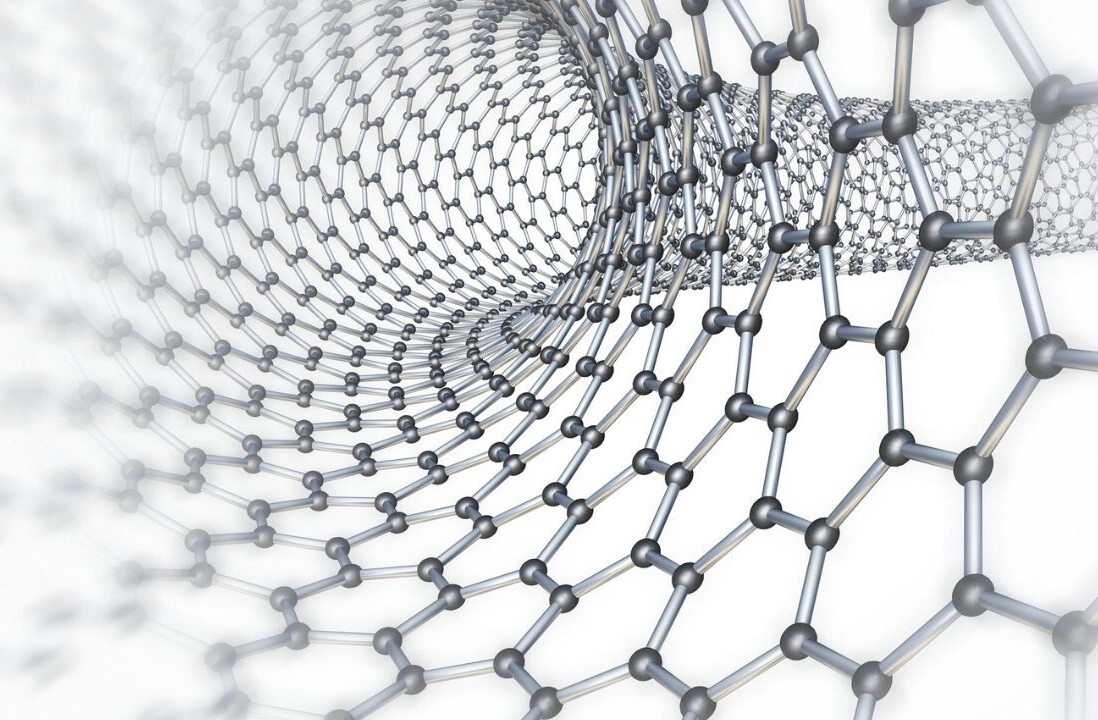

Different companies are approaching qubits in different ways because, as of right now, working with them is incredibly difficult. Since observing them changes their state, and using them creates noise (the more qubits you have, the more errors you get), measuring them is challenging to say the least.

This challenge is exacerbated by the fact that most quantum processors have to be kept at temperatures near absolute zero (colder than space) and require an amount of power that is unsustainably high for the quality of computations. Right now, quantum computers aren’t worth the trouble and money they take to build and operate.

In the future, however, they’ll change our entire understanding of biology, chemistry, and physics. Simulations at the molecular level could be conducted that actually imitate physical concepts in the universe we’ve never been able to reproduce or study.

Quantum Supremacy

For quantum computers to become useful to society we’ll have to achieve certain milestones first. The point at which a quantum computer can process information and perform calculations that a binary computer can’t, at least not in any practical amount of time, is called quantum supremacy.

Quantum supremacy isn’t all fun and games, though; it presents another set of problems. When quantum computers are fully functional, even modest systems in the 100-qubit range may be able to cut through binary security like a hot knife through butter.

This is because those qubits, which can be two things at once, can represent many possible solutions to a problem at once. They don’t have to step through binary logic like “if one thing happens do this but if another thing happens do something else.” A group of qubits in superposition can hold both branches at the same time, and a well-designed quantum algorithm then makes the best result the most likely one to show up when the qubits are observed.
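One way to get a feel for why even a modest number of qubits is a big deal is to count how many numbers a binary computer would need just to keep track of them. The sketch below assumes the standard result that fully describing n qubits takes 2^n amplitudes. The function name is hypothetical, and the count shows how hard qubits are to imitate, not how fast any particular problem gets solved.

```python
def amplitudes_needed(num_qubits):
    """How many amplitudes it takes to fully describe `num_qubits` qubits."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return 2 ** num_qubits

if __name__ == "__main__":
    for n in (1, 2, 10, 50, 100):
        print(f"{n:>3} qubits -> {amplitudes_needed(n):,} amplitudes")
```

Each added qubit doubles the count, which is why the numbers get out of hand so quickly.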

Currently there’s a lot of buzz about quantum computers, and rightfully so. Google is pretty sure its new Bristlecone processor will achieve quantum supremacy this year. And it’s hard to bet against Google or one of the other big tech companies. Especially when Intel has already put a quantum processor on a silicon chip and you can access IBM’s in the cloud right now.

No matter your feelings on quantum computers, qubits, or half-dead/half-alive cats, the odds are pretty good that quantum computers will follow the same path that IBM’s mainframes did. They’ll get smaller, faster, more powerful, and eventually we’ll all be using them, even if we don’t understand the science behind them.
