The first “this could change everything” AI story of the year comes to us in the form of (yet another) AI that’s supposed to read minds. This time, however, there’s no parlor trick. We’re one step closer to being able to broadcast our thoughts to a screen, thanks to artificial intelligence.

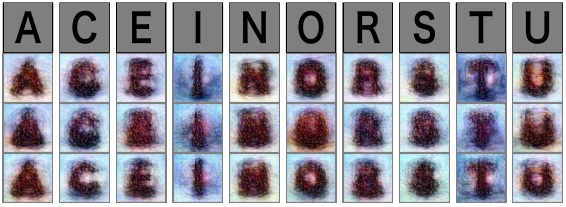

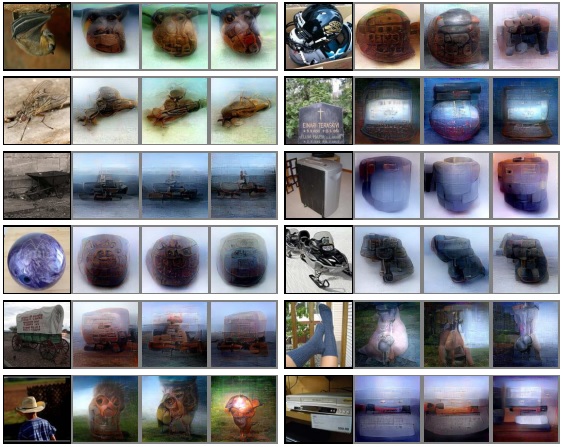

Japanese scientists have created an AI capable of reading a person’s brain activity and displaying an image based on what they’re looking at. If a person is staring at a picture of the letter “A,” the AI will create an image that resembles a fuzzy version of it. It’s actually reading the person’s mind – sort of.

The scientists published their paper, “Deep image reconstruction from human brain activity,” in which they state:

“Here, we present a novel approach, named deep image reconstruction, to visualize perceptual content from human brain activity.”

Over a 10-week period, the scientists showed images to human test subjects and recorded their brain activity. At times the subjects’ brains were monitored in real time while they were looking at the images; at other times they were asked to “recall” the images. The researchers used the brain scans to train a deep learning network to “decode” the data and visualize what the person was thinking about.

When these machines are learning to “read our minds,” they’re doing it exactly the way human psychics do: by guessing. If you think of the letter “A,” the computer doesn’t actually know what you’re thinking; it just knows what the brainwaves look like when you’re thinking it.

It visualizes your thoughts by guessing what output we want to see based on the data from your brain, unlike human “psychics,” whose guesses are based on, we’ll just say, less scientific data.
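To make that kind of guessing concrete, here is a minimal Python sketch of the idea, not the researchers’ actual system. It assumes made-up four-number “brain signals” for two letters, averages the recordings for each letter, and then guesses whichever average a new signal sits closest to. Every name and number in it (`train_centroids`, `guess`, the patterns themselves) is hypothetical.

```python
import math
import random

def train_centroids(recordings):
    """Average the recorded signal vectors for each label."""
    centroids = {}
    for label, vectors in recordings.items():
        count = len(vectors)
        centroids[label] = [sum(column) / count for column in zip(*vectors)]
    return centroids

def guess(centroids, signal):
    """Return the label whose average pattern is closest to the new signal."""
    return min(centroids, key=lambda label: math.dist(centroids[label], signal))

# Hypothetical, made-up signal patterns for two letters (not real brain data).
rng = random.Random(0)
true_patterns = {"A": [1.0, 0.0, 0.5, 0.2], "B": [0.0, 1.0, 0.1, 0.9]}

def noisy(pattern):
    """Simulate one imperfect recording of a pattern."""
    return [value + rng.gauss(0, 0.1) for value in pattern]

# "Training": 20 noisy recordings per letter.
recordings = {
    label: [noisy(pattern) for _ in range(20)]
    for label, pattern in true_patterns.items()
}
centroids = train_centroids(recordings)

# A fresh recording the program has never seen; it can only guess the letter.
new_signal = noisy(true_patterns["A"])
print(guess(centroids, new_signal))
```

The program never knows what the letter “A” is. It only knows which stored pattern the new numbers resemble most.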

AI is able to do a lot of guessing though — so far the field’s greatest trick has been to give AI the ability to ask and answer its own questions at mind-boggling speed.

The machine takes all the information it has – brainwaves in this case – and turns it into an image. It does this over and over until (without seeing the same image as the human, obviously) it can somewhat recreate that image.
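That “over and over” loop can be sketched in a few lines of Python. This is a toy under loud assumptions, not the method from the paper: a random matrix `W` stands in for whatever turns an image into a measurable signal, a 3x3 plus sign stands in for the picture the person saw, and the function names are invented for illustration. The reconstruction step never looks at the picture itself. It starts from flat gray and keeps nudging pixels until its own signal matches the recorded one.

```python
import random

rng = random.Random(1)
N_PIXELS, N_SIGNALS = 9, 6

# Hypothetical stand-in for "image -> measurable signal" (an assumption).
W = [[rng.uniform(-1, 1) for _ in range(N_PIXELS)] for _ in range(N_SIGNALS)]

def signal_of(image):
    """Turn a flat list of pixel values into a short signal vector."""
    return [sum(w * p for w, p in zip(row, image)) for row in W]

def mismatch(image, recorded):
    """Squared difference between the image's signal and the recorded one."""
    return sum((s - r) ** 2 for s, r in zip(signal_of(image), recorded))

def reconstruct(recorded, steps=2000, rate=0.02):
    """Start from flat gray and repeatedly nudge pixels to shrink the mismatch."""
    image = [0.5] * N_PIXELS
    for _ in range(steps):
        residual = [s - r for s, r in zip(signal_of(image), recorded)]
        for j in range(N_PIXELS):
            # Gradient of the squared mismatch for pixel j
            # (the constant factor of 2 is folded into the rate).
            gradient = sum(residual[i] * W[i][j] for i in range(N_SIGNALS))
            image[j] -= rate * gradient
    return image

# The picture the "person" saw: a 3x3 plus sign, flattened row by row.
seen = [0, 1, 0,
        1, 1, 1,
        0, 1, 0]
recorded = signal_of(seen)  # reconstruct() only ever receives this signal

result = reconstruct(recorded)
print("mismatch before:", mismatch([0.5] * N_PIXELS, recorded))
print("mismatch after: ", mismatch(result, recorded))
```

In this toy the signal has fewer numbers than the image has pixels, so the result can only approximate the original picture, much like the fuzzy letter “A.”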

For now, it’s obviously not perfect, but it’s almost certain to be a deep learning use case that sees extensive development.

That’s when things get interesting.

One day, technology like this may turn our minds into projectors or allow us to share streaming footage of our actual dreams with one another. It’s difficult to imagine the ramifications of a technology that could make our brains the ultimate computer input device.

This could enable understanding without communication: the ability for humans to gain knowledge from machines or other humans instantly.

It could also destroy the idea of privacy, ruin poker forever, and start World War III, but that’s a different article.
