Rajat Harlalka is a Product Manager with over eight years of experience in the mobile industry, primarily in technology, product management and marketing roles.

We often see sci-fi movies portray smart devices that interact with human characters as if they were companions. With the increasing sophistication of devices, especially smartphones, this may become a reality sooner than most expect.

Voice has already started appearing in several mobile apps, and it presents a tremendous opportunity for developers. This may seem like a paradox: a few years ago, text messages and apps brought down voice revenues for operators. But now voice presents a plethora of opportunities for those same apps.

Why speech?

There are hundreds of apps that let users search, write emails, take notes and set appointments with their smartphone. But, for some people, the small size of a phone’s keyboard or touch screen can be limiting and difficult to use.

Speech is ideally suited to mobile computing, as users are usually on the move, and speech can be used not only to take commands but also to read out messages. Voice-based apps will also improve accessibility for people with disabilities. The technology isn’t quite there yet, as we see with Apple’s digital personal assistant, Siri. Still, her existence is a leap in that direction.

Progress in technologies needed to help machines understand human speech, including machine learning and statistical data-mining techniques, is fuelling the adoption of speech in smart devices.

Successful speech implementations

There have been several successful introductions of voice to apps. Ask.com, a web search engine focused on question answering, has integrated Nuance’s speech recognition technology into its iOS and Android apps. This integration lets users use their voice to ask and answer questions as well as comment on answers.

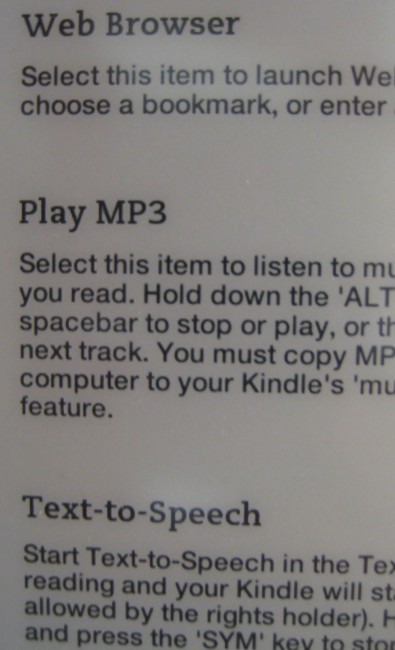

Amazon has also updated its Kindle iOS app with support for Apple’s “VoiceOver” reading and navigation feature for blind and visually impaired users of the iPhone and iPad.

Amazon mentioned that more than 1.8 million e-books will support the technology, which automatically reads aloud the words on the page. The decision to use Apple’s VoiceOver is notable in part because Amazon had previously acquired a company, IVONA Software, that provides Kindle “text to speech” and other features.

Earlier this year, Joyride, a startup that aims to make driving safe and social with voice-activated mobile apps, raised $1 million in funding. The venture aims to build a full-blown, rich mobile application that is 100 percent hands-free and brings all the fun social pieces of the Internet to you while you are driving.

Another startup, Nuiku, whose goal is to build apps that act as virtual assistants for specific roles, recently raised a $1.6 million seed round.

A recent survey by Forrester also indicates the growing popularity of voice control apps among mobile workers. A majority of those who use voice recognition use it to send text messages, 46 percent use voice for searches, 40 percent use it for navigation, and 38 percent use voice recognition to take notes.

Integrating speech

Speech technology is in fact built in two parts. The first is a speech synthesizer (often referred to as Text to Speech, or TTS for short) that the device or app can use to communicate with the user, for example to read text on demand or keep users informed about the status of a process.

The second is speech recognition, which allows users to talk to the app to send it commands or dictate a message or email, tasks we usually perform with a keypad. An ideal voice application should include both; for starters, however, it is a good idea to begin with one to get the knack of it.

At first glance, implementing a speech synthesizer/recognizer library may seem like a relatively simple task of determining the phonetic sounds of the input and outputting a corresponding sequence of audible signals. However, it is in fact quite difficult to produce intelligible and natural results in the general case.

This is because of linguistic and vocalization subtleties at various levels that human speakers take for granted when interpreting written text. In fact, the task requires a considerable grasp of both Natural Language Processing and Digital Signal Processing techniques.

Developing a worthwhile speech algorithm from scratch would take tens of thousands of programming hours, so it makes sense to use one of the several existing tools. There are a number of technologies in the marketplace to speech-enable apps. One thing to figure out before choosing your speech SDK is the deployment model. There are primarily two deployment models:

Cloud: In this case, the Automatic Speech Recognition (ASR) or Text to Speech (TTS) happens in the cloud. This offers a significant advantage in terms of speed and accuracy, and is the most commonly used mode.

This also means that the app will require an Internet connection at all times. On the positive side, the app will be lightweight.

Embedded: In an embedded mobile speech recognition or TTS, the entire process happens locally on the mobile device. When the voice feature is completely embedded, the app can work offline but becomes very heavy.

For instance, TTS engines use a database of prerecorded voice audio where there is a clip for every possible syllable. Using it offline means including all these clips inside your app. In the case of IVONA voices, developers can download voice data for US English (Kendra, female) or UK English (Amy, female); each has a data size of approximately 150 MB. Another advantage of embedded systems is that they are not affected by the latency involved in transmitting and receiving information from a server.
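The trade-off between the two models can be sketched as a small decision helper. This is only an illustration of the reasoning above, not part of any speech SDK: the names `Deployment`, `AppConstraints` and `chooseDeployment` are hypothetical, and the size figures are whatever the developer measures for the engine and voices they actually ship.

```typescript
// Hypothetical helper: picks a deployment model from the constraints discussed above.
type Deployment = "cloud" | "embedded";

interface AppConstraints {
  mustWorkOffline: boolean; // must the voice feature work without a connection?
  sizeBudgetMb: number;     // how much the app download is allowed to grow
  embeddedDataMb: number;   // size of the on-device engine plus voice data
}

function chooseDeployment(c: AppConstraints): Deployment | null {
  const embeddedFits = c.embeddedDataMb <= c.sizeBudgetMb;
  if (c.mustWorkOffline) {
    // Cloud is ruled out because it needs a connection at all times.
    // If the voice data does not fit either, neither model satisfies the constraints.
    return embeddedFits ? "embedded" : null;
  }
  // A connection can be assumed, so prefer the lightweight cloud option.
  return "cloud";
}

// Example: an offline-capable app with room for one 150 MB voice.
const choice = chooseDeployment({
  mustWorkOffline: true,
  sizeBudgetMb: 200,
  embeddedDataMb: 150,
});
console.log(choice);
```

A real decision would also weigh latency, accuracy and licensing cost, which this sketch leaves out.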

Popular speech libraries

Nuance is perhaps the most popular provider of speech libraries for mobile apps. Nuance’s app Dragon Dictation, a simple speech-to-text app that is free on iOS, is probably the best known speech app. The app sends a digital sample of your speech over the Internet to do the speech recognition, so it requires a wireless connection. But this process is speedy, and the app soon displays your transcribed text in the main window.

Apple, Google and Microsoft all now offer direct speech-to-text recognition in their mobile operating systems, and you can use those features wherever there is an option to type in text. One new API available to app developers in iOS 7 is Text to Speech.

In the past, developers would have to integrate their own text to speech solution, which adds time and cost to development and makes apps larger. With iOS 7, Apple is integrating an API that will allow developers to make their apps speak with just three lines of code. Not only will it allow iOS app developers to implement speech in their apps with ease, it will also work in Safari for developers building web applications.
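For the web side, speaking a string can indeed be very short. The sketch below assumes the browser exposes the standard Web Speech API (`speechSynthesis` and `SpeechSynthesisUtterance`); the article does not name the web API, so treat that as an assumption. `SpeechGlobals` and `speakText` are hypothetical names introduced here, and the availability check is there because not every environment provides speech synthesis.

```typescript
// Minimal shape of the browser globals this sketch relies on (assumed: Web Speech API).
interface SpeechGlobals {
  speechSynthesis?: { speak(utterance: unknown): void };
  SpeechSynthesisUtterance?: new (text: string) => unknown;
}

// Hypothetical helper: speaks `text` if speech synthesis is available.
// Returns true if the utterance was handed to the synthesizer, false otherwise.
function speakText(
  text: string,
  env: SpeechGlobals = globalThis as unknown as SpeechGlobals,
): boolean {
  if (!env.speechSynthesis || !env.SpeechSynthesisUtterance) {
    return false; // no speech support here, so the caller should fall back to text
  }
  const utterance = new env.SpeechSynthesisUtterance(text);
  env.speechSynthesis.speak(utterance);
  return true;
}

// In a supporting browser this queues the sentence for playback.
speakText("You have one new message.");
```

The `env` parameter exists only so the helper can be exercised outside a browser; in a page, the core of it is the two lines that create the utterance and pass it to `speak`.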

OpenEars is an offline, open source text-to-speech and speech-to-text library that supports Spanish as well as English. Like other offline libraries, it can drastically enlarge the app size (the OpenEars framework is more than 200 MB). However, developers can reduce their final app size by removing voices or any features of the framework that they aren’t using. Depending on which voices and features they use, they might see an app size increase of between 6 MB and 20 MB, unless they’re using a large number of the available voices.

Other popular SDKs include Ivona, iSpeech, Vocalkit and Acapela. These are online, paid offerings.

Based on their requirements, developers can select any of these libraries for their apps. The key metric to look at is the efficacy of the solution versus its total cost of ownership. As these tools become more adept at handling problems such as the cocktail party problem, context awareness, varied accents and stutters, the user experience of speech in apps will also improve.

Developers, however, need to take care when embedding speech. They shouldn’t use speech everywhere for now, and can start in places where the chances of error are low or where the impact will be high (e.g. filling in a form).

Trying to speech-enable the entire app at once can be too big a problem to solve. Rather, it makes sense to voice-enable a couple of the killer use cases first. Find out which methods or functions people use most in your app and voice-enable these first. It’s an iterative process, and the rest of the app can be speech-enabled or speech-controlled in a phase II or later development cycle.

Similarly, if the app does not contain a lot of text that needs to be read out, using simple audio clips may make more sense than using a TTS engine.

If you want to track just a few keywords, you should not look for a speech recognition API or service. This task is called keyword spotting, and it uses different algorithms than speech recognition. Speech recognition tries to find all the words that have been said, and because of that it consumes far more resources than keyword spotting. A keyword spotter only tries to find a few selected keywords or key phrases, so it is simpler and less resource-intensive.

Conclusion

The potential for voice in apps is immense. Uses range from language learning apps to aids for users with disabilities. In the future, wearable devices will fuel the adoption of speech in mobile apps. While it may be some time before speech replaces touch as the primary input to smartphone apps, developers need to start considering whether and how to add speech control to their apps to stay competitive.

When I was in college and often working on my laptop, my mom often remarked, “Kids today will forget how to write with a pen. They will just type.” Well, mom, tomorrow’s kids are not even going to type. They’ll just speak.

Image credit: Brian A Jackson/Shutterstock
