A couple of days ago the news broke that Apple had acquired indoor location startup WiFiSLAM. Since the company immediately pulled its website and Apple isn’t exactly forthcoming about its plans for its acquisitions, much of the chatter since then has been speculation.

But, thanks to a video of a detailed WiFiSLAM presentation (thanks Brian) at a Geo Meetup, we now have more information about the exact technologies that WiFiSLAM was using to pull off its indoor magic. We also know what SLAM means and why Apple was so interested in this particular company.

Apple has been gathering location data based on trilateration (or triangulation) of WiFi and cellular signals for some time. You may recall the location database mini-debacle of 2011, when it was made clear that Apple, Google and other companies used WiFi hotspots and cell towers to track users and improve their networks. That blew over in the end as the companies made better efforts to turn the data over and ensure that it was, indeed, anonymous and impossible to access outside of those ID-free stores.

But those tried and true trilateration methods continue to be in use today, mostly to augment the accuracy and speed of GPS locations. That’s where WiFiSLAM comes in. Its system uses a handful of technologies including WiFi signals, GPS and more to craft more accurate positions and maps, especially when users are indoors.
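For readers who haven't run into trilateration before, here is a minimal Python sketch of the general idea: given three transmitters at known positions and an estimated distance to each, you can solve for a 2D position. The function name, coordinates and distances are made up for illustration, and this is the textbook geometry, not a description of how Apple or WiFiSLAM implement it.

```python
from math import hypot

def trilaterate(anchors, distances):
    """Estimate (x, y) from three known anchor positions and the distance to each."""
    (x1, y1), (x2, y2), (x3, y3) = anchors
    d1, d2, d3 = distances
    # Subtracting the first circle equation from the other two
    # leaves two linear equations in x and y.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear, position is ambiguous")
    # Solve the 2x2 system with Cramer's rule.
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y

# Hypothetical hotspots at three corners of a 10 m room, device at (3, 4).
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
distances = [hypot(3, 4), hypot(7, 4), hypot(3, 6)]
position = trilaterate(anchors, distances)
```

In practice the distances come from noisy signal measurements, so real systems typically use more than three transmitters and a best-fit solution rather than an exact one.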

WiFiSLAM’s Jason Huang, a former Google intern, lays out the strengths and weaknesses of GPS and how it can be augmented with other systems.

The typical accuracy of GPS when it’s performing well is around 10 meters, points out Huang. This means almost nothing when it comes to traveling down a road in a car, but 10 meters vertically in a building could mean a difference of 6 floors.

“Getting the floor wrong by six or seven floors is a huge nuisance if you’re trying to do interesting things with location,” says Huang.

WiFiSLAM uses a combination of various methods to get better indoor locations. Obviously, WiFi and cell tower trilateration doesn’t work indoors. Instead, WiFi signals can be measured by any device to get an approximate location. In order for that location to be accurate, though, you have to use WiFi fingerprinting to get an idea of what the materials and construction of a particular building are going to do to WiFi signals. Enough scans in one place and you’ll have an accurate profile of a building that can be used to make a map.
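To make the fingerprinting idea concrete, here is a rough Python sketch that matches a fresh WiFi scan against stored per-location scans using a simple nearest-neighbor comparison. Everything in it is an assumption for illustration: the access point names, the signal strengths in dBm, the `MISSING_DBM` placeholder and the function names. Nearest-neighbor matching is a common baseline for this kind of problem, not necessarily what WiFiSLAM used.

```python
from math import sqrt

# Assumed placeholder strength for an access point that a scan did not see.
MISSING_DBM = -100.0

def signal_distance(scan_a, scan_b):
    """Euclidean distance between two scans, each a dict of access point -> dBm."""
    access_points = set(scan_a) | set(scan_b)
    return sqrt(sum(
        (scan_a.get(ap, MISSING_DBM) - scan_b.get(ap, MISSING_DBM)) ** 2
        for ap in access_points
    ))

def locate(fingerprints, scan):
    """Return the label of the stored fingerprint that best matches the scan."""
    return min(fingerprints, key=lambda label: signal_distance(fingerprints[label], scan))

# Hypothetical fingerprints recorded at two spots in a building.
fingerprints = {
    "lobby": {"ap1": -40.0, "ap2": -70.0},
    "cafe": {"ap1": -75.0, "ap2": -45.0, "ap3": -60.0},
}
best_match = locate(fingerprints, {"ap1": -72.0, "ap2": -50.0, "ap3": -65.0})
```

Note that the sketch only ever looks at signal strength, which is why hidden or password-protected hotspots are just as useful as open ones.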

The goal of WiFiSLAM and its SDK is “to try to make indoor location as accessible as possible to anyone who wants to try it,” says Huang.

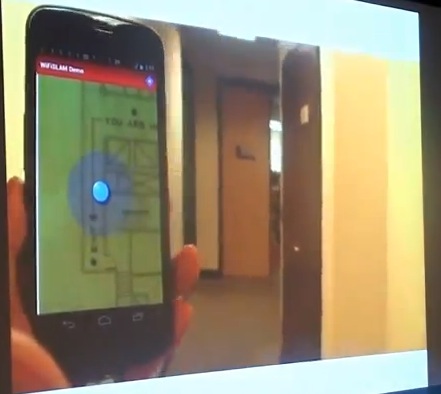

In around 90 seconds, you should be able to take a picture of a map, walk to one point of each location you want to log and upload it to a server. This process uses any hotspot that responds to probe requests. Those include hidden and password-protected hotspots, as you’re only using the strength of the signal.

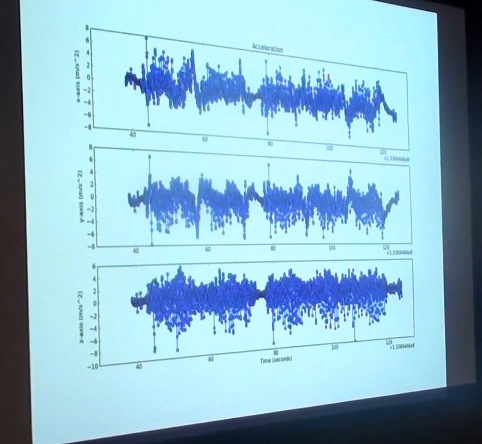

The SLAM acronym? That stands for Simultaneous Localization and Mapping. This encompasses WiFiSLAM’s way of gathering location and mapping information without recording any data at all and pairing it with more traditional methods. To do this, they record ‘trajectories’ from sensors on the phone, including the accelerometers, gyroscopes and magnetometers.

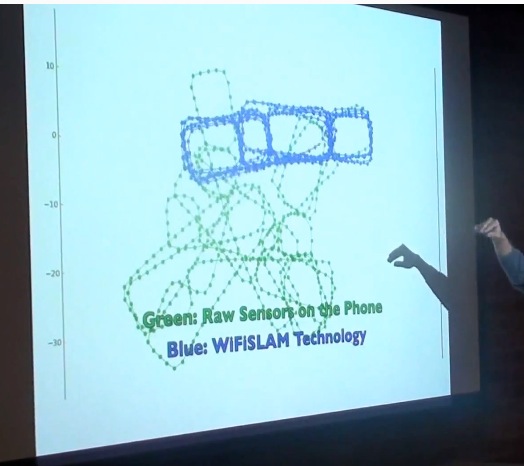

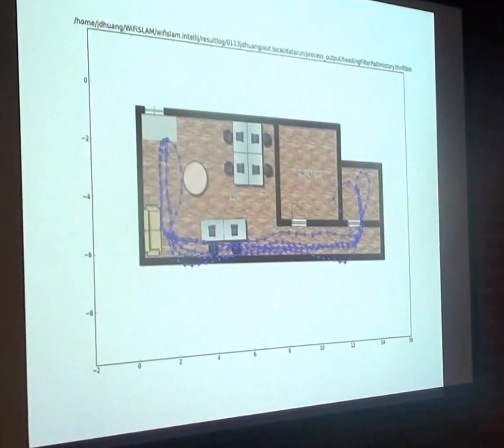

The green is raw trajectories, using just inertial sensors. These are the ones inside your phone, including your accelerometers. The blue is SLAM. If you record enough of these trajectories (note that it doesn’t have to be just one person, which is important) and mate them with WiFi data, you can start to build more accurate indoor maps.
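A raw inertial trajectory of this kind is usually built by dead reckoning: start somewhere, then add up each step's length and direction. The Python sketch below shows that general technique with invented headings and stride lengths. It is a simplified illustration, not WiFiSLAM's actual pipeline.

```python
from math import cos, radians, sin

def dead_reckon(steps, start=(0.0, 0.0)):
    """Build a path from (heading_degrees, stride_meters) pairs.

    Heading 0 points along the +x axis and increases counterclockwise.
    """
    x, y = start
    path = [(x, y)]
    for heading_deg, stride_m in steps:
        x += stride_m * cos(radians(heading_deg))
        y += stride_m * sin(radians(heading_deg))
        path.append((x, y))
    return path

# Hypothetical walk: two steps along a corridor, then a left turn and one more step.
path = dead_reckon([(0.0, 0.7), (0.0, 0.7), (90.0, 0.7)])
```

Because every new position is stacked on top of the previous estimate, small heading or stride errors pile up as the walk goes on, which is the usual reason a purely inertial track drifts and benefits from being corrected against other signals such as WiFi.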

To aggregate the data, you track the paths walked by various users (anonymously) and build a big database of paths and maps for the inside of buildings.

“The common wisdom,” says Huang, “is that smartphone sensors are cheap and that you can’t get any meaningful data from them.”

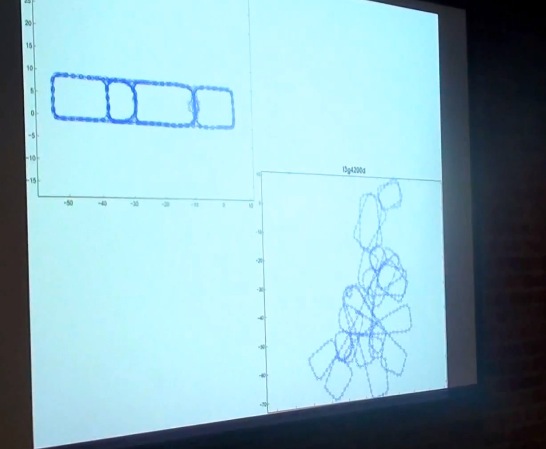

To illustrate, here’s a recording using off-the-shelf GPS software. The top is the actual path and the bottom is the recorded path. You can see that they don’t resemble one another very closely. The sensors aren’t accurate enough and they have too many limitations for any single one to give you an accurate enough profile.

But that’s where WiFiSLAM’s technology starts to get interesting. They use pattern recognition and machine learning to draw correlations between data gathered by all of the sensors in a device, not just one. And they mate that with WiFi trilateration to create accurate indoor maps.

Every time you take a step, for instance, a gyroscope inside a device could record an impact (if it has one). This creates a graph of steps that seems fairly useless on its own. Other sensors are also limited by their natures. An accelerometer can’t measure an angle, just a linear acceleration, for instance.
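As a rough picture of what a "graph of steps" means, the Python sketch below counts steps by looking for spikes in a stream of sensor readings. The sample values, threshold and spacing rule are invented for illustration, and a simple threshold like this is far cruder than the pattern recognition WiFiSLAM describes.

```python
def count_steps(samples, threshold=1.5, min_gap=3):
    """Count upward threshold crossings that are at least min_gap samples apart."""
    steps = 0
    last_step = -min_gap  # lets a crossing near the start of the trace count
    for i in range(1, len(samples)):
        crossed = samples[i - 1] < threshold <= samples[i]
        if crossed and i - last_step >= min_gap:
            steps += 1
            last_step = i
    return steps

# Hypothetical impact magnitudes: three footfalls show up as spikes above 1.5.
trace = [1.0, 1.0, 2.0, 1.0, 1.0, 1.0, 2.1, 1.0, 1.0, 2.2, 1.0]
steps_taken = count_steps(trace)
```

The `min_gap` rule is there so a single jolt that bounces across the threshold twice is not counted as two steps.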

“But people hold phones in front of them, and when you turn your body you get centrifugal acceleration,” Huang explains. This allows them to track anomalies in acceleration that mate with the data from the steps taken with the gyroscope to determine when people are making turns. In fact, WiFiSLAM has done enough work with its pattern recognition to be able to duplicate the quality of readings that you get with a gyroscope using just accelerometers.

“And if you have both of those on the phone,” says Huang, “you can do some really interesting things.”

There were other technologies being leveraged by WiFiSLAM, including packet timing to gather more accurate distances from hotspots (TDOA). They were also playing with using image recognition together with studies of human psychological decision-making to predict the routes you’d take through a building.
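TDOA stands for time difference of arrival. The basic arithmetic is simple: radio signals travel at the speed of light, so a difference in arrival times translates directly into a difference in distance. The Python sketch below shows only that conversion, with a made-up function name and timing value, and says nothing about how WiFiSLAM measured the timings.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def range_difference(arrival_a_s, arrival_b_s):
    """Convert two arrival times (in seconds) into a distance difference in meters."""
    return SPEED_OF_LIGHT_M_PER_S * (arrival_a_s - arrival_b_s)

# A hypothetical 10 nanosecond gap corresponds to roughly 3 meters.
gap_m = range_difference(10e-9, 0.0)
```

The takeaway is that indoor-scale accuracy needs timing that is good to a few nanoseconds, which is a large part of what makes this approach hard on ordinary hardware.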

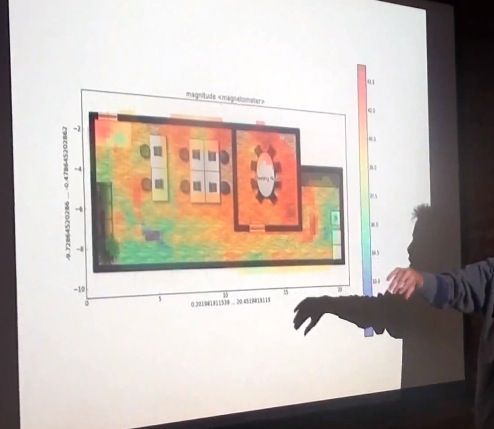

Then there’s the use of magnetometers to take magnetic field readings throughout a building. Poor for outdoor locations, but great for indoor work. Magnetic fields, Huang says, have as much variance and detail as WiFi when it comes to indoor location sensing.

The iPhone 5 and iPhone 4S, it is worth mentioning at this point, have a gyroscope, magnetometer and accelerometers on board.

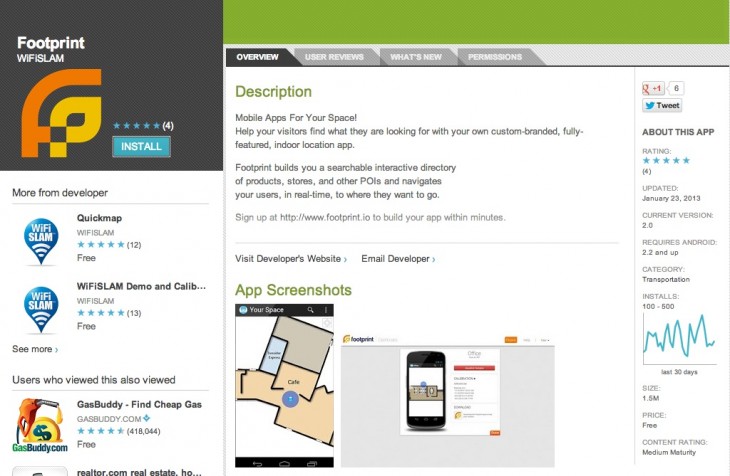

WiFiSLAM actually produced a proof-of-concept app called Footprint.io that used accelerometers to navigate indoor maps. It was published on the Google Play store and last updated in January, but has since been removed.

They were also looking into other areas that would work well with camera-centric devices like Google Glass. These computer vision arenas are still in the nascent stages, but the potential is crazy. Using a video feed as you walk through a building, the device tracks the pixels on the screen to map turns one way or another and forward progress. There’s also visual matching of textures and architectural details across a city in order to identify it, which is really cool.

But it’s safe to say that the internal tracking methods that WiFiSLAM had been developing are the primary interest for Apple. Obviously, it has been using triangulation based on WiFi and cell towers for some time to build out its traffic service.

Apple had been quietly building its own solution for maps since at least 2009. That is when it acquired small but innovative maps company Placebase and started up its own ‘GEO Team’. Since then, Apple has continued to make small purchases in the mapping arena, snagging Google Earth competitor Poly9 in July of 2010 and wicked 3D mapping company C3 Technologies in August of this year. During the location tracking brouhaha in 2011, Apple mentioned that it was gathering anonymous data for an ‘improved traffic service’. It launched that service with its new Maps in iOS 6.

Since then, Maps has taken some heat for its inaccurate database of places. Apple has been working with data providers, teams of its store employees tasked with improving results and other crowd-based sources like the ‘report a problem’ button in the Maps app to boost its accuracy. But indoor mapping, while definitely another area where Apple lags behind other companies like Google, is still very much a horse race.

If Apple were to bring technologies like the ones that WiFiSLAM was working on to the iPhone, it would gain an instant army of remote mapping drones. Everyone carrying an iPhone in a building would be charting at least a passive map of that location. Ever walk around carrying your phone in front of you? Yes, everyone does this at some point. Imagine if the sensors inside the device were measuring your footfalls and turns and matching them with hundreds of other paths to map out that building while you did so.

WiFiSLAM was very much building on the shoulders of others with its tech, but its unique mating of existing practices with machine learning and sensor leverage was likely attractive to Apple’s M&A team. It was a small company, without strong ties or a platform play, that brought together obviously brilliant people to hone a specific technology. That fits Apple’s acquisition profile to a T.

But it’s not just mapping that matters when it comes to location. The more accurate a location is, the more accurate every app on the device is. Adding more precision to an iPhone’s indoor location capabilities doesn’t just help Apple, it helps every developer and, hopefully, every user.

Now, we just have to wait to see exactly how Apple applies the tech, and whether it has a material effect on the accuracy of Maps.

Image Credit: Sean Gallup/Getty Images
